Can AI Agents Truly Run Unsupervised? The Honest Tech Reality

The hype surrounding Agentic AI is reaching a fever pitch. We’re seeing headlines like "AI Agents will replace software engineers" and "Autonomous agents navigating the complex web on their own."

But as developers and tech enthusiasts, we know that the real world isn't a sandbox. When we talk about "unsupervised" runtimes, we have to separate the science fiction from the current limitations of Large Language Models (LLMs).

Let's break down exactly what AI agents can manage autonomously, and where they still need a human hand on the wheel.

The Trap of the Term "Unsupervised"

First, we need to clarify what "unsupervised" means in the context of AI agents, because it's often misunderstood.

In machine learning, unsupervised learning refers to training a model on unlabeled data to find hidden structure. For an agent (a system that perceives its environment and takes actions), an unsupervised runtime means something different: the agent runs without a human approving each step. It does not mean the agent flies blind.

An agent running unsupervised requires:

- Baseline Safety Constraints: Instructions on what not to do (e.g., "Do not view adult content" or "Do not refund users who didn't buy the product").

- An Initial Goal: The agent needs to know why it was kicked off. If an AI agent wakes up with no objective, it becomes a noisy generator of hallucinations.
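The two requirements above can be written down as a launch spec. This is a minimal sketch, not a real framework API: `AgentSpec`, `forbidden_actions`, and `is_allowed` are hypothetical names chosen for illustration, and a real system would need far richer policies than an exact-match deny list.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical launch spec: what an agent needs before it runs unsupervised."""

    goal: str  # why the agent was kicked off
    forbidden_actions: frozenset = field(default_factory=frozenset)  # what it must never do

    def __post_init__(self):
        # Refuse to start an agent that has no objective.
        if not self.goal.strip():
            raise ValueError("an agent must be started with an explicit goal")

    def is_allowed(self, action: str) -> bool:
        # Simple deny list: anything not explicitly forbidden is allowed.
        return action not in self.forbidden_actions


spec = AgentSpec(
    goal="Resolve open refund tickets",
    forbidden_actions=frozenset({"refund_without_purchase"}),
)
```

A deny list is the simplest possible constraint. An allow list (only named actions may run) is usually the safer default, at the price of more setup.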

What They Can Do: Autonomous Tool Use

There is a distinct area where agents excel at autonomy today: Tool Use.

If you equip an agent with access to a search engine, a local file system API, or a database, you decouple its reasoning from data retrieval: the model decides what to look up, and the tool returns real data instead of a guess.

- The Scenario: An enterprise agent can autonomously query a SQL database to "find the top 5 customers with churn risk," aggregate the data, and summarize it for a report writer agent without human intervention.

- The Limit: The agent can execute the steps, but it still needs high-level supervision to ensure the goal remains aligned with business KPIs.
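As a rough illustration of the scenario above, here is a sketch of a database tool an agent could be handed. The table name, column names, and sample rows are invented for the example, and the `SELECT` prefix check is only a basic guard, not a complete security boundary.

```python
import sqlite3


def run_readonly_query(conn, sql, params=()):
    """Tool exposed to the agent: runs a query only if it starts with SELECT."""
    if not sql.lstrip().lower().startswith("select"):
        raise PermissionError("this tool only runs SELECT statements")
    return conn.execute(sql, params).fetchall()


def build_demo_db():
    """Create an in-memory database with made-up customers (illustrative data only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (name TEXT, churn_risk REAL)")
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [
            ("Acme", 0.91), ("Globex", 0.85), ("Initech", 0.40),
            ("Umbrella", 0.77), ("Hooli", 0.63), ("Stark", 0.12),
            ("Wayne", 0.55),
        ],
    )
    return conn


# The query the agent would generate for "top 5 customers with churn risk".
TOP_CHURN_SQL = (
    "SELECT name, churn_risk FROM customers "
    "ORDER BY churn_risk DESC LIMIT 5"
)

rows = run_readonly_query(build_demo_db(), TOP_CHURN_SQL)
```

The point of the wrapper is that the agent never holds a raw connection. It can read, but a hallucinated `DELETE` is rejected before it reaches the database.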

The Non-Negotiable Controls: Why Supervision is Still Critical

Even with the most advanced reasoning capabilities, allowing an AI agent to run completely on its own presents three major technical hurdles in the current stack.

1. The Cost of Tokens

LLMs are expensive. Every time an agent thinks, "I need to look up this URL, verify it, and extract info," it burns tokens.

An agent left running unsupervised for too long can inadvertently rack up a very large API bill. It might try 50 different paths to solve a simple puzzle before finding the right one. Without a supervisor monitoring the Token Budget or the Runtime Budget, you are gambling with company capital.
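One way to enforce a Token Budget and a Runtime Budget is a small guard the agent loop must call after every model request. This is a sketch under assumptions: `RunBudget`, `BudgetExceeded`, and `charge` are hypothetical names, and the token counts would come from whatever usage figures your LLM provider reports.

```python
import time


class BudgetExceeded(RuntimeError):
    """Raised when the agent should stop and hand control back to a human."""


class RunBudget:
    """Hypothetical guard that caps total tokens and wall-clock runtime."""

    def __init__(self, max_tokens, max_seconds, clock=time.monotonic):
        self.max_tokens = max_tokens
        self.max_seconds = max_seconds
        self._clock = clock          # injectable so the guard can be tested
        self._started = clock()
        self.tokens_used = 0

    def charge(self, tokens):
        """Record tokens spent on one model call; raise if either budget is blown."""
        self.tokens_used += tokens
        if self.tokens_used > self.max_tokens:
            raise BudgetExceeded(
                f"token budget exceeded: {self.tokens_used} > {self.max_tokens}"
            )
        elapsed = self._clock() - self._started
        if elapsed > self.max_seconds:
            raise BudgetExceeded(
                f"runtime budget exceeded: {elapsed:.1f}s > {self.max_seconds}s"
            )


budget = RunBudget(max_tokens=1_000, max_seconds=60)
budget.charge(400)   # first model call
budget.charge(500)   # second model call; a third 200-token call would raise
```

Raising an exception rather than silently stopping matters here: the failure shows up in logs and alerts, so a human finds out that the agent gave up and why.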

2. The Rate Limit Shark Tank

Almost every public API—whether it's OpenAI, Alpha Vantage, or weather services—has rate limits.

If an agent running unsupervised hits a rate limit, it relies on its internal knowledge to try to "reroute." However, if the API documentation has changed or the alternative endpoint it finds is invalid, the agent enters a loop of cascading errors. In a live environment, a supervisor is needed to intervene when 429 Too Many Requests or 404 Not Found errors start flooding the alerts.
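A common alternative to letting the agent improvise is a bounded retry with exponential backoff that escalates to a person once it runs out of attempts. The sketch below assumes the API client raises a `RateLimited` exception on an HTTP 429 response; that class, `NeedsHuman`, and `call_with_backoff` are hypothetical names, not part of any real client library.

```python
import time


class RateLimited(Exception):
    """Hypothetical stand-in for an HTTP 429 Too Many Requests response."""


class NeedsHuman(Exception):
    """Raised when retries are exhausted and a supervisor should step in."""


def call_with_backoff(call, max_attempts=4, base_delay=1.0, sleep=time.sleep):
    """Retry `call` on rate limits, doubling the wait each time, then escalate."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                break  # out of attempts: stop retrying instead of looping forever
            sleep(base_delay * 2 ** attempt)  # waits 1s, 2s, 4s, ...
    raise NeedsHuman(f"still rate limited after {max_attempts} attempts")
```

The hard cap is the important part. An agent that retries or reroutes without a limit is exactly the error cascade described above; an agent that stops and raises `NeedsHuman` turns the same failure into a single alert.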

3. Hallucination and Moral Hazard

This is the biggest barrier. An LLM doesn't know whether it's writing a code patch for a layer-1 blockchain or a joke email.

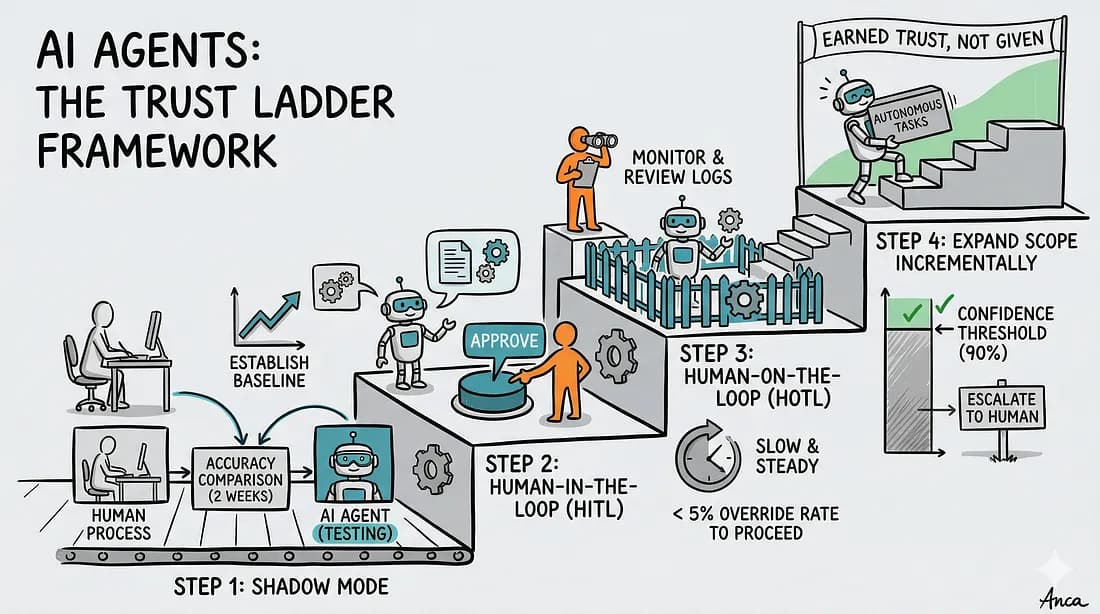

If we allow an agent to run unsupervised on execution tasks (like changing database values or sending email campaigns), we face a moral hazard: the agent takes the action, but it bears none of the consequences when the action is wrong. A "good enough" hallucination can lead to catastrophic production failures. Current architectures typically require a separate "Checker" agent or a human-in-the-loop pipeline to verify actions before they are committed.
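The verify-before-commit idea can be sketched as a gate that sits between the agent's proposal and the code that actually performs it. All names here (`ProposedAction`, `execute_with_gate`, the `approve` callback) are hypothetical. The `approve` function could be a human prompt or a second "Checker" agent; the sketch does not assume either.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    """Hypothetical description of something the agent wants to do."""

    name: str
    payload: dict
    destructive: bool  # True for writes, deletes, outbound email, and similar


def execute_with_gate(
    action: ProposedAction,
    executor: Callable[[ProposedAction], None],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Run read-only actions directly; require approval before destructive ones."""
    if action.destructive and not approve(action):
        return "rejected"  # nothing was committed
    executor(action)
    return "committed"


committed = []
read = ProposedAction("fetch_report", {"id": 7}, destructive=False)
wipe = ProposedAction("delete_rows", {"table": "orders"}, destructive=True)

# In this demo the reviewer rejects every destructive action.
result_read = execute_with_gate(read, committed.append, approve=lambda a: False)
result_wipe = execute_with_gate(wipe, committed.append, approve=lambda a: False)
```

Gating only destructive actions keeps the agent fast for reads while making sure a hallucinated write never reaches production without a second opinion.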

The Future: L0 to L4 Architecture

Much of the current work focuses on moving agents up through tiers of autonomy:

- L0 (No autonomy): Human plans, Human writes code.

- L1 (Task): Human outlines goal, AI executes tasks, Human reviews.

- L2 (Process): AI breaks down high-level goals, AI performs steps, Human steps in on failure.

- L3 (Job): AI owns the job end to end, Human works at a higher level of abstraction.

- L4 (Business Goal): True autonomy without the human in the loop.

Right now, we are solidly in L2. Agents are excellent at execution, but we are not ready for orchestration without supervision.

Conclusion

Will AI agents ever truly run unsupervised? Probably yes. As reasoning models improve and the cost of inference keeps falling, autonomous execution is likely to become the norm.

But until then? Treat your AI agents as Junior Developers. They can write the unit tests, they can refactor the code, and they can run the logic loops. Just make sure you're still looking over their shoulder to ensure they didn't accidentally delete the production database because they hallucinated a syntax error.
