
API Gateway Latency: Why Your Real-Time Voice Agent Might Be Misconfigured

🚀 Quick Answer

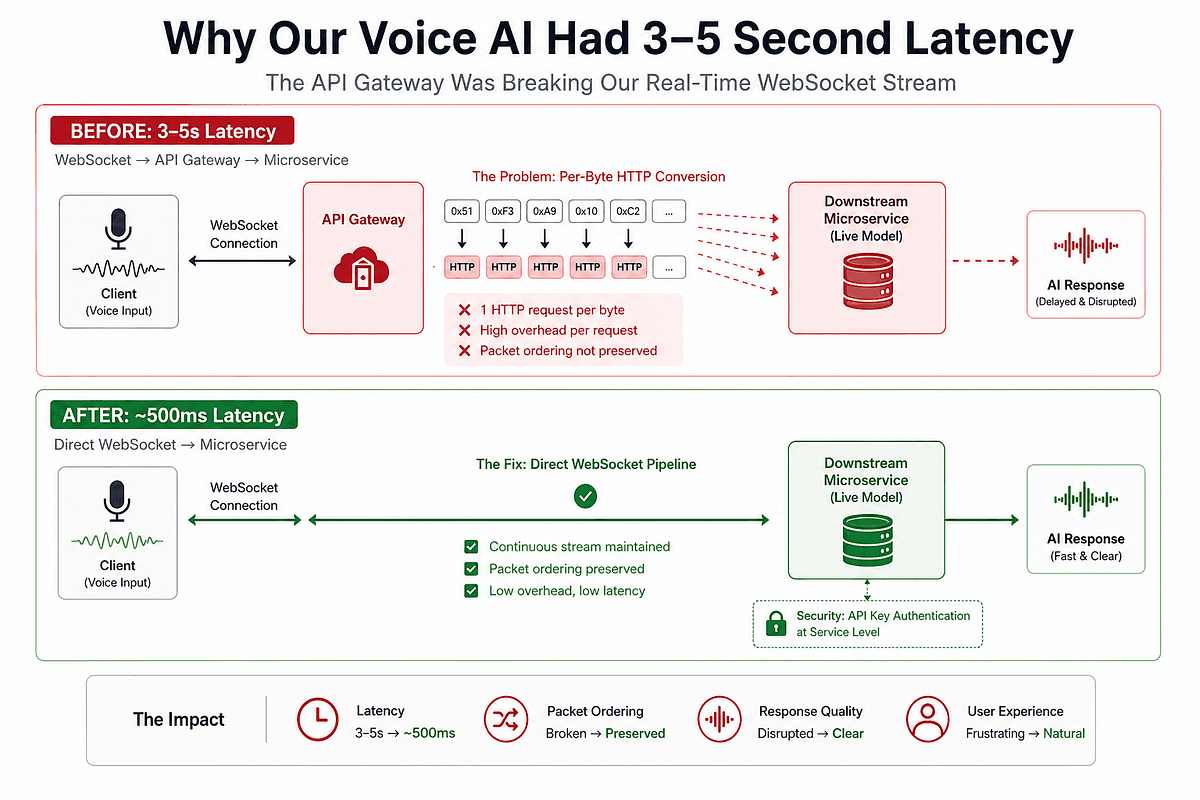

- A REST-oriented API Gateway can turn a WebSocket audio stream into slow, out-of-order HTTP requests, and that shows up as high latency.

- The Problem: An API Gateway intercepting audio bytes converts them into individual HTTP requests, causing massive overhead and breaking the strict message ordering that real-time voice models require.

- The Fix: Bypass the API Gateway for WebSocket traffic or use a reverse proxy specialized in stream processing to maintain microservice security without adding seconds of delay.

- The Rule: Do not apply REST-era architectural patterns to streaming protocols. Your transport layer is your real-time AI bottleneck, not your tokenizer.

🎯 Introduction

High API Gateway latency can instantly degrade your real-time voice AI experience from conversational to robotic. When we built our voice-to-voice agent, our first instinct for fixing slow response times was to optimize the LLM. That assumption blinded us to the infrastructure layer that was actually causing the delay.

Real-time voice AI requires sub-second latency, but when we routed our audio through a standard API Gateway, we introduced 3-5 seconds of delay that had absolutely nothing to do with the model's intelligence.

In this deep dive, we’ll break down the architectural mismatch happening behind the scenes and how to fix WebSocket streaming issues without sacrificing your security posture.

🧠 Core Explanation

The core issue wasn't the AI model—it was the transport protocol layer.

Most developers assume that standard enterprise infrastructure (like API Gateways) is a "neutral switch" for data. In reality, different protocols have specific architectural requirements. When we routed a WebSocket-based streaming model (which requires a persistent connection and strict packet ordering) through a REST-optimized API Gateway, we created a conflict.

The Gateway was designed for discrete HTTP requests: Go, Get Data, Return Response. But our voice agent required a continuous, ordered stream of audio packets. The Gateway couldn't handle the protocol mismatch, leading to execution overhead and packet desynchronization.
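To make the per-request cost concrete, here is a minimal back-of-the-envelope sketch. The function name and the numbers (100 chunks, 40 ms of setup cost) are hypothetical, chosen only for illustration and not measured from our system, and the model assumes chunks are handled one after another.

```python
def added_delay_ms(chunks: int, setup_ms: float, per_request: bool) -> float:
    """Total setup overhead added to a stream of `chunks` audio chunks.

    per_request=True  -> every chunk pays the setup cost (discrete HTTP requests)
    per_request=False -> the setup cost is paid once (one long-lived connection)
    """
    if chunks < 0 or setup_ms < 0:
        raise ValueError("chunks and setup_ms must be non-negative")
    if chunks == 0:
        return 0.0
    return setup_ms * chunks if per_request else setup_ms


# Hypothetical figures: 100 audio chunks, 40 ms of handshake/header cost each.
print(added_delay_ms(100, 40.0, per_request=True))   # 4000.0
print(added_delay_ms(100, 40.0, per_request=False))  # 40.0
```

Under these assumed numbers, the same setup cost lands in the multi-second range when paid per chunk and stays negligible when paid once.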

🔥 Contrarian Insight

"The moment you decide to route byte-stream protocols through a REST API Gateway, you have already engineered a latency anti-pattern into your real-time AI system."

Most engineering teams apologize for latency. I believe true engineering is identifying that the foundation of your system is fighting against the application requirements. You shouldn't scale the model to reduce latency; you should audit the transport layer first.

🔍 Deep Dive / Technical Details

The Architecture Mismatch

The mistake comes from misclassifying traffic types. The API Gateway is optimized for stateless HTTP transactions. The live multimodal model is designed for stateful, continuous sockets.

Here is what was happening inside the proxy:

- Packet Fragmentation: Netty (or a similar network layer) reads the audio stream in chunks (e.g., 128 KB buffers).

- Request Injection: The Gateway creates a new HTTP `POST`, `GET`, or `CONNECT` request for every single packet.

- Overhead Accumulation: Connection handshakes, TLS termination, buffering, and header parsing for hundreds of thousands of packets compound into seconds of delay.

- Order Violation: If packet 1 is sent and takes 50ms, and packet 2 is sent shortly after and takes 20ms, the downstream service receives them reversed.
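The order violation can be reproduced with a small simulation. This is a sketch, not the gateway's actual behavior: `Packet` and `arrival_order` are hypothetical names, and the 10 ms gap between the two sends is an assumption added to the 50ms/20ms example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    seq: int            # position in the original audio stream
    sent_at_ms: float   # when the client sent it
    transit_ms: float   # assumed per-request latency through the gateway


def arrival_order(packets: list[Packet]) -> list[int]:
    """Return sequence numbers in the order the downstream service sees them.

    Each packet travels as its own independent request, so its arrival time
    is its send time plus that request's transit time.
    """
    by_arrival = sorted(packets, key=lambda p: (p.sent_at_ms + p.transit_ms, p.seq))
    return [p.seq for p in by_arrival]


# Packet 1 is sent first but takes 50 ms; packet 2 leaves 10 ms later and takes 20 ms.
packets = [
    Packet(seq=1, sent_at_ms=0.0, transit_ms=50.0),
    Packet(seq=2, sent_at_ms=10.0, transit_ms=20.0),
]
print(arrival_order(packets))  # [2, 1]
```

On a single ordered connection, packet 2 cannot overtake packet 1, because the transport delivers bytes in the order they were written.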

The Fix: Direct Pipe vs. Security Layering

We fixed this by removing the gateway from the data path for WebSocket traffic while keeping security checks at the entry point.

Wrong (Latency Trap):

Client WS → API Gateway → [Parse Byte-by-Byte] → Downstream WS

Right (Streaming Optimization):

Client WS → [Direct Pipe] → Downstream WS + Gateway Handles TLS/Auth for Entry Point Only

This change reduced latency from 3–5 seconds to ~500ms. It also eliminated "broken sentences" caused by the model receiving audio input in the wrong order.

Tradeoff Analysis: Security vs. Speed

Removing the Gateway from the audio stream removes its centralized access control and rate-limiting capabilities.

The Dilemma:

- Without Gateway: Direct WebSocket connection is high-speed but requires explicit authentication logic inside the microservice.

- With Gateway: Slower, but provides unified security policies and DDoS protection.

Our Resolution: We moved API key/token validation to the top of the WebSocket listener, so access control is enforced in the application rather than in gateway policy. This kept the direct pipe while rejecting unauthenticated connections as close to the entry point as possible.
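A minimal sketch of that check, assuming the token arrives in an `Authorization: Bearer <token>` header on the upgrade request. `VALID_TOKENS`, `extract_bearer_token`, and `authorize_handshake` are hypothetical names for illustration; a real service would verify a signed token or consult a secrets store rather than a hard-coded set.

```python
import hmac
from typing import Optional

# Hypothetical token store, for illustration only.
VALID_TOKENS = {"demo-token-123"}


def extract_bearer_token(headers: dict[str, str]) -> Optional[str]:
    """Pull the token out of an 'Authorization: Bearer <token>' header."""
    normalized = {key.lower(): value for key, value in headers.items()}
    scheme, _, token = normalized.get("authorization", "").partition(" ")
    token = token.strip()
    if scheme.lower() != "bearer" or not token:
        return None
    return token


def authorize_handshake(headers: dict[str, str]) -> bool:
    """Decide whether to accept the WebSocket upgrade before reading any audio."""
    token = extract_bearer_token(headers)
    if token is None:
        return False
    # compare_digest avoids leaking how much of the token matched via timing.
    return any(
        hmac.compare_digest(token.encode(), valid.encode())
        for valid in VALID_TOKENS
    )


print(authorize_handshake({"Authorization": "Bearer demo-token-123"}))  # True
print(authorize_handshake({"Authorization": "Bearer wrong"}))           # False
```

Running the check once at the handshake means the per-chunk audio path carries no authentication cost.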

🧑‍💻 Practical Value: How to Debug Your Latency

If you are experiencing issues in a Real-time Voice AI deployment, follow this diagnostic workflow. Do not touch tuning parameters yet.

- Isolate the Ingress:

  - Temporarily disable your API Gateway for the specific WebSocket route.

  - Check whether latency drops to acceptable levels (e.g., < 500ms).

- Audit the Protocol:

  - Check if your model requires in-order delivery (protobuf-based streaming APIs and WebSocket streams usually do).

  - Check if your server framework (Node.js, Go, Java) is buffering data while waiting for the next packet, or pushing it immediately.

- Implement Protocol-Aware Routing:

  - Many modern edge proxies (such as Kong or Envoy) can proxy WebSocket upgrades natively. Configure the streaming route so that it skips request-buffering middleware and the standard response-timeout logic.
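The first two steps of the workflow can be turned into a small measurement helper. This sketch assumes the client stamps each audio chunk with a sequence number and a send time, the server records a receive time, and both timestamps come from the same or synchronized clocks. `ChunkRecord` and `audit_stream` are hypothetical names, and the sample values are made up.

```python
from statistics import median
from typing import NamedTuple


class ChunkRecord(NamedTuple):
    seq: int               # sequence number stamped by the client
    sent_at_ms: float      # client send timestamp
    received_at_ms: float  # server receive timestamp


def audit_stream(records: list[ChunkRecord]) -> dict[str, float]:
    """Summarize latency and ordering for chunks listed in arrival order."""
    if not records:
        raise ValueError("no records to audit")
    latencies = [r.received_at_ms - r.sent_at_ms for r in records]
    # A chunk is out of order if a higher sequence number arrived before it.
    out_of_order = 0
    highest_seen = records[0].seq
    for record in records[1:]:
        if record.seq < highest_seen:
            out_of_order += 1
        else:
            highest_seen = record.seq
    return {
        "median_latency_ms": median(latencies),
        "max_latency_ms": max(latencies),
        "out_of_order": out_of_order,
    }


# Made-up samples: the same three chunks through the gateway and on a direct path.
via_gateway = [
    ChunkRecord(2, 20.0, 3040.0),
    ChunkRecord(1, 0.0, 3100.0),
    ChunkRecord(3, 40.0, 3200.0),
]
direct = [
    ChunkRecord(1, 0.0, 120.0),
    ChunkRecord(2, 20.0, 150.0),
    ChunkRecord(3, 40.0, 160.0),
]
print(audit_stream(via_gateway))
print(audit_stream(direct))
```

Comparing the two summaries for the same route shows whether the ingress is responsible before you touch any model or tuning parameters.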

⚔️ Comparison: REST vs. WebSocket Architecture

It is crucial to choose the right tool for the job. Here is why you shouldn't force WebSocket traffic into a standard REST Request/Response architecture:

| Feature | REST API (Standard Gateway) | WebSocket (Direct Stream) |
|---|---|---|
| Use Case | CRUD, Search, Loading Pages | Voice AI, Gaming, Chat |
| State | Stateless | Stateful |
| Ordering | Independent requests | Strict in-order delivery required |
| Latency Cost | Connection and header overhead paid on every request | Handshake paid once, then small per-frame overhead |
| Protocol Suitability | REST-Era standard | AI-Era Real-time Streaming |

Winner: WebSocket for Streaming AI latency.

⚡ Key Takeaways

- Latency ≠ Intelligence: Voice agent slowness is often a transport issue, not a model tuning issue.

- Protocol Matters: You cannot use a REST API Gateway to proxy WebSocket byte-streams efficiently without specific streaming configurations.

- Order is Critical: Audio processing requires packets to arrive in the sequence they were sent. Reordering or heavy buffering in the gateway degrades the conversational quality.

- Audit First: Before optimizing inference, audit your pipeline. Check the transport layer.

- Security Trade-off: You can bypass the gateway for performance-critical streams, provided you enforce authentication in the service itself, close to the entry point.

🔗 Related Topics

- System Design: Building for Low-Latency AI Pipelines

- Why TCP Low Latency Matters for Interactive Voice Response

- Refactoring Monoliths: Moving from REST to GraphQL Subscriptions

🔮 Future Scope

As we move from "Chatbots" (text) to "Voice Agents" (audio), the architectural constraints will shift. We are likely to see a rise in "Streaming-Aware Load Balancers" that automatically detect WebSocket upgrades and route them to backend containers specialized in zero-copy data transfer without interrupting the packet stream.

❓ FAQ

Q: Does using a CDN help with API Gateway latency? A: Generally not. CDNs are built to cache static assets and terminate TLS for HTTP request/response traffic. Caching does nothing for a live audio stream, and a CDN layer that imposes idle timeouts or closes long-lived connections works against WebSocket streaming.

Q: How does removing the gateway affect DDoS protection? A: You must implement IP whitelisting or Cloudflare/AWS Shield rules at the DNS/WAF layer before the traffic hits your application servers.

Q: What is the typical latency budget for good voice AI? A: Generally, less than 300ms end-to-end (from user speaking to AI speaking) is considered "instant." 500ms is "fast," and anything above 800ms feels robotic.

Q: Is it bad practice to use API Gateways? A: No, but it is bad practice to force one gateway to handle every protocol. Use a WebSocket-aware gateway for streaming or bypass the gateway entirely for real-time data.

🎯 Conclusion

We fixed the 3-5 second latency not by changing the model, but by changing the network path. If you are building real-time AI, remember that the infrastructure is just as critical as the algorithm.

Audit your WebSocket paths today. The fix is usually architectural, not algorithmic.

(If you've solved the API Gateway vs. WebSocket trade-off in your production env, I'd love to hear your architecture in the comments.)
