
Claude Opus 4.7 vs OpenAI Codex: The $1M Coding AI Comparison (2026)

🚀 Quick Answer

In this Coding AI Comparison, the choice depends on whether you value raw intelligence or workflow connectivity.

- Claude Opus 4.7 is the stronger choice for corner-case handling, complex architectural reasoning, and final code quality, especially when your data has to stay private or on-prem.

- OpenAI Codex is the winner for speed-to-code and unstructured workflows that require web browsing, image generation, or OS-level control.

- The split: Anthropic wins on "Model Capability," OpenAI wins on "Ecosystem Infrastructure."

🎯 Introduction

April 16, 2026, was a pivot point. When Claude Opus 4.7 and OpenAI Codex dropped in the same 24 hours, the industry didn't just see two updates; it saw a declaration of war on different fronts. The Coding AI Comparison is no longer just about token prediction; it's about how models handle reality.

If you are trying to decide between a software assistant that "knows more" and one that can "do more," you are asking the wrong question. You need to understand the architecture behind each bet before you spend your dev budget.

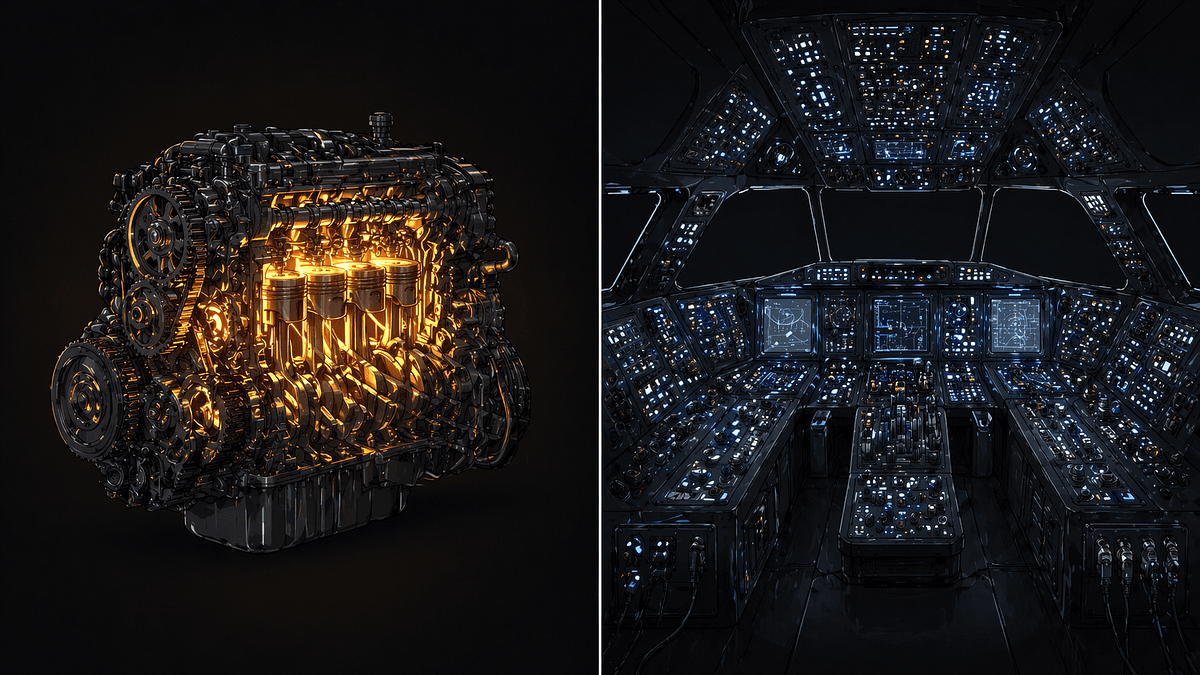

In this deep dive, we break down why Anthropic bet their future on a more sophisticated engine—Opus 4.7—while OpenAI bet on a multi-modal workstation—Codex.

🧠 The Core Philosophy: Smart Model vs. Wider Toolbelt

The fundamental difference in this Coding AI Comparison lies in the "Stack" each company has already built.

The "Anthropic Bet" (Claude Opus 4.7)

The philosophy is Silicon First. Anthropic believes that longer context windows, better reasoning chains, and "straight-line reasoning" (reducing hallucination fundamentally) will solve 90% of dev tasks.

- Improves: Final code review, complex logic debugging, and archaeology (reconstructing legacy codebases).

- Limitation: It is still a "thinking" model. It waits for you to prompt and refine.

The "OpenAI Bet" (Codex)

The philosophy is Agent First. OpenAI moved Codex from a strict chat interface to an "Orchestrator." It doesn't wait for prompts; it autonomously queries tools, switches contexts, and uses existing software.

- Improves: "Context switching" efficiency, building new UIs (using internal image generators), and interacting with local system APIs.

- Limitation: It requires tighter integration into your existing environment to be "safe."

🔍 Deep Dive: Architecture of the Beast

1. Reasoning vs. Execution

In a Coding AI Comparison, raw reasoning usually looks better on paper, but execution is what ships products.

- Opus 4.7 relies on a significantly expanded Mixture of Experts (MoE) architecture. It spends more tokens just thinking, then releases fewer tokens as code. This reduces extraneous iterations but requires the developer to verify basic syntax.

- Codex relies on a "Tool-Calling" loop. It runs a "Thinking" agent, then a "Tool-Using" agent. When you ask it to "fix the bug," it loops 50 times, autonomously calling `git log` and `cursor jump` (simulated internal actions). It trades calculation overhead for contextual memory.
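The tool-calling loop described above can be sketched in a few lines. This is a minimal illustration, not Codex's actual implementation: the tool names, the stubbed planner, and the canned tool outputs are all hypothetical stand-ins, and the 50-step cap simply mirrors the figure quoted above.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tools: the names and return values are stand-ins, not a real Codex API.
def git_log(arg: str) -> str:
    return "commit abc123: change discount rounding"

def read_file(arg: str) -> str:
    return "def discount(price): return price * 1.1  # bug: should be 0.9"

TOOLS: Dict[str, Callable[[str], str]] = {"git_log": git_log, "read_file": read_file}

def plan_next_step(history: List[Tuple[str, str]]) -> Tuple[str, str]:
    """Stub 'thinking' agent: choose the next tool based on what has already run."""
    seen = {name for name, _ in history}
    if "git_log" not in seen:
        return ("git_log", "")
    if "read_file" not in seen:
        return ("read_file", "pricing.py")
    return ("done", "")

def run_agent(max_steps: int = 50) -> List[Tuple[str, str]]:
    """Alternate between planning and tool use until the planner says 'done'."""
    history: List[Tuple[str, str]] = []
    for _ in range(max_steps):
        tool, arg = plan_next_step(history)
        if tool == "done":
            break
        history.append((tool, TOOLS[tool](arg)))
    return history

if __name__ == "__main__":
    for name, output in run_agent():
        print(name, "->", output)
```

The step cap matters: without it, a planner that never returns "done" would loop forever, which is one reason orchestrators of this kind usually bound their iterations.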

2. Context boundaries

- Opus 4.7 pushes the hard limit. It can now effectively read the entire frontend and backend logic of a 2-million-line codebase in a single sitting.

- Codex connects to RAG (Retrieval-Augmented Generation) systems. Its "intelligence" is actually your knowledge base. If your RAG is bad, Codex is stupid.
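To see why retrieval quality caps answer quality, here is a toy retriever that scores documents by keyword overlap. It is a deliberately crude sketch: real RAG systems typically use embeddings and a vector store, and the function names and sample knowledge bases below are invented for illustration.

```python
import re
from typing import List, Set

def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9_]+", text.lower()))

def retrieve(query: str, docs: List[str], top_k: int = 1) -> List[str]:
    """Return up to top_k docs sharing at least one token with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d)), d) for d in docs]
    scored = [(score, d) for score, d in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:top_k]]

# Hypothetical knowledge bases.
good_kb = [
    "The retry policy for the payments service is three attempts with backoff.",
    "Frontend builds use the shared lint config.",
]
bad_kb = ["Office parking rules", "Friday lunch menu"]

if __name__ == "__main__":
    query = "what is the retry policy for payments"
    print(retrieve(query, good_kb))  # the relevant document is found
    print(retrieve(query, bad_kb))   # nothing relevant: the model gets no grounding
```

With the second knowledge base the retriever returns nothing, so whatever the model says next is unsupported by your data. That is the "if your RAG is bad" failure mode in miniature.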

3. Security & Cost Implications

This is where the user experience changes.

- Opus 4.7 pricing is straightforward: you pay per input and output token. Expensive for massive prompts, cheap for complex reasoning.

- Codex pricing is usage-based: you pay for filesystem audits and API calls. For a heavy workflow, Codex becomes surprisingly expensive, often costing 5x more than Opus for the same task.
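A rough way to reason about the gap is to model both bills. The sketch below assumes, purely for illustration, that an agent loop re-sends context on every step and may add a per-call tool fee. Every price and token count here is an invented placeholder, not a published rate from either vendor.

```python
from dataclasses import dataclass

@dataclass
class TokenPricing:
    input_per_mtok: float   # dollars per million input tokens (placeholder)
    output_per_mtok: float  # dollars per million output tokens (placeholder)

def per_token_cost(p: TokenPricing, input_tokens: int, output_tokens: int) -> float:
    """Cost of a single request billed per input and output token."""
    return (input_tokens * p.input_per_mtok + output_tokens * p.output_per_mtok) / 1_000_000

def agent_loop_cost(p: TokenPricing, steps: int, input_tokens_per_step: int,
                    output_tokens_per_step: int, tool_fee_per_call: float) -> float:
    """Cost of a loop where every step is billed for tokens plus a tool fee."""
    per_step = per_token_cost(p, input_tokens_per_step, output_tokens_per_step)
    return steps * (per_step + tool_fee_per_call)

if __name__ == "__main__":
    pricing = TokenPricing(input_per_mtok=10.0, output_per_mtok=30.0)  # placeholders
    single = per_token_cost(pricing, input_tokens=200_000, output_tokens=5_000)
    looped = agent_loop_cost(pricing, steps=50, input_tokens_per_step=20_000,
                             output_tokens_per_step=500, tool_fee_per_call=0.0)
    print(f"single request: ${single:.2f}")
    print(f"50-step loop:   ${looped:.2f}")
    print(f"ratio:          {looped / single:.1f}x")
```

The placeholder inputs were chosen so the ratio lands near the 5x figure quoted above. Plug in your own prices and step counts before drawing conclusions, because the result is very sensitive to how much context each step carries.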

🏗️ System Design: How They Work in Production

If you are integrating one into your backend, here is the architectural difference.

The Claude Workflow

Client (VS Code) -> API Gateway -> Anthropic Guardrails -> Opus 4.7 Embedding Layer -> SQL/Code Output -> Response.

The Advantage: Simplicity. There is no orchestration middleware. The Downside: If the generated code contains a syntax error, it fails. The model doesn't try to "apply" the fix; it just dumps the answer.
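Because nothing in this pipeline validates the output, the check has to live on your side. One minimal option, assuming the model returned Python source, is to parse it with the standard library's `ast` module before applying it. The helper name is hypothetical, and this only catches syntax errors, not logic bugs.

```python
import ast
from typing import Optional

def syntax_error_in(code: str) -> Optional[str]:
    """Return a short error description if the code fails to parse, else None."""
    try:
        ast.parse(code)
    except SyntaxError as exc:
        return f"line {exc.lineno}: {exc.msg}"
    return None

if __name__ == "__main__":
    good = "def add(a, b):\n    return a + b\n"
    bad = "def add(a, b:\n    return a + b\n"
    print(syntax_error_in(good))  # None
    print(syntax_error_in(bad))   # a message pointing at the broken line
```

For other languages the same idea applies with that language's parser or compiler in check-only mode.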

The Codex Workflow

Client -> OpenAI Control Plane -> Reasoning Loop -> Tool Registry (FileSys, Web, DevOps APIs) -> Response.

The Advantage: Resilience. If the code is wrong, Codex retries on its own. If the file doesn't exist, it looks for it. The Downside: Latency. You are dealing with nested async loops. Waiting for Codex to "decide" to check git history might result in a 45-second wait.
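If you put an agent loop behind a user-facing request, it helps to cap how long you are willing to wait. The sketch below uses `asyncio.wait_for` from the standard library. The `slow_tool_loop` coroutine is a hypothetical stand-in for whatever call drives the agent, and the budget values are arbitrary.

```python
import asyncio

async def slow_tool_loop(delay_s: float) -> str:
    """Stand-in for an agent run that takes delay_s seconds to finish."""
    await asyncio.sleep(delay_s)
    return "patch ready"

async def run_with_budget(delay_s: float, budget_s: float) -> str:
    """Wait for the agent, but give up once the latency budget is spent."""
    try:
        return await asyncio.wait_for(slow_tool_loop(delay_s), timeout=budget_s)
    except asyncio.TimeoutError:
        return "timed out"

if __name__ == "__main__":
    print(asyncio.run(run_with_budget(delay_s=0.01, budget_s=1.0)))
    print(asyncio.run(run_with_budget(delay_s=1.0, budget_s=0.01)))
```

On timeout, `wait_for` cancels the underlying task, so a real integration should make sure half-applied changes are safe to abandon.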

⚔️ Detailed Comparison Table

| Feature | Claude Opus 4.7 | OpenAI Codex |
|---|---|---|
| Primary Strength | Code Quality & Debugging | Context Switching & Discovery |
| Architecture | Pure LLM (Mixture of Experts) | Autonomous Orchestrator + LLMs |
| Context Window | Massive (unlimited for practical coding) | Distributed (via RAG / Tools) |
| Tool Use | Limited (CLI mostly) | Extensive (90+ tools, CTR, Image Gen) |
| Output Style | Precise, direct "Copy-Paste" code | Experimental, iterative, "Fixes-it-myself" |
| Best For | Architectural refactoring | Building fresh features rapidly |
| Privacy | Higher (Data stays in Anthropic) | Variable (Depends on deployed OS tools) |

🧑‍💻 Practical Value: Which One Should YOU Use?

Scenario A: You are debugging a legacy monolith.

- Winner: Claude Opus 4.7.

- Why: Legacy code is messy. You need a model that can stare at broken logic and instantly find the missing variable. Codex will waste time browsing documentation trying to find formatting standards you don't care about.

Scenario B: You are building a startup MVP from scratch.

- Winner: OpenAI Codex.

- Why: You don't care about obscure library details. You want the bot to spin up the DB, draw the UI icons, and write the React components using random libraries that just happen to work. Speed wins here.

Scenario C: Enterprise Security Compliance.

- Winner: Claude Opus 4.7.

- Why: You cannot feed proprietary source code into a system that has "file system write access," even temporarily. The attack surface is too large.

In real-world usage, developers often build "Hybrid Agents."

- Use Claude Opus 4.7 to write the "Clean Code" logic (Requirement #1: Quality).

- Use OpenAI Codex to format the commit messages and version history (Requirement #2: Automation).
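A hybrid setup like this usually comes down to a routing table. The sketch below maps the scenarios from this section to a backend. The task labels and backend names are hypothetical identifiers for illustration, not real model IDs, and the default is an arbitrary choice.

```python
from typing import Dict

# Hypothetical task labels mapped to backends, following the scenarios above.
ROUTES: Dict[str, str] = {
    "legacy_debugging": "opus",
    "security_sensitive": "opus",
    "clean_code_logic": "opus",
    "mvp_scaffolding": "codex",
    "commit_messages": "codex",
}

def route_task(task_kind: str, default: str = "opus") -> str:
    """Pick a backend for a task, falling back to the default for unknown kinds."""
    return ROUTES.get(task_kind, default)

if __name__ == "__main__":
    for kind in ("legacy_debugging", "commit_messages", "something_else"):
        print(kind, "->", route_task(kind))
```

Keeping the mapping in data rather than in branching code makes it easy to revisit as pricing or capabilities change.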

🔥 Contrarian Insight

Most articles will tell you to pick the newest model that scores higher on the LMSYS Chatbot Arena. That is a trap.

In a Coding AI Comparison, the "Arena Score" is a vanity metric based on chitchat. It measures how well the model talks. It does not measure how well the model survives a 3am production deployment where you copy-pasted a 400-line snippet.

The AI arms race isn't about who can write the best Shakespearean sonnet anymore. It's about who can handle the noise.

"Smart models fail when code breaks; Workflow models fail when the prompt was incomplete. In production, workflow is rare; broken code is the default state."

⚡ Key Takeaways

- Opus 4.7 is the "Senior Engineer in a Box." Smarter, but requires you to drive.

- Codex is the "Junior Dev on Stimulants." Fast, messy, but self-correcting.

- Cost isn't linear. Complex workflows that lean on Codex tool use often cost about 5x what Opus does for the same task.

- Don't judge by benchmarks; judge by specificity (Specificity > Accuracy). Does it do exactly what you asked, or does it do a lot of things and get 1% right?

🔗 Related Topics

- How to architect an AI monitoring stack for 2026 (Technical Deep Dive)

- Privacy in the Age of Agentic AI: Risks Explained (Security Guide)

- Meta Llama 4 vs GPT-5: The Open Weights Battleground (Comparison)

❓ FAQ

Q: Is Opus 4.7 better than Codex for coding? A: For final code output, Opus 4.7 is objectively better because it maintains cohesive logic. Codex is better for generation (starting from nothing).

Q: Which one handles long context better? A: Claude Opus 4.7. It has a native 1M+ context window. Codex relies on retrieval; if your vector DB times out, Codex hallucinates.

Q: Did the "Same Day" release mean they were copycats? A: Unlikely. It highlights "Convergent Evolution." Both realized that simple chatbots were reaching the intelligence ceiling (unknown hump). The next step is both tool usage and intelligence.

Q: Should I pay for both? A: If you are solo, just Opus 4.7. You will be unhappier, but richer. If you are managing a team, Codex might look impressive in demos, but Opus will keep your repo clean.

🎯 Conclusion

The headline of this Coding AI Comparison is clear: You are no longer choosing an AI; you are choosing an operating philosophy.

If you need high-fidelity results where quality is non-negotiable, Claude Opus 4.7 is your engine. If you need velocity and "smart automation" where error rate is acceptable, OpenAI Codex is your machine.

Which side of the bet are you on in 2026? Let me know in the comments.
