
Deep Learning vs Machine Learning: The Definitive Guide to Artificial Neural Networks


🚀 Quick Answer

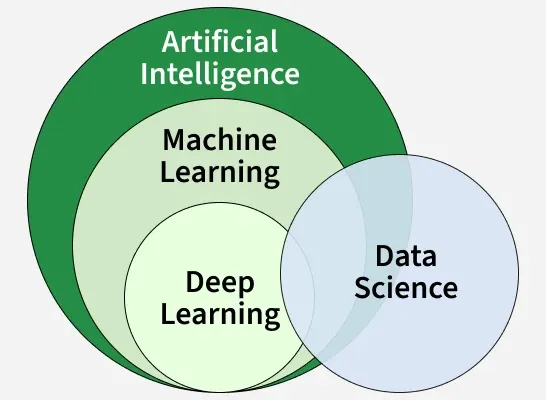

- Deep Learning (DL) is a specialized subset of Machine Learning (ML) that uses Artificial Neural Networks (ANNs) with many layers ("deep" architecture) to learn from vast amounts of data.

- It excels at unstructured data like images, audio, and text, whereas traditional ML struggles with raw sensory inputs without heavy manual feature engineering.

- Its key limitations are heavy demand for computing power (typically GPUs) and poor interpretability (the "black box" problem), both major bottlenecks for enterprise deployment.

🎯 Introduction

Deep learning is reshaping how software processes images, speech, and text, and understanding it is increasingly important for modern developers. Unlike traditional machine learning, which relies on hand-engineered features, deep learning uses artificial neural networks, layered models loosely inspired by the brain, to learn features directly from data. If you are a developer looking to understand the engine behind ChatGPT or Tesla Autopilot, deep learning concepts are no longer optional; they are essential in the current tech landscape.

🧠 Core Explanation

At a fundamental level, deep learning is about teaching computers to derive meaning from raw input. Loosely inspired by the brain's neurons, a neural network is organized into layers:

- Input Layer: Receives raw data (pixel values from an image, word tokens from a sentence).

- Hidden Layers: These act as the "processor." In deep learning, we stack dozens or even hundreds of these layers. Lower layers identify simple patterns (edges, basic sounds), and higher layers combine them into complex patterns (faces, concepts).

- Output Layer: Produces the final prediction or decision.
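The three layer types above can be sketched as a single forward pass in plain Python. This is a minimal illustration, assuming a tiny fully connected network (2 inputs, 2 hidden neurons, 1 output) with hand-picked, hypothetical weights; the helper names `dense` and `forward` are illustrative, not from any library.

```python
import math


def relu(x):
    """Hidden-layer activation: keeps positive values, zeroes out negatives."""
    return max(0.0, x)


def sigmoid(x):
    """Output activation: squashes a raw score into a probability in (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases, activation):
    """One fully connected layer: each neuron takes a weighted sum of all inputs plus a bias."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def forward(x):
    # Hypothetical, hand-picked weights; a real network learns these during training.
    hidden = dense(x, weights=[[0.5, -0.2], [0.3, 0.8]], biases=[0.1, -0.1], activation=relu)
    output = dense(hidden, weights=[[1.0, -1.5]], biases=[0.2], activation=sigmoid)
    return output[0]


print(forward([1.0, 2.0]))  # input layer -> hidden layer -> output probability
```

Stacking more `dense` calls between input and output is, structurally, what makes a network "deep."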

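Learning happens through backpropagation, an algorithm that computes how much each weight contributed to the error (the gradient of the loss). An optimizer such as gradient descent then adjusts the weights to reduce that error. Repeated over many examples, this lets the model capture complex structure in the data.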
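Backpropagation plus gradient descent can be worked through by hand for a tiny network. This minimal sketch assumes a hypothetical two-layer network with one weight per layer, `y = w2 * relu(w1 * x)`, trained with squared error on a single made-up example; it applies the chain rule manually, which is what frameworks like PyTorch automate when you call `loss.backward()`.

```python
def relu(z):
    return max(0.0, z)


def loss_fn(w1, w2, x, target):
    """Squared error of a tiny two-layer network: y = w2 * relu(w1 * x)."""
    y = w2 * relu(w1 * x)
    return (y - target) ** 2


def backprop(w1, w2, x, target):
    """Return (dLoss/dw1, dLoss/dw2) using the chain rule, layer by layer."""
    # Forward pass: keep intermediate values for the backward pass.
    z = w1 * x
    h = relu(z)
    y = w2 * h
    # Backward pass: start at the loss and move toward the input.
    dloss_dy = 2 * (y - target)
    dloss_dw2 = dloss_dy * h                       # because y = w2 * h
    dloss_dh = dloss_dy * w2
    dloss_dz = dloss_dh * (1.0 if z > 0 else 0.0)  # ReLU passes gradient only when z > 0
    dloss_dw1 = dloss_dz * x                       # because z = w1 * x
    return dloss_dw1, dloss_dw2


# Hypothetical starting weights and a single training example.
w1, w2, x, target = 0.5, 0.5, 2.0, 3.0
learning_rate = 0.05

for _ in range(50):
    g1, g2 = backprop(w1, w2, x, target)
    # Gradient descent: step each weight against its gradient.
    w1 -= learning_rate * g1
    w2 -= learning_rate * g2

print("final loss:", loss_fn(w1, w2, x, target))
```

A quick sanity check for any hand-written gradient is to compare it with a finite-difference estimate of the loss; the two should agree closely.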

🔥 Contrarian Insight

Deep Learning is often mistaken for "Artificial Intelligence" itself. In truth, AI is the umbrella term for machines simulating intelligence, and Machine Learning is the subset of AI covering algorithms that learn from data. Deep Learning is one specific path within ML: an expensive, data-hungry, "black-box" path that dominates media headlines but is overkill for many business problems, where simple rules or classical ML models work better.

If you are building an application today, do not default to Deep Learning. Default to logic. Reach for Deep Learning only when you have large amounts of data and no good hand-crafted features.

🔍 Deep Dive: The Architecture of Intelligence

To understand deep learning from a developer's perspective, look past the hype and examine the math and architecture.

1. The "Deep" in Deep Learning

Why is it called "Deep"? It refers to the number of hidden layers between input and output. A shallow network might have 1 or 2 layers; a deep network might have 20 or 50. Why is the extra depth powerful? It allows the network to learn hierarchical features.

- Layer 1: Detects lines.

- Layer 5: Detects eyes.

- Layer 12: Detects a "face." (These layer numbers are illustrative.) This is loosely analogous to how the brain's visual system processes input in stages, building more abstract representations at each level.
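Each extra hidden layer also adds weights the network must learn. A minimal sketch, assuming plain fully connected layers and hypothetical layer sizes for 28x28 grayscale images (784 pixels) with 10 output classes; `count_parameters` is an illustrative helper, not a library function.

```python
def count_parameters(layer_sizes):
    """Count weights and biases in a fully connected network.

    layer_sizes lists neurons per layer, e.g. [784, 128, 10] means
    784 inputs, one hidden layer of 128 neurons, and 10 outputs.
    """
    total = 0
    for fan_in, fan_out in zip(layer_sizes, layer_sizes[1:]):
        total += fan_in * fan_out  # one weight per connection between adjacent layers
        total += fan_out           # one bias per neuron in the receiving layer
    return total


# Hypothetical architectures: same input and output, different depth.
shallow = [784, 128, 10]
deep = [784, 128, 128, 128, 128, 10]

for name, sizes in [("shallow", shallow), ("deep", deep)]:
    hidden_layers = len(sizes) - 2
    print(f"{name}: {hidden_layers} hidden layer(s), {count_parameters(sizes):,} parameters")
```

More parameters give the network more capacity to build hierarchical features, but they also need more training data to be tuned without simply memorizing the training set.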

2. The Trade-Offs (The "Black Box" Problem)

- Pros: State-of-the-art accuracy on complex problems such as vision, speech, and language.

- Cons: Explainability. When a medical deep learning model flags a patient's scan as high-risk, engineers often cannot explain why: the decision is spread across millions of weights that are hard to trace.

🧑‍💻 Practical Value for Developers

Real-World Implementation:

Deep learning frameworks (PyTorch/TensorFlow) are powerful, but developers often complain about their library overhead. Here is the workflow I use for deploying deep learning models:

- Data Ingestion: For large datasets, avoid raw CSVs. Use binary formats such as Parquet (columnar) or HDF5 (array-oriented), which load faster and preserve data types.

- Preprocessing: This is often where most of your time goes: data augmentation for images, tokenization for text.

- Training: Use a deep learning framework such as PyTorch or TensorFlow.

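- Python Example (a PyTorch sketch; `model`, `dataloader`, and `num_epochs` are assumed to be defined elsewhere):

```python
import torch
from torch import nn

# Conceptual training loop. Assumes `model`, `dataloader` (yielding
# (inputs, labels) batches), and `num_epochs` are defined elsewhere.
loss_fn = nn.CrossEntropyLoss()  # example loss for a classification task
optimizer = torch.optim.SGD(model.parameters(), lr=0.01)

for epoch in range(num_epochs):
    for inputs, labels in dataloader:
        optimizer.zero_grad()                # clear gradients from the previous step
        predictions = model(inputs)          # forward pass: the "deep" math
        loss = loss_fn(predictions, labels)
        loss.backward()                      # backpropagation: compute gradients
        optimizer.step()                     # update weights
```

- Inference Optimization: In production, deep learning models are often quantized (stored and computed at reduced precision, e.g., 8-bit integers instead of 32-bit floats) so they can run on edge devices, not just on large cloud servers.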
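The idea behind quantization can be sketched in a few lines. This is a minimal illustration, assuming simple symmetric, per-tensor int8 quantization applied to a hypothetical list of weights; production toolchains use more sophisticated schemes, and the helper names here are illustrative.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: one shared scale maps floats to [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range used here.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.003, 0.5, -0.31]  # hypothetical trained weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print("int8 values:", q)
print("max rounding error:", max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight now fits in 1 byte instead of the 4 bytes of a float32, a 4x storage reduction, at the cost of a rounding error of at most half a quantization step (`scale / 2`) per weight.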

Mistakes to Avoid:

- Garbage In, Garbage Out: Deep Learning requires massive datasets. If your data is biased or messy, your model will be too.

- Overfitting: The model memorizes the training data but fails on new data. Always set aside a validation set.
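Setting aside a validation set, and watching its loss during training, can be sketched in plain Python. This assumes hypothetical helper names (`train_validation_split`, `is_overfitting`) and made-up loss curves; the overfitting signal shown (training loss still falling while validation loss rises for several epochs) is one common heuristic, not the only one.

```python
import random


def train_validation_split(examples, validation_fraction=0.2, seed=42):
    """Shuffle a copy of the data and hold out a fraction for validation."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_val = int(len(shuffled) * validation_fraction)
    return shuffled[n_val:], shuffled[:n_val]


def is_overfitting(train_losses, val_losses, patience=3):
    """Flag overfitting when training loss keeps falling but validation loss
    has risen for `patience` consecutive epochs."""
    if len(val_losses) <= patience:
        return False
    recent_val = val_losses[-(patience + 1):]
    recent_train = train_losses[-(patience + 1):]
    val_rising = all(a < b for a, b in zip(recent_val, recent_val[1:]))
    train_falling = all(a > b for a, b in zip(recent_train, recent_train[1:]))
    return val_rising and train_falling


train, val = train_validation_split(range(100))
print(len(train), len(val))

# Hypothetical loss curves: validation loss turns upward after epoch 3.
train_losses = [0.9, 0.6, 0.4, 0.3, 0.2, 0.15, 0.1]
val_losses = [0.95, 0.7, 0.55, 0.5, 0.52, 0.56, 0.61]
print(is_overfitting(train_losses, val_losses))
```

In practice, you would stop training (or keep the checkpoint with the lowest validation loss) once this signal appears.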

⚔️ Comparison: Deep Learning vs. Machine Learning

Use this comparison as a rough guide when choosing a tool for your stack:

| Feature | Deep Learning (DL) | Machine Learning (ML) |
|---|---|---|
| Data Requirement | Typically tens of thousands to millions of examples | Often hundreds to thousands of examples |
| Feature Engineering | Automatic (learned from raw data) | Manual (engineer designs the features) |
| Hardware | Usually GPU/TPU (expensive) | CPU is often enough (relatively cheap) |
| Interpretability | Low ("black box") | Often high (e.g., linear models, decision trees) |
| Speed of Training | Slow | Fast |
| Best Use Case | Computer vision, NLP (GenAI) | Spam filters, regression and classification on tabular data |
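To make the Feature Engineering row concrete: with classical ML, an engineer decides which signals matter and extracts them by hand. A minimal sketch for the spam-filter use case, where the feature names and choices are hypothetical; a classical model such as logistic regression would then learn weights over these features, whereas a deep learning model would learn its own features from the raw text.

```python
import re


def spam_features(message):
    """Hand-engineered features (hypothetical choices) for a classical spam classifier."""
    text = message.lower()
    words = re.findall(r"[a-z']+", text)
    return {
        "num_words": len(words),
        "num_exclamations": message.count("!"),
        "has_link": bool(re.search(r"https?://", text)),
        "mentions_free": "free" in words,
        # Share of characters that are uppercase ("SHOUTING" is a common spam signal).
        "uppercase_ratio": sum(c.isupper() for c in message) / max(len(message), 1),
    }


print(spam_features("FREE prize!!! Claim now at http://example.com"))
```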

⚡ Key Takeaways

- Deep Learning is a subset of Machine Learning that uses Artificial Neural Networks with multiple hidden layers to automate feature extraction.

- It is the dominant approach for handling unstructured data like raw images and audio files.

- The biggest bottleneck is computational cost, requiring specialized hardware (GPUs) for training.

- Black Box interpretability remains a significant hurdle for regulated industries (Healthcare, Finance).

- Developer Tip: Use Deep Learning only when simple rules fail. Don't build a neural network for simple if/else logic.

🔗 Related Topics

- How Neural Networks Actually Process Image Data

- The Rise of Large Language Models (LLMs)

- Python Libraries for AI: PyTorch vs TensorFlow

- Understanding Gradient Descent Algorithm

- Edge AI: Running Models on Mobile Devices

🔮 Future Scope

The future isn't just about "bigger" deep learning; it's about efficiency.

- Lightweight and Distributed Models: Researchers are pursuing more efficient models and distributed approaches such as Petals, which pools many participants' hardware to run and fine-tune large language models, to make deep learning more accessible.

- Neural Architectures for Everyone: Tools like OpenAI's API mean developers no longer need to build the architecture; they just need to know how to prompt it. The barrier to entry for using deep learning is vanishing, but the value of understanding the underlying principles is rising.

❓ FAQ

1. Is Deep Learning the same as Neural Networks? Not exactly. Neural Networks are the architecture (the brain-inspired structure). Deep Learning is the practice of training neural networks with many layers. Think of "Deep Learning" as the field and "Neural Networks" as the tool.

2. Why do we need more data for Deep Learning? The more layers and neurons a network has, the more parameters it needs to learn. It requires massive data to tune these parameters effectively without just memorizing the training set (overfitting).

3. Can I learn Deep Learning without Machine Learning knowledge? Technically yes, but it is highly discouraged. Deep Learning builds on the same mathematics as Machine Learning (linear algebra, calculus, probability) and on core ML concepts such as loss functions, overfitting, and validation.

🎯 Conclusion

Deep learning is often hyped as the future of intelligence, but it is fundamentally a powerful statistical tool. For the developer, its importance lies not in any "magic" but in its ability to solve problems, like identifying cancer from scans or translating languages, that are impractical to solve with hand-written rules in traditional code. As we move forward, focus on the data infrastructure first; the architecture is useless without it.

Ready to dip your toes in? Start with a computer vision project using a pre-trained ResNet model. It's the fastest way to grasp the power of deep learning.
