
DeepSeek V4 Released Deep Dive (2026): Architecture, Benchmarks, and Pricing vs GPT-5.5

🚀 Quick Answer

- What is DeepSeek V4? A new open-weight MoE model released on April 24, 2026, introducing two variants: V4-Pro (1.6T total parameters) and V4-Flash (284B total parameters).

- The Efficiency Thesis: DeepSeek V4 reduces inference FLOPs to 27% of V3.2 and lowers KV cache occupancy to 10% of V3.2.

- Tech Highlights: Introduces Engram (static memory), DeepSeek Sparse Attention (DSA), and Manifold-Constrained Hyper-Connections (mHC) for training stability.

- Pricing: V4-Pro costs $1.74/M input and $3.48/M output, roughly one-eighth the cost of GPT-5.5.

- Best For: High-volume coding agents, repository-scale reasoning, and cost-sensitive long-context workloads.

🎯 Introduction

DeepSeek V4 was released as a public preview on April 24, 2026, exposing two hosted variants through its API and signaling that open weights would follow shortly. While many headlines focused on model sizes, the real story is operational: DeepSeek successfully trained a frontier-adjacent 1.6 trillion parameter model without NVIDIA Blackwell hardware, and it is pricing out the competition.

If you are building automated coding agents or processing massive codebases, the DeepSeek V4 release changes the economic feasibility of using frontier models for bulk compute. The 1.6T-parameter V4-Pro variant is competitive with GPT-5.5 on coding benchmarks such as SWE-Bench Verified, and at roughly one-eighth the output cost it forces a rethink of how we architect large-scale LLM pipelines.

🧠 Core Explanation

The DeepSeek V4 release shifts the scaling conversation from parameter count to inference cost. Instead of simply making the model bigger, DeepSeek focused on what each generated token costs in compute and memory.

The Variants:

- V4-Pro: The flagship model. 1.6T total parameters, 49B active per token. Designed for deep reasoning on complex repos.

- V4-Flash: The efficiency champion. 284B total parameters, 13B active. Optimized for speed and lower cost, with surprising capability retention.

The Efficiency Claims: DeepSeek's headline efficiency claim is that V4-Pro reduces single-token inference FLOPs to 27% of V3.2 and KV cache occupancy to 10% of V3.2 at the 1M-token setting. Those two figures are the engineering thesis of the entire release.
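To make the two ratios concrete, here is a minimal sketch of what they imply if taken at face value. The baseline KV cache size is a hypothetical placeholder, not a published V3.2 number; only the 27% and 10% fractions come from the claim above.

```python
# Fractions quoted in the release claim (relative to V3.2, 1M-token setting).
FLOPS_FRACTION = 0.27
KV_CACHE_FRACTION = 0.10


def reduction_factor(fraction: float) -> float:
    """Convert 'X% of baseline' into an 'N times smaller' factor."""
    return 1.0 / fraction


def scaled(baseline: float, fraction: float) -> float:
    """Apply a claimed fraction to any baseline quantity."""
    return baseline * fraction


flops_factor = reduction_factor(FLOPS_FRACTION)   # about 3.7x fewer FLOPs per token
kv_factor = reduction_factor(KV_CACHE_FRACTION)   # 10x smaller KV cache

# Hypothetical baseline: a V3.2-style deployment holding 200 GB of KV cache.
hypothetical_v32_kv_gb = 200.0
v4_kv_gb = scaled(hypothetical_v32_kv_gb, KV_CACHE_FRACTION)

print(f"FLOPs per token: {flops_factor:.1f}x lower")
print(f"KV cache: {kv_factor:.0f}x lower ({v4_kv_gb:.0f} GB vs {hypothetical_v32_kv_gb:.0f} GB)")
```

In practice the KV cache ratio matters most for concurrency: at a fixed memory budget, a cache one-tenth the size leaves room for roughly ten times as many long-context sequences.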

🔥 Contrarian Insight

"Don't use V4 as your LLM of record; use it as your LLM of volume."

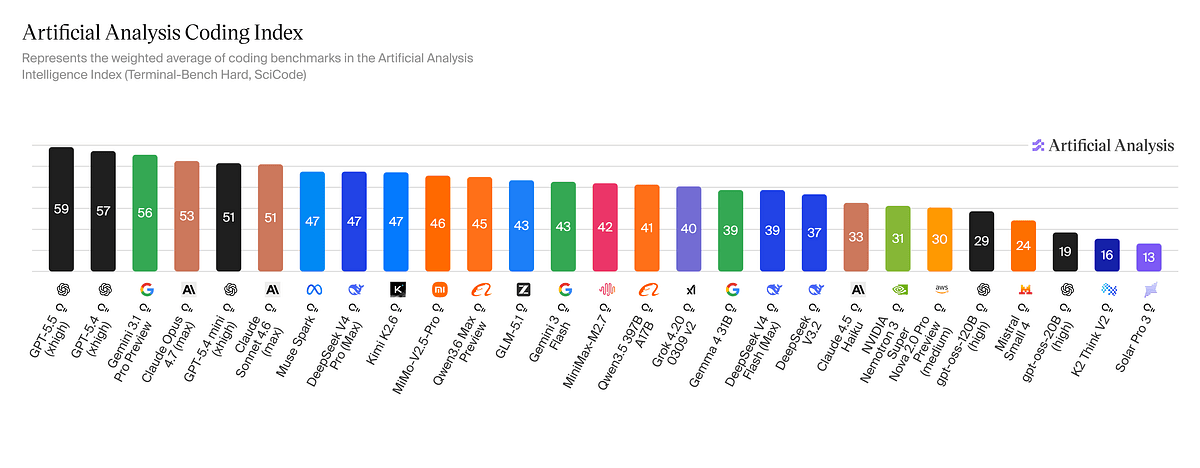

Most developer teams trying to adopt V4 will fail if they try to route everything through it. V4 is not the strongest reasoning model; it has large gaps against GPT-5.5 on terminal tasks and against Opus 4.7 on internal QA. However, V4 is arguably the best "worker bee" model for repetitive coding tasks, refactoring, and synthetic data generation. The smart stack isn't replacing OpenAI with DeepSeek; it's adding DeepSeek to take the bulk token volume off your GPT-5.5 orchestrator.

🔍 Deep Dive: Architecture & Engineering

DeepSeek V4’s architectural story relies on three independent, high-leverage pieces: an attention rewrite, a memory primitive, and a stabilization scheme.

1. Attention Rewrite (DSA)

DeepSeek Sparse Attention (DSA) is the primary computational saver.

- Mechanism: It selects only top-k compressed tokens for full attention.

- Local Context: A sliding window of 128 uncompressed tokens ensures local nuance isn't lost.

- Compressed Attention: Uses "Lightning Indexer" running in FP8 with ReLU-activated scoring to drive selection.

- Speedup: Reduces attention complexity from quadratic to roughly O(Lk) where k is much smaller than L.

- KV Cache: Uses multi-stride compression (c4a and c128a), meaning you store less historical context in VRAM.
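The selection step described above can be sketched in a few lines of NumPy. This is an illustration of the general idea (a cheap ReLU-scored indexer picks top-k positions, a local window is always kept, and full attention runs only over that subset), not DeepSeek's implementation. It assumes uncompressed keys, ordinary float math instead of FP8, and hypothetical names such as `sparse_attention_step` and `idx_keys`.

```python
import numpy as np


def sparse_attention_step(q, keys, values, idx_q, idx_keys, top_k=4, window=2):
    """One decoding step of top-k sparse attention (illustrative sketch).

    q:        (d,)     query for the current token
    keys:     (L, d)   cached keys, L >= 1
    values:   (L, d)   cached values
    idx_q:    (d_i,)   low-dimensional query for the cheap indexer
    idx_keys: (L, d_i) low-dimensional keys for the cheap indexer
    """
    L = keys.shape[0]

    # Indexer: a cheap ReLU-activated relevance score for every cached position.
    scores = np.maximum(idx_keys @ idx_q, 0.0)

    # Keep the k highest-scoring positions.
    k = min(top_k, L)
    top = np.argpartition(-scores, k - 1)[:k]

    # Always keep the most recent `window` positions for local context.
    local = np.arange(max(0, L - window), L)
    selected = np.union1d(top, local)

    # Full softmax attention, but only over the selected subset.
    logits = keys[selected] @ q / np.sqrt(q.shape[0])
    weights = np.exp(logits - logits.max())
    weights /= weights.sum()
    return weights @ values[selected], selected


rng = np.random.default_rng(0)
L, d, d_i = 32, 8, 4
out, selected = sparse_attention_step(
    rng.standard_normal(d),
    rng.standard_normal((L, d)),
    rng.standard_normal((L, d)),
    rng.standard_normal(d_i),
    rng.standard_normal((L, d_i)),
)
print(out.shape, len(selected))
```

The expensive part (the softmax over full-width keys) touches at most `top_k + window` positions per step, which is where the roughly O(Lk) cost comes from; the indexer still scans all L positions, but in a much smaller dimension.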

2. Engram (Static Memory Primitive)

This is arguably the most interesting structural change.

- Concept: A static memory table handles knowledge lookup outside standard attention.

- Mechanism: Multi-head hashing over N-grams maps directly to embedding tables (constant-time retrieval).

- Sparsity Allocation Law: DeepSeek found the optimal allocation is roughly 20–25% of sparse parameters in Engram tables.

- Zero-Shot Impact: Adding this increased Multi-Query NIAH accuracy from 84.2% to 97.0%.

- Operational Logic: Once trained, Engram tables are frozen. They can be paged out of GPU VRAM into host memory or NVMe, offering under 3% overhead and drastically lowering VRAM requirements for inference.
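As a rough picture of the lookup mechanism, the sketch below hashes each trailing N-gram with several independent hash heads and sums the rows it lands on. The class name `EngramTable`, the hash function, the table sizes, and the use of random values in place of trained embeddings are all assumptions made for illustration; the source only specifies multi-head hashing over N-grams into frozen embedding tables.

```python
import numpy as np


class EngramTable:
    """Toy static memory: multi-head hashed N-gram lookup into frozen tables."""

    def __init__(self, num_heads=2, table_size=1024, dim=8, ngram=2, seed=0):
        rng = np.random.default_rng(seed)
        # Random values stand in for trained embeddings.
        self.tables = rng.standard_normal((num_heads, table_size, dim)).astype(np.float32)
        self.tables.setflags(write=False)  # frozen after "training"
        self.num_heads = num_heads
        self.table_size = table_size
        self.dim = dim
        self.ngram = ngram
        # One arbitrary salt per head so the heads collide on different N-grams.
        self.salts = [(h + 1) * 0x9E3779B1 & 0xFFFFFFFF for h in range(num_heads)]

    def _hash(self, gram, head):
        h = self.salts[head]
        for token in gram:
            h = ((h * 1000003) ^ token) & 0xFFFFFFFF
        return h % self.table_size

    def lookup(self, token_ids):
        """Return a (len(token_ids), dim) array; row i uses the N-gram ending at i."""
        out = np.zeros((len(token_ids), self.dim), dtype=np.float32)
        for i in range(len(token_ids)):
            gram = tuple(token_ids[max(0, i - self.ngram + 1): i + 1])
            for head in range(self.num_heads):
                out[i] += self.tables[head, self._hash(gram, head)]
        return out


memory = EngramTable()
a = memory.lookup([5, 6, 7])
b = memory.lookup([9, 6, 7])
# Both sequences end in the bigram (6, 7), so the last rows match.
print(np.array_equal(a[2], b[2]))
```

Each lookup is a fixed number of hash computations and row reads regardless of sequence length, and because the tables are never written after training they are natural candidates for paging to host memory or NVMe.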

3. Manifold-Constrained Hyper-Connections (mHC)

- Problem: Standard residual connections at this depth compound activation variance, causing training instability.

- Solution: Uses Birkhoff polytope projections to bound signal amplification below 2x.

- Optimizer: Training uses the Muon optimizer, the same choice GLM-5.1 and Kimi K2.6 made for stability.
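The Birkhoff polytope is the set of doubly stochastic matrices (non-negative, with every row and column summing to 1). One standard way to push an arbitrary matrix toward that set is Sinkhorn-Knopp normalization, sketched below. Whether V4 uses this exact procedure is an assumption; the point is only to show why such a constraint keeps a mixing matrix from amplifying the signal.

```python
import numpy as np


def sinkhorn_doubly_stochastic(logits: np.ndarray, iters: int = 100) -> np.ndarray:
    """Map a square matrix of logits to an (approximately) doubly stochastic matrix."""
    m = np.exp(logits - logits.max())  # strictly positive entries
    for _ in range(iters):
        m = m / m.sum(axis=1, keepdims=True)  # normalize rows
        m = m / m.sum(axis=0, keepdims=True)  # normalize columns
    return m


rng = np.random.default_rng(0)
mix = sinkhorn_doubly_stochastic(rng.standard_normal((4, 4)))

# Rows and columns both sum to about 1, so the matrix only averages its inputs.
print(mix.sum(axis=0), mix.sum(axis=1))

# The largest singular value of a doubly stochastic matrix is 1:
# repeated application across layers cannot blow up the residual stream.
print(np.linalg.norm(mix, 2))
```

An unconstrained mixing matrix can have a gain well above 1 per layer, which compounds with depth; constraining it to this set caps the per-layer gain, which is the stability argument behind mHC.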

🏗️ System Design: Deploying V4 in Production

To deploy DeepSeek V4 effectively in production, you must adapt your pipeline infrastructure to handle FP8 precision and the sparse-attention indexer that sits alongside the main attention computation.

The Data Flow

- Tokenizer: V4 expects UTF-8 tokens. It introduces a new XML-style tool-call format (using a DSML special token) to reduce escaping failures in long agent trajectories.

- Inference Engine: Ensure your custom deployment supports FP8 mixed precision. The "Lightning Indexer" for Sparse Attention requires a separate CUDA stream running parallel to main attention computation.

- Memory Management: If running V4-Pro, utilize the Engram paging logic. Offload 100B parameters to host memory to reduce the base VRAM requirement.
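For capacity planning, a back-of-the-envelope estimate of weight memory is usually the first step. The sketch below counts weights only (no KV cache, activations, or runtime overhead) and uses the 1.6T total and the 100B offload figure from the text; the bit widths are illustrative choices, not a statement about which quantization formats V4 ships with.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory needed to hold the weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


TOTAL_PARAMS = 1.6e12      # V4-Pro total parameters
OFFLOADED_PARAMS = 100e9   # parameters moved to host memory, per the text

for bits in (8, 4):
    total = weight_memory_gb(TOTAL_PARAMS, bits)
    resident = weight_memory_gb(TOTAL_PARAMS - OFFLOADED_PARAMS, bits)
    print(f"{bits}-bit: {total:.0f} GB total, {resident:.0f} GB left on GPU after offload")
```

Even with the offload, the resident weights run to hundreds of gigabytes at either precision, so V4-Pro remains a multi-GPU deployment; the offload trims the requirement rather than removing it.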

Integration Pattern (Agent Stack)

graph TD

A[User Query] --> B(GPT-5.5 / Opus Orchestrator)

B -- Transforms & Quality Control --> C{Cost Threshold}

C -- High Complexity --> B

C -- Standard Coding / Refactoring --> D[DeepSeek V4-Pro / V4-Flash]

D --> E[Worker Agent]

E --> F[Codebase Context 1M Token Window]

F --> G[Output]

G --> B

B --> H[Final Response]
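The routing decision in the diagram can be expressed as a small function. This is a minimal sketch: the `Task` fields, the 0-to-1 complexity score, the threshold value, and the tier labels are all hypothetical, and how you estimate complexity (heuristics, a classifier, or the orchestrator's own judgment) is left open.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    complexity: float  # hypothetical score in [0, 1]; higher means harder


# Hypothetical tier labels, not real API model identifiers.
ORCHESTRATOR = "orchestrator"      # GPT-5.5 / Opus tier in the diagram
WORKER = "deepseek-v4-worker"      # V4-Pro / V4-Flash tier in the diagram


def route(task: Task, threshold: float = 0.7) -> str:
    """Send hard tasks to the orchestrator tier and everything else to the worker tier."""
    return ORCHESTRATOR if task.complexity >= threshold else WORKER


tasks = [
    Task("Rename a helper across 40 files", complexity=0.2),
    Task("Debug a flaky distributed lock", complexity=0.9),
]
for task in tasks:
    print(f"{task.description} -> {route(task)}")
```

Keeping the threshold as an explicit parameter makes it easy to tune against your own cost and error-rate data rather than hard-coding a split.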

🧑‍💻 Practical Value

How to Verify the Benchmarks Yourself

You can test DeepSeek V4 right now via their API.

- Output Limit: Be aware of the 384,000-token maximum output. Early adopters who hit this limit in Think Max mode without enough context have run into timeouts. Default to Think High for easily verifiable tasks like refactoring.

- Prompting for Code: Create a repository and ask for a refactor. Use the XML format to ensure tool calls parse correctly:

      <dsml_code_task>
      location: src/utils/parser.go
      instruction: Optimize for regex performance
      </dsml_code_task>

- Testing "Engram" capabilities: Feed the model a large knowledge base (e.g., a company internal document) and then ask questions referencing specific obscure details from that document after many intervening prompts. You should see a massive jump in accuracy compared to vanilla LLMs.
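The recall test in the last bullet is easy to script so that you can run it the same way against several models. The sketch below only builds the conversation and scores the answer; it deliberately does not call any API. The role/content message shape is the common chat convention and is an assumption here, as are the helper names; in a real run you would interleave the model's actual replies between the filler turns.

```python
def build_recall_probe(document: str, filler_turns: list[str], question: str) -> list[dict]:
    """Build a conversation: reference document, unrelated turns, then the probe question."""
    messages = [{"role": "user", "content": f"Reference document:\n{document}"}]
    for turn in filler_turns:
        messages.append({"role": "user", "content": turn})
    messages.append({"role": "user", "content": question})
    return messages


def score_recall(answer: str, expected_detail: str) -> bool:
    """Crude check: does the answer contain the obscure detail we planted?"""
    return expected_detail.lower() in answer.lower()


probe = build_recall_probe(
    document="... The staging database rotates credentials every 37 days. ...",
    filler_turns=["Summarize the history of Go modules.", "Explain what a B-tree is."],
    question="How often does the staging database rotate credentials?",
)
print(len(probe), probe[-1]["content"])
print(score_recall("It rotates every 37 days.", "37 days"))
```

Run the same probe with increasing numbers of filler turns and plot accuracy against distance; that curve is more informative than a single pass/fail result.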

The "Flash" Trap

Do not default V4-Flash to "Think Max" immediately. While V4-Flash-Max reaches reasoning performance comparable to Pro on many benchmarks (like SWE-Bench Verified), it degrades on Toolathlon (51.8, against reported comparison scores of 54.6 and 55.0) and on long-horizon agent work where memory depth matters. Reserve Flash for direct HTTP requests or simple class generation; use Pro for the actual heavy lifting.

⚔️ Comparison: DeepSeek V4 vs. The Frontier (2026)

When evaluating where to put your compute budget, here is how V4 stacks up against the top competitors.

| Feature | DeepSeek V4-Pro | GPT-5.5 Thinking | Gemini 3.1 Pro | Anthropic Opus 4.7 |

|---|---|---|---|---|

| Parameters | 1.6T (49B active) | Unknown (Proprietary) | Unknown | Unknown |

| Context | 1M Tokens | Likely >1M | Unknown | Likely >1M |

| Cost (Out) | $3.48 / M | $30 / M | ~$22 - $25 / M | ~$25 / M |

| SWE-Bench Verified | 80.6 (Tie) | 73.1 | 80.6 | 80.8 |

| Terminal-Bench 2.0 | 67.9 | 82.7 | 68.5 | 65.4 |

| GPQA Diamond | 90.1 | N/A | 94.3 | N/A |

| Pricing Verdict | Best Value | Premium | Mid-Tier | Niche |
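To see what the output prices in the table mean for a real budget, the sketch below computes the cost of a given output volume and the resulting price ratio. The per-million prices are taken from the table; the 500M-token monthly volume is a hypothetical workload, and input-token costs are ignored for simplicity.

```python
# Output prices in USD per million tokens, from the comparison table.
OUTPUT_PRICE_PER_M = {
    "DeepSeek V4-Pro": 3.48,
    "GPT-5.5 Thinking": 30.00,
}


def output_cost(model: str, output_tokens: int) -> float:
    """Cost in USD of generating `output_tokens` tokens with `model`."""
    return OUTPUT_PRICE_PER_M[model] * output_tokens / 1_000_000


monthly_tokens = 500_000_000  # hypothetical agent workload
v4_cost = output_cost("DeepSeek V4-Pro", monthly_tokens)
gpt_cost = output_cost("GPT-5.5 Thinking", monthly_tokens)
ratio = gpt_cost / v4_cost

print(f"V4-Pro:  ${v4_cost:,.0f}")
print(f"GPT-5.5: ${gpt_cost:,.0f}")
print(f"Ratio:   {ratio:.1f}x")
```

The ratio works out to about 8.6x, which is where the "roughly one-eighth" figure comes from.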

Deployment Strategy:

- Use DeepSeek V4 for: Bulk coding, long-context file rewriting, agent orchestration stacks.

- Use GPT-5.5 for: Complex terminal navigation where error rate is critical, and MVP generation.

- Use Claude Opus 4.7 for: High-value reasoning and reasoning-heavy benchmarks.

⚡ Key Takeaways

- The "V4" Architecture: Combines Sparse Attention (DSA), Engram (static memory), and mHC (stability) to do more with less.

- Cost Disruption: At 1/8th the price of GPT-5.5, V4 makes long-horizon automation economically viable.

- The Open Move: DeepSeek signaled open weights are "following shortly," bringing frontier-class performance to open-source ecosystems.

- Context Window: A 1M-token context window is native, and the Engram module supports paging to additional storage.

- Not a Singularity: V4 is the best open-weight coding model, but it is not the "best language model."

🔗 Related Topics

- Why 1M Context Windows Break Development Pipelines // Discussion on why context isn't everything.

- Guide to Implementing FP8 KV Cache in Production // Technical deep dive into the engine upgrades.

- The Rise of MoE Architectures in 2026 // Evolution from single models to specialized experts.

- Building Agent Orchestration Stacks with Llama 405B // Architecture for coordinating multiple powerful models.

🔮 Future Scope

The most critical unknown is multimodality. While the core V4 is text-only, rumors indicate a DeepSeek vision-extension or integration with "DeepSeek OCRv3" is inbound. Once V4 can read code diffs and visual layouts, its advantage over GPT-5.5 will become overwhelming for UI/UX automation tasks.

❓ FAQ

Q: Is DeepSeek V4 better than Claude Opus 4.7? A: It depends on the task. V4 is superior for bulk coding and cost-efficiency, and the two are close on SWE-Bench Verified (80.6 vs. 80.8) and Terminal-Bench 2.0 (67.9 vs. 65.4) in the comparison table. Opus 4.7 remains the safer choice for reasoning-heavy work and internal QA. V4 is better as the "worker," Opus as the "manager."

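Q: Can I run DeepSeek V4 locally? A: In principle, once the open weights are released. However, the 1.6T-parameter model needs far more memory than a single GPU offers: even at 4 bits per parameter, the weights alone are roughly 800 GB. The Engram paging feature helps by moving frozen tables to host memory or NVMe, but plan for a multi-GPU node rather than a single 80GB card. V4-Flash (284B parameters) is the more realistic target for local experiments.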

Q: What is the difference between V4-Pro and V4-Flash? A: V4-Pro is for deep reasoning and complex tasks (1.6T parameters). V4-Flash is for high-speed, cost-sensitive tasks where extreme depth isn't needed (284B parameters). Flash often performs surprisingly well on coding tasks but falls short on Agent workflows that require long-duration context.

Q: How reliable are V4's benchmarks? A: Macaron’s survey labels V4 numbers as "internal claim only." Until third-party replication (like HumanEval verification) lands, assume a 1-2% margin of error on the reported scores compared to GPT-5.5.

Q: Is DeepSeek V4 a "Frontier" model? A: In terms of parameter size and capability, yes. However, performance per dollar makes it the "price frontier," while models like GPT-5.5 remain the "quality frontier."

🎯 Conclusion

DeepSeek V4 isn't just another model release; it is a stress test of current AI pricing models. By decoupling active parameters (13B/49B) from static parameters (Engram tables) and cutting inference FLOPs to 27% of V3.2, DeepSeek has built a machine that punches far above its weight class.

For developers watching their cloud bills, V4-Flash is a no-brainer for basic generation. For engineering teams, V4-Pro is the new standard for repository-scale LLM automation. The era of few expensive giants and many small models is over; we are entering an era of abundant, intelligent compute that you can afford to waste.
