AILLM

Local LLMs for Graph RAG Extraction: The Real Bottleneck Nobody Talks About

🧠 Local LLMs for Graph RAG Extraction: What Actually Works

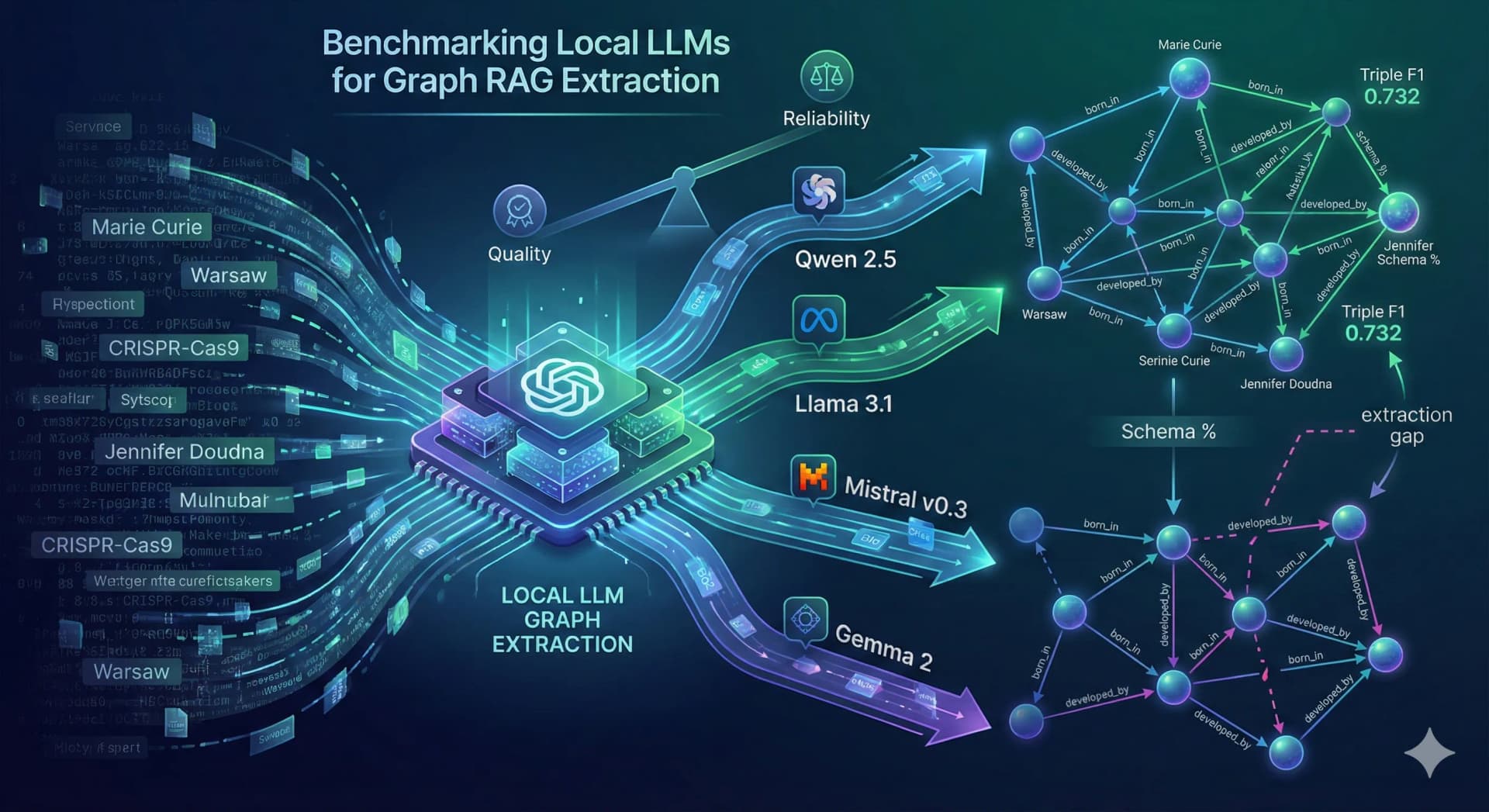

TL;DR: Graph RAG promises smarter retrieval through structured knowledge graphs—but the real bottleneck is extraction. Local LLMs struggle more with relation extraction than entity recognition, forcing trade-offs between accuracy, reliability, and latency. This guide breaks down what actually works in production.

🎯 Dynamic Intro

Graph RAG is having its moment—and for good reason. In a world drowning in unstructured data, the ability to convert raw text into interconnected knowledge graphs feels like upgrading from a flashlight to a GPS. Instead of retrieving documents based on keyword overlap, Graph RAG enables systems to understand relationships—who did what, where, and how everything connects.

But here’s the uncomfortable truth: most Graph RAG pipelines fail silently before they even begin.

The failure doesn’t happen during retrieval. It happens earlier—in extraction. Before you can query a knowledge graph, you need to build one. And building one requires transforming messy, ambiguous human language into structured triples like (entity, relation, entity). That’s where things break down, especially when you're relying on local LLMs instead of API-based giants.

In this deep dive, we’ll explore what actually works when using local LLMs for Graph RAG extraction. You’ll learn where models succeed, where they fail, and how to design systems that are robust enough for production.

💡 The "Why Now"

The urgency around local LLMs isn’t theoretical—it’s operational. Enterprises across healthcare, finance, and legal sectors are rapidly adopting AI, but with strict constraints. Data sovereignty laws, compliance frameworks like HIPAA and GDPR, and internal governance policies make sending sensitive data to external APIs a non-starter.

This is where local LLMs step in.

Running models like LLaMA 3.1, Mistral 7B, Qwen 2.5, and Gemma 2 on-premise offers:

- 🔐 Data privacy: No external data transfer

- ⚡ Lower latency: Especially for high-throughput pipelines

- 💰 Cost control: No per-token API billing

But here's the catch—these smaller models (7B–9B parameters) operate under tighter cognitive constraints. Asking them to perform Graph RAG extraction is like asking a junior analyst to simultaneously:

- Identify entities

- Classify entity types

- Infer relationships

- Normalize predicates

- Output valid JSON

That’s not one task. That’s five tightly coupled tasks.

And the industry is just beginning to realize that relation extraction—not retrieval—is the real scaling bottleneck.

🏗️ Deep Technical Dive

🔬 Understanding the Extraction Pipeline

At its core, Graph RAG extraction transforms unstructured text into structured triples:

Input: "Marie Curie was born in Warsaw."

Output: (Marie Curie, born_in, Warsaw)

But in real-world documents, sentences are rarely this clean. You encounter:

- Nested clauses

- Ambiguous references

- Multiple entities per sentence

- Temporal qualifiers

This creates a combinatorial explosion of possible interpretations.

⚙️ Model Benchmark Architecture

The benchmark setup evaluated four local models across three prompting strategies:

Models:

- Qwen 2.5 7B

- LLaMA 3.1 8B

- Mistral 7B

- Gemma 2 9B

Prompting Strategies:

- Naive prompting

- Schema-in-prompt

- Few-shot prompting

Each model was tested on passages of increasing complexity—from simple biographies to dense scientific text.

🧪 Key Finding #1: Entity Recognition Is Solved

Across all models, entity extraction performed surprisingly well:

- Entity F1 scores ranged from 0.78 to 0.91

- Models consistently identified key entities even in complex passages

This suggests that modern LLMs—even smaller ones—have strong semantic parsing capabilities.

But this success is deceptive.

🧠 Key Finding #2: Relation Extraction Is the Bottleneck

Triple extraction tells a different story:

- Best Triple F1: 0.732 (LLaMA 3.1 + few-shot)

- Most models: 0.52–0.60 range

Why the drop?

Because relation extraction requires:

- Semantic understanding (what relationship exists)

- Structural discipline (how to express it cleanly)

- Consistency (standardized predicates)

Models often fail by:

- Combining multiple relationships into one

- Using inconsistent predicate naming

- Producing invalid JSON

🔄 Key Finding #3: Prompting Trade-offs

Prompting strategy dramatically impacts performance:

| Strategy | Quality | Reliability | Latency |

|---|---|---|---|

| Naive | Low | Medium | Fast |

| Schema-in-prompt | Medium | High | Medium |

| Few-shot | High | Low | Slow |

Few-shot prompting improves understanding—but increases cognitive load, leading to malformed outputs.

🧩 Architectural Implications

To build production-ready systems, you need more than just a model:

- Validation Layer: Ensure JSON schema correctness

- Retry Logic: Fallback to simpler prompts on failure

- Canonicalization Engine: Normalize predicates

- Confidence Scoring: Filter low-quality triples

Think of extraction as a distributed system—not a single inference call.

📊 Real-World Applications & Case Studies

Companies building knowledge-intensive systems are already facing these challenges.

In healthcare, organizations are using Graph RAG to map relationships between drugs, conditions, and clinical outcomes. Extraction errors here aren’t just inconvenient—they can impact decision-making pipelines. Local models are preferred due to compliance, but require heavy post-processing layers.

In legal tech, firms are extracting relationships between cases, statutes, and precedents. Dense legal language amplifies the extraction problem—models often miss implicit relationships or misinterpret legal phrasing.

In enterprise search platforms, companies are building internal knowledge graphs to connect documents, teams, and workflows. Here, latency and cost constraints make local models attractive—but only if extraction pipelines are reliable.

Even in tech companies, Graph RAG is being used to map codebases—linking functions, services, and dependencies. This requires high precision, as incorrect relationships can break developer workflows.

⚡ Performance, Trade-offs & Best Practices

🏆 Best Practices

- ⚡ Use schema-in-prompt as default for stability

- 🧠 Apply few-shot selectively for high-value documents

- 🔄 Implement retry strategies with fallback prompts

- 🧩 Normalize predicates using synonym mapping

- 📊 Track both entity and triple F1 separately

- 🛡️ Validate JSON outputs before ingestion

Expert Tip: Treat extraction like a probabilistic system, not a deterministic one. Build pipelines that assume failure—and recover gracefully.

In practice, the best systems don’t rely on a single model or prompt. They orchestrate multiple strategies, balancing quality and reliability dynamically.

The biggest mistake teams make is optimizing for raw accuracy instead of system robustness.

🔑 Key Takeaways

- 🧠 Extraction—not retrieval—is the real Graph RAG bottleneck

- 📊 Entity recognition is largely solved—but relations are not

- ⚖️ There is no “best model”—only trade-offs

- 🔄 Few-shot improves quality but reduces reliability

- ⚙️ Schema prompting offers the best stability baseline

- 🧩 Evaluation logic can skew perceived performance

- 🚀 Production systems require orchestration, not just inference

- 🔐 Local models are essential for enterprise adoption

🚀 Future Outlook

The next 12–24 months will likely see rapid innovation in structured extraction.

We’re already seeing early work in constrained decoding, where models are forced to generate valid JSON at the token level. This could eliminate one of the biggest reliability issues.

Another promising direction is task-specific fine-tuning. Instead of using general-purpose instruction models, we’ll see specialized extraction models trained on structured datasets.

Finally, hybrid systems will emerge—combining symbolic methods with neural models. Think rule-based systems guiding LLM outputs, reducing ambiguity and enforcing consistency.

Graph RAG isn’t going away. But its success depends on solving extraction—not retrieval.

❓ FAQ

🔍 What is Graph RAG extraction?

Graph RAG extraction is the process of converting unstructured text into structured knowledge graph triples (subject, predicate, object). It enables relationship-aware retrieval.

⚙️ Why are local LLMs harder to use for extraction?

Local LLMs have fewer parameters and less reasoning capacity than API-based models. They struggle with multi-step tasks like relation extraction and structured output formatting.

🧪 Which prompting strategy works best?

Schema-in-prompt offers the best balance of reliability and performance. Few-shot improves accuracy but introduces instability.

📊 How do you evaluate extraction quality?

Using metrics like Entity F1 and Triple F1, often with fuzzy matching and synonym normalization to account for semantic equivalence.

🔐 Why not just use GPT-4?

In many enterprise environments, data cannot leave internal infrastructure due to compliance, privacy, or cost constraints—making local models necessary.

🎬 Conclusion

Graph RAG is often framed as a retrieval problem—but in reality, it’s an extraction problem wearing a retrieval hat.

Local LLMs are powerful enough to build production-grade knowledge graphs—but only if you respect their limitations. The winning strategy isn’t picking the “best model.” It’s designing resilient systems that balance accuracy, reliability, and cost.

If you're building the next generation of AI systems, start by fixing your extraction pipeline.

Because in Graph RAG, what you extract determines everything you can retrieve.

Explore more deep technical breakdowns like this on BitAI—and stay ahead of the curve.

Share This Bit