
OpenClaude Transforms Coding Workflows: The Ultimate Unified Agent for Modern Teams

🚀 Quick Answer

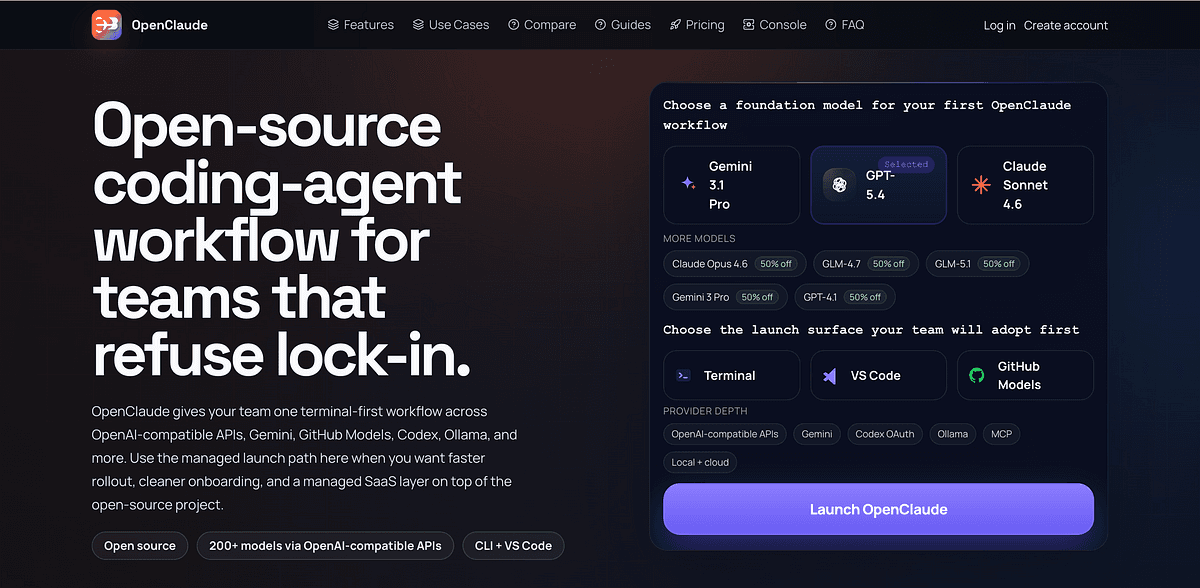

- What is OpenClaude? An open-source platform designed to unify different AI coding models into a single standardized interface.

- Key Integration: Seamlessly connects OpenAI-compatible APIs, Gemini, GitHub Models, Codex, and Ollama.

- Use Case: Solves "API silos" by allowing developers to switch between models without changing code logic.

- Environment: Built specifically for modern teams using VS Code and the Model Context Protocol (MCP).

🎯 Introduction

In today's developer landscape, fragmentation is the enemy of speed. Teams often struggle to maintain the coding workflows they need to drive innovation, only to hit walls with rigid API constraints. If you are stitching together a sophisticated coding pipeline from separate tools for generation, debugging, and context management, the overhead is massive. OpenClaude transforms coding workflows by eliminating that friction, acting as a unified coding agent that harmonizes diverse environments into one cohesive system.

Essential for modern productivity, this solution allows teams to launch and operate projects faster, focusing on building software rather than managing middleware.

🧠 Core Explanation

Most AI coding tools are "black boxes" tied to a single provider. This creates a proprietary lock-in where you cannot switch models based on context without rewriting your repository logic. OpenClaude works by abstracting these providers.

It acts as a hybrid workflow engine. Instead of hardcoding model="gpt-4", your code interacts with a standardized layer that routes each request to the most appropriate backend (e.g., OpenAI's Codex for small, routine tasks, Gemini for context expansion, or Ollama for private offline tasks). This structural change matters because it shifts the focus from the AI provider to the task.
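A minimal sketch of that standardized layer, assuming a hypothetical `ModelGateway` class (not OpenClaude's actual API). The caller names a task rather than a model, and stub functions stand in for real provider clients so the example runs offline:

```python
from dataclasses import dataclass
from typing import Callable, Dict

# A backend is any callable that takes a prompt and returns text.
Backend = Callable[[str], str]


@dataclass
class ModelGateway:
    """Hypothetical standardized layer: callers name a task, not a model."""
    backends: Dict[str, Backend]   # provider name -> callable
    task_routes: Dict[str, str]    # task name -> provider name
    default_provider: str

    def complete(self, task: str, prompt: str) -> str:
        # Unknown tasks fall through to the default provider.
        provider = self.task_routes.get(task, self.default_provider)
        return self.backends[provider](prompt)


# Stub backends stand in for real API clients.
def fake_ollama(prompt: str) -> str:
    return f"[ollama] {prompt}"


def fake_gemini(prompt: str) -> str:
    return f"[gemini] {prompt}"


gateway = ModelGateway(
    backends={"ollama": fake_ollama, "gemini": fake_gemini},
    task_routes={"private_summary": "ollama", "context_expansion": "gemini"},
    default_provider="ollama",
)

print(gateway.complete("context_expansion", "Summarize the module graph"))
```

Swapping a provider then means editing `task_routes`, not the calling code.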

🔥 Contrarian Insight

"The single biggest bottleneck in modern development isn't the model's intelligence—it’s your ability to swap models faster than a hot swap in a data center."

Stop swallowing the sales pitch of one provider. The smartest engineers don't just pick the "best" model; they pick the right model for the specific function and swap instantly. OpenClaude forces you to recognize that the monolithic "ChatGPT" workflow is dead; distributed intelligence is the future, and an open gateway is the only way to maintain architectural integrity.

🔍 Deep Dive / Details

OpenClaude operates on a Model-Routing Architecture.

The Problem of Context Switching

In a traditional workflow, you need a specialist for syntax (Codex), a generalist for reasoning (GPT-4), and a local tester (Ollama). Switching APIs manually introduces latency and cognitive overhead. OpenClaude solves this by aggregating these endpoints via the MCP (Model Context Protocol) standard.

The Workflow Flow

- Input Acquisition: The agent receives a prompt (via VS Code or CLI).

- Capability Analysis: The router analyzes the intent (e.g., "Refactor this func" vs. "Explain this logic").

- Provider Selection: Depending on the analysis, it routes:

  - To OpenAI-Compatible APIs for high-stakes refactoring.

  - To Ollama for fast, private summarization.

  - To Gemini for multimodal context (handling images/plots).

- Response Aggregation: The output is standardized and fed back into the VS Code IDE or the internal system responsible for the PR/Commit.
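The four steps above can be sketched as a small pipeline. This is an illustration only: the keyword-based `analyze_intent`, the `ROUTES` table, and the stub backends are all assumptions, and a real router would presumably use something more robust than substring matching.

```python
from typing import Callable, Dict


def analyze_intent(prompt: str) -> str:
    """Toy capability analysis: keyword matching, purely illustrative."""
    text = prompt.lower()
    if "refactor" in text or "rewrite" in text:
        return "refactor"
    if "image" in text or "plot" in text:
        return "multimodal"
    return "summarize"


# Intent -> provider, mirroring the three routes listed above.
ROUTES: Dict[str, str] = {
    "refactor": "openai_compatible",
    "summarize": "ollama",
    "multimodal": "gemini",
}


def handle(prompt: str, backends: Dict[str, Callable[[str], str]]) -> dict:
    intent = analyze_intent(prompt)      # capability analysis
    provider = ROUTES[intent]            # provider selection
    raw = backends[provider](prompt)     # provider call
    # Response aggregation: same shape regardless of provider.
    return {"intent": intent, "provider": provider, "text": raw.strip()}


# Stub backends so the sketch runs without network access.
stub_backends = {
    name: (lambda p, n=name: f" {n} handled: {p} ") for name in ROUTES.values()
}

result = handle("Refactor this func", stub_backends)
print(result)
```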

Why This Matters for Teams

Standardization prevents "vendor lock-in." If a model goes down, your pipeline continues via a fallback provider defined in the configuration.
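A fallback chain of this kind might look like the sketch below. The function name `complete_with_fallback` and the provider labels are hypothetical; the stubs simulate an outage on the primary provider.

```python
from typing import Callable, List, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has errored."""


def complete_with_fallback(
    prompt: str, providers: List[Tuple[str, Callable[[str], str]]]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real router would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


def primary_down(prompt: str) -> str:
    raise ConnectionError("503 from primary")


def fallback_ok(prompt: str) -> str:
    return f"fallback answer to: {prompt}"


used, text = complete_with_fallback(
    "Explain this logic",
    [("cloud_primary", primary_down), ("cloud_fallback", fallback_ok)],
)
print(used, "->", text)
```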

🏗️ System Design / Architecture (Developer Perspective)

If you are designing a system around this workflow, consider the following architectural layers:

1. The Gateway Layer

This is the entry point (OpenClaude interface). It handles authentication tokens (OpenAI keys, GitHub personal access tokens) securely and routes requests asynchronously.
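One way to picture a gateway like this, assuming it reads tokens from the environment and dispatches requests concurrently with `asyncio`. The variable names `OPENAI_API_KEY` and `GITHUB_TOKEN` are common conventions rather than documented OpenClaude settings, and `call_provider` is a placeholder for a real network call.

```python
import asyncio
from typing import Dict, List, Mapping


def load_credentials(env: Mapping[str, str]) -> Dict[str, str]:
    """Collect provider tokens from an environment-style mapping; skip missing ones."""
    wanted = {"openai": "OPENAI_API_KEY", "github": "GITHUB_TOKEN"}
    return {name: env[var] for name, var in wanted.items() if env.get(var)}


def mask(token: str) -> str:
    """Show only the last four characters, for safe logging."""
    return "*" * max(len(token) - 4, 0) + token[-4:]


async def call_provider(name: str, token: str, prompt: str) -> str:
    await asyncio.sleep(0)  # placeholder for a real network request
    return f"{name} ({mask(token)}): {prompt}"


async def dispatch(prompt: str, creds: Dict[str, str]) -> List[str]:
    # Fire all provider calls concurrently and wait for every reply.
    tasks = [call_provider(name, token, prompt) for name, token in creds.items()]
    return await asyncio.gather(*tasks)


# Demo mapping; in practice this would be os.environ.
creds = load_credentials({"OPENAI_API_KEY": "sk-demo-1234", "GITHUB_TOKEN": ""})
replies = asyncio.run(dispatch("ping", creds))
print(replies)
```

Keeping tokens out of source files and masking them in logs are the two habits this sketch is meant to show.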

2. The Router Protocol

A lightweight JSON-RPC bridge that exists between the local dev environment and the cloud providers. This isn't just a simple HTTP forward; it handles:

- Temperature & Top-P Tuning: Dynamically adjusting generation parameters per provider (e.g., Ollama runs low-temperature, GPT runs medium).

- Caching Strategy: Implementing a Redis layer on top. If an identical request for the same code snippet has been answered before, serve the response from cache before hitting the API. This saves cost and latency.
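Both responsibilities can be sketched together. Here an in-memory dict stands in for Redis, the parameter values in `PROVIDER_PARAMS` are illustrative guesses, and `CachedRouter` is a hypothetical name. The cache key hashes the provider, prompt, and parameters so that a change to any of them is a cache miss.

```python
import hashlib
import json
from typing import Callable, Dict, List

# Per-provider generation defaults (values are illustrative assumptions).
PROVIDER_PARAMS = {
    "ollama": {"temperature": 0.2, "top_p": 0.9},
    "openai": {"temperature": 0.7, "top_p": 1.0},
}


class CachedRouter:
    def __init__(self, backends: Dict[str, Callable[..., str]]):
        self.backends = backends
        self.cache: Dict[str, str] = {}  # stand-in for a Redis instance
        self.hits = 0

    @staticmethod
    def _key(provider: str, prompt: str, params: dict) -> str:
        payload = json.dumps(
            {"provider": provider, "prompt": prompt, "params": params},
            sort_keys=True,
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def complete(self, provider: str, prompt: str) -> str:
        params = PROVIDER_PARAMS[provider]
        key = self._key(provider, prompt, params)
        if key in self.cache:
            self.hits += 1
            return self.cache[key]      # served without an API call
        text = self.backends[provider](prompt, **params)
        self.cache[key] = text
        return text


calls: List[str] = []


def fake_openai(prompt: str, temperature: float, top_p: float) -> str:
    calls.append(prompt)                # record every real "API" call
    return f"t={temperature} {prompt}"


router = CachedRouter({"openai": fake_openai})
first = router.complete("openai", "def add(a, b): return a + b")
second = router.complete("openai", "def add(a, b): return a + b")
print(len(calls), router.hits)
```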

3. The CLI/IDE Bridge

Using MCP (Model Context Protocol), OpenClaude bridges the gap between the AI and the filesystem. It provides "File System Tools" to the agent regardless of which underlying API is processing the request.
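MCP messages are JSON-RPC 2.0, and tool invocations use the `tools/call` method. The sketch below builds such a request and adds a workspace-confinement check a file tool would likely want. The tool name `read_file`, the workspace path, and both helper functions are assumptions for illustration, not OpenClaude's actual tool set.

```python
import json
from pathlib import Path


def build_tool_call(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize an MCP-style JSON-RPC 2.0 request that invokes a tool."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    })


def resolve_in_workspace(workspace: Path, relative: str) -> Path:
    """Reject paths that escape the workspace (e.g. '../secrets')."""
    root = workspace.resolve()
    target = (root / relative).resolve()
    if root != target and root not in target.parents:
        raise PermissionError(f"{relative} is outside the workspace")
    return target


# 'read_file' is a hypothetical tool name.
message = build_tool_call(1, "read_file", {"path": "src/app.py"})
print(message)
```

Because the request shape is the same whichever model produced it, the file tool does not need to know which provider is behind the agent.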

🧑💻 Practical Value

Implementation Scenario

Let's look at how a team uses this to untangle a broken, spaghetti-like set of import functions.

Setup:

- Install the OpenClaude VS Code extension.

- Define your config.json to point to:

  - local_host: Ollama (mistral7b)

  - cloud_primary: OpenAI (GPT-4o)

  - cloud_fallback: Anthropic/Claude
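As a rough illustration, a config with these three slots could be loaded and validated as follows. The JSON shape (`provider` and `model` fields) is an assumed schema, and `"claude"` is a placeholder because the source does not name a specific fallback model.

```python
import json

# Assumed schema: one entry per slot named in the setup step.
CONFIG_TEXT = """
{
  "local_host":     {"provider": "ollama",    "model": "mistral7b"},
  "cloud_primary":  {"provider": "openai",    "model": "gpt-4o"},
  "cloud_fallback": {"provider": "anthropic", "model": "claude"}
}
"""

REQUIRED_SLOTS = ("local_host", "cloud_primary", "cloud_fallback")


def load_config(text: str) -> dict:
    """Parse the config and fail early if a slot is missing."""
    config = json.loads(text)
    missing = [slot for slot in REQUIRED_SLOTS if slot not in config]
    if missing:
        raise ValueError(f"config is missing slots: {missing}")
    return config


config = load_config(CONFIG_TEXT)
print(config["cloud_primary"]["model"])
```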

Game Day: Developer writes a complex Python script. They hit "Rewrite with Async IO".

- Step 1: OpenClaude detects asyncio is not imported.

- Step 2: It uses Ollama (fast, local) to verify syntax if possible.

- Step 3: It sends the refactored logic to GPT-4o for the algorithmic optimization.

- Step 4: Changes are written directly to VS Code tabs instantly.
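Step 1 can be approximated locally with Python's standard `ast` module, which also catches syntax errors when it parses the file. The helpers `imports_module` and `ensure_import` are hypothetical names, and this is one plausible way to do the check rather than a description of OpenClaude's internals.

```python
import ast


def imports_module(source: str, module: str) -> bool:
    """Return True if `source` imports `module` (raises SyntaxError on bad code)."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            if any(alias.name.split(".")[0] == module for alias in node.names):
                return True
        elif isinstance(node, ast.ImportFrom):
            # node.module is None for relative imports like `from . import x`.
            if node.module and node.module.split(".")[0] == module:
                return True
    return False


def ensure_import(source: str, module: str) -> str:
    """Prepend `import module` when it is missing; otherwise return source unchanged."""
    if imports_module(source, module):
        return source
    return f"import {module}\n{source}"


script = "async def main():\n    await asyncio.sleep(1)\n"
patched = ensure_import(script, "asyncio")
print(patched)
```

A production version would need to insert the import after any module docstring or `from __future__` line, which this sketch ignores.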

Mistake to Avoid

Do not ignore context length limits. Monitor your token usage continuously. OpenClaude helps manage switching between providers, but token costs can skyrocket if the agent does not respect your providers' official rate limits and automatically truncate historical context for studio keys.
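Truncating history to a token budget can be sketched like this. The four-characters-per-token estimate is a crude heuristic, not a real tokenizer, and `truncate_history` is a hypothetical helper; it keeps the most recent messages that fit and drops older ones.

```python
from typing import Dict, List


def estimate_tokens(text: str) -> int:
    """Rough heuristic (about 4 characters per token); not a real tokenizer."""
    return max(1, len(text) // 4)


def truncate_history(messages: List[Dict[str, str]], budget: int) -> List[Dict[str, str]]:
    """Keep the newest messages whose estimated token total fits within `budget`."""
    kept: List[Dict[str, str]] = []
    used = 0
    for message in reversed(messages):      # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()                          # restore chronological order
    return kept


history = [
    {"role": "user", "content": "a" * 400},       # ~100 tokens
    {"role": "assistant", "content": "b" * 200},  # ~50 tokens
    {"role": "user", "content": "c" * 80},        # ~20 tokens
]

trimmed = truncate_history(history, budget=80)
print([m["content"][0] for m in trimmed])
```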

⚔️ Comparison Section

| Feature | OpenClaude Workflow | Generic LangChain | Native Vercel AI |
|---|---|---|---|
| Architecture | Unified Agent Router | Modular Components | Library-based |
| Model Agnostic | Yes (Auto-switches Ollama/Gemini) | Yes (Manual config) | Yes (Generic) |
| Ease of Use | High (VS Code Native) | Medium (Dev complexity) | Medium |
| Best For | Teams needing multi-provider agility | Custom complex RAG pipelines | MERN Stack apps |
| Cost | Variable (Best use of free tiers) | Not specified | Not specified |

Verdict: OpenClaude wins on workflow continuity. If you are a solo dev building a standard app, LangChain is fine. If you are a team managing legacy repos and diverse AI models, OpenClaude is the production-ready fix.

⚡ Key Takeaways

- Fragmentation kills productivity: Stop configuring separate tools for every task in your pipeline.

- Agility is key: The ability to swap between Ollama (local) and GPT-4 (cloud) dynamically is a massive workflow advantage.

- MCP is the connector: The Model Context Protocol is what allows these disparate services to "talk" to each other in a human-readable format.

- Context Management: Running a smart router is only half the battle; you must manage token limits to prevent bill shock.

🔗 Related Topics

- Understanding MCP: The New OpenAI Governance Protocol

- Top 5 VS Code Extensions for AI-Assisted SRE in 2024

- Local LLMs vs Cloud APIs: When to Use Ollama

🔮 Future Scope

We expect OpenClaude to evolve by integrating Memory Layers that persist across sessions. Imagine your agent "remembering" your team's architectural decisions from last year and applying them to new PRs automatically. The next phase is Self-Healing Pipelines, where the workflow detects a broken integration and tries a fallback provider before alerting the developer.

❓ FAQ

Q: Is OpenClaude open source? A: Yes. It is designed primarily as an open-source workflow tool to ensure transparency and vendor independence.

Q: Does it work offline? A: Yes, because it supports Ollama and similar local LLMs, you can perform coding tasks without internet access as long as your local models are downloaded.

Q: Can I use it with my existing GitHub repositories? A: Absolutely. The integration is designed to hook directly into your existing git flow and VS Code environment.

Q: What makes it better than just using an API wrapper? A: It focuses on the workflow and selection logic. It doesn't just call an API; it decides which API to call based on the context of the code being written.

Q: Is there a cost involved in setting it up? A: The tool infrastructure might be free, but you are still paying for the external AI providers (OpenAI/Gemini) if you use their cloud endpoints.

🎯 Conclusion

OpenClaude transforms coding workflows not just by providing access to better APIs, but by solving the cognitive debt of context switching. By offering a unified coding agent approach that respects the unique strengths of OpenAI, Gemini, and local models, it empowers teams to ship faster in a multi-cloud AI landscape.

If you are tired of your tooling dictating your development speed, it is time to switch to a workflow that adapts to you. Explore the platform at https://www.openclaude.clauxel.com/ and see the difference a unified workflow makes.
