
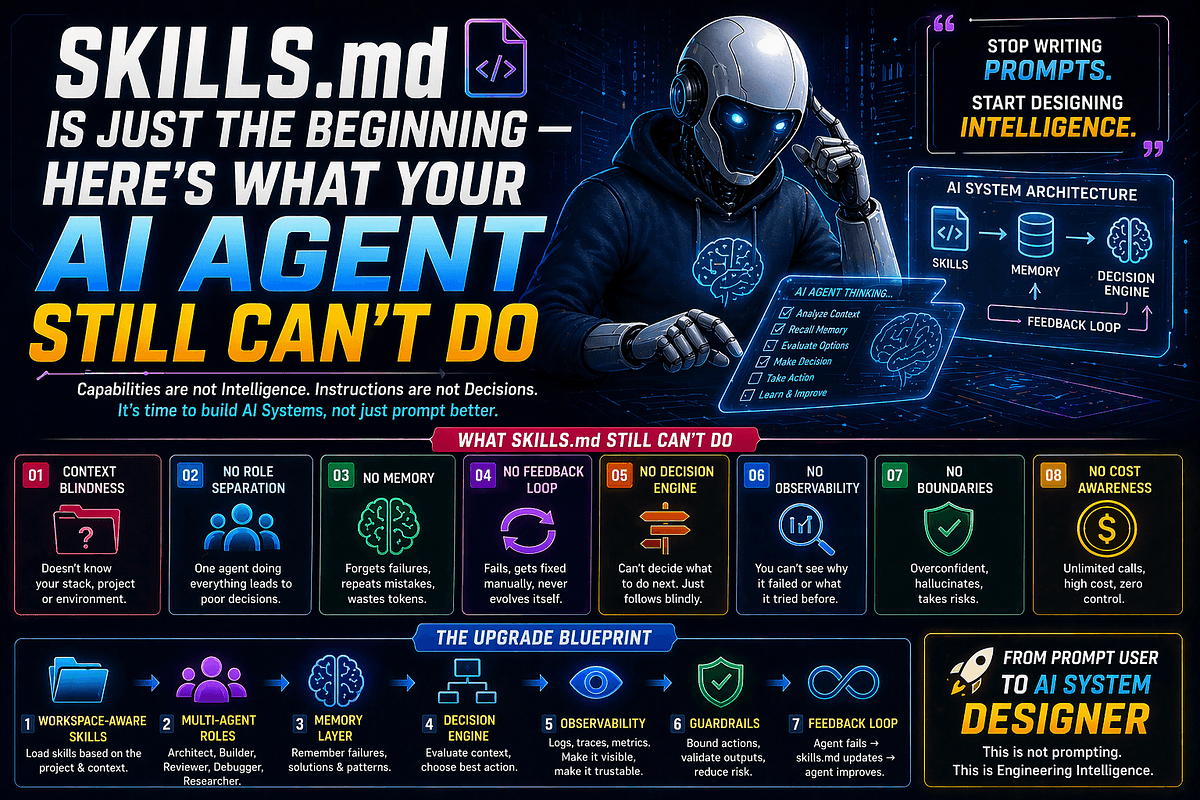

Skills.md Is Just the Beginning: What Your AI Agent Still Can't Do (Deep Tech Guide)

- Static vs. Dynamic: skills.md defines capabilities (static state), but it lacks context management and memory (dynamic behavior).

- No Self-Correction: A basic skills.md agent executes code or prompts but cannot natively verify results through a "reflection loop" or error analysis.

- Context Awareness Gap: skills.md tells the agent how to act, but it doesn't know what it already knows, leading to redundant context processing and wasted token budget.

- Orchestration Lag: skills.md relies on static instruction sets, but real-world agents require orchestration (deciding which skills to use based on dynamic outcomes).

- Hidden Friction: Building just a skills.md file creates a "puppet": a bot that follows orders without significant autonomy or system-level adaptation.

🎯 Introduction

You just finished defining your skills.md file. The files are organized. The descriptions are clear. You feel ready.

But if you think skills.md is the secret sauce for intelligent automation, you are missing the bigger architectural picture. Many developers equate a well-written skills.md with a smart agent, but skills.md is merely the catalog. It describes the tools, not the thinker.

Skills ≠ Intelligence.

By relying solely on skills.md, you are building a rigid system that cannot adapt. To build an AI agent that survives in production, you must understand the gap between capability and autonomy. In this guide, we will break down why skills.md is just the beginning and what your architecture must do next to handle real-world complexity.

🧠 Core Explanation: The "What" vs. The "How"

In software engineering, we distinguish between a Command List and a Control System.

- skills.md = The Command List: This tells the Large Language Model (LLM) what functions and APIs exist (e.g., "You CAN use delete_user"). This is reactive. It waits for a request and looks for a matching skill.

- Missing = The Control System: Real intelligence requires predicting consequences, managing long-term memory, and handling failures.

If your agent reads skills.md and just executes the first match, it succumbs to "reasoning collapse": it gets stuck in loops or hallucinates because it has no memory of previous attempts.

🔥 Contrarian Insight

"The most dangerous misconception in agentic architecture today is that adding a comprehensive skills.md makes an agent safer."

In reality, exhaustive skills.md files often lead to the "Curse of Choice." When an agent sees too many defined capabilities, tool selection becomes noisy: it picks the wrong tool or overcomplicates simple tasks. A truly intelligent agent relies on sparse skills and rich orchestration, not thousands of skill definitions.

🔍 Deep Dive: The skills.md Architecture Gap

To understand what agents can't do with just a skills.md file, we have to look at the standard RAG (Retrieval-Augmented Generation) architecture and see where it breaks when applied to agents.

1. The Static Index Problem

Most skills.md implementations convert markdown files into vectors (embeddings).

- How it works: The agent searches for the semantic meaning of a user query in skills.md to find a trigger.

- The Limitation: This is offline cognition. The agent cannot "argue" with the content of skills.md to refine its understanding. It either accepts the skill description or hallucinates a new one. It lacks active inference.
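To make the static lookup concrete, here is a minimal sketch. It assumes a hypothetical in-memory catalog (`SKILLS`) and uses bag-of-words cosine similarity as a stand-in for real embeddings; the `min_score` threshold is also an illustrative choice, not a recommended value. The lookup is a one-shot match: it returns a skill or nothing, with no way to question the description it matched.

```python
import math
from collections import Counter

# Hypothetical catalog: skill name -> description, as it might be parsed from skills.md.
SKILLS = {
    "delete_user": "delete a user account by id",
    "create_user": "create a new user account",
    "send_email": "send an email message to a recipient",
}


def vectorize(text):
    """Bag-of-words term counts; a stand-in for a real embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def find_skill(query, min_score=0.3):
    """Return the best-matching skill name, or None if nothing is close enough."""
    query_vec = vectorize(query)
    scored = [(cosine(query_vec, vectorize(desc)), name) for name, desc in SKILLS.items()]
    best_score, best_name = max(scored)
    return best_name if best_score >= min_score else None
```

Returning `None` below the threshold is one way to give the orchestration layer a chance to ask for clarification instead of forcing a weak match.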

2. Feedback Loops are Absent

An AI agent executing a skills.md skill typically runs like this: Query -> Skill Selected -> Action Executed -> Result Returned.

If the result is an error, the static skills.md pipeline usually terminates or asks the user for clarification.

- The Missing Piece: The agent needs a Meta-Skill, a function that says:

  - Success? Verify against a schema.

  - Error? Check the reasoning.

  - Fallback? Search skills.md for an alternative approach.

skills.md alone cannot handle this logic; it requires runtime decision-making.
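One way to picture the Meta-Skill is as a wrapper around skill execution. The sketch below is an assumption-heavy illustration: `run_with_meta_skill`, `matches_schema`, and the two `delete_user_*` functions are hypothetical names, and the "schema" is reduced to a map of required fields and their Python types.

```python
def matches_schema(result, required_fields):
    """Success check: result is a dict and every required field has the expected type."""
    return isinstance(result, dict) and all(
        isinstance(result.get(field), expected_type)
        for field, expected_type in required_fields.items()
    )


def run_with_meta_skill(candidates, args, required_fields):
    """Try each candidate skill in order until one returns a result that verifies.

    candidates: list of (name, callable) pairs, primary skill first, fallbacks after.
    Returns (skill_name, result, log), or (None, None, log) if every candidate fails.
    """
    log = []
    for name, skill in candidates:
        try:
            result = skill(**args)
        except Exception as exc:  # Error? Record the reason so it can be analyzed.
            log.append(f"{name}: error: {exc}")
            continue
        if matches_schema(result, required_fields):  # Success? Verify against a schema.
            log.append(f"{name}: ok")
            return name, result, log
        log.append(f"{name}: result failed schema check")  # Fallback? Try the next one.
    return None, None, log


# Hypothetical skills: the primary one fails, the alternative succeeds.
def delete_user_v1(user_id):
    raise TimeoutError("upstream timed out")


def delete_user_v2(user_id):
    return {"status": "deleted", "user_id": user_id}


name, result, log = run_with_meta_skill(
    [("delete_user_v1", delete_user_v1), ("delete_user_v2", delete_user_v2)],
    {"user_id": 42},
    {"status": str},
)
```

The returned `log` is the raw material for the "check the reasoning" step: it records why each candidate was rejected.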

3. Memory Fragmentation

skills.md provides general knowledge (skills). It does not provide situational knowledge (memory).

- If an agent deletes a user through a skill defined in skills.md, but nothing records that the deletion happened, you have a fragile state machine.

- A skills.md agent is stateless by design; a production agent must be stateful.
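As a rough illustration of what "stateful" can mean here, the sketch below keeps an event log in SQLite so that a skill can check what has already happened before acting. The `AgentMemory` class, its table layout, and the `delete_user` wrapper are all hypothetical; a real deployment would pick its own store and schema.

```python
import sqlite3


class AgentMemory:
    """Minimal event store so the agent can ask what it has already done."""

    def __init__(self, path=":memory:"):
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS events (skill TEXT, target TEXT, outcome TEXT)"
        )

    def record(self, skill, target, outcome):
        """Persist one executed action and its outcome."""
        self.db.execute("INSERT INTO events VALUES (?, ?, ?)", (skill, target, outcome))
        self.db.commit()

    def already_done(self, skill, target):
        """True if this skill already succeeded for this target."""
        row = self.db.execute(
            "SELECT 1 FROM events WHERE skill = ? AND target = ? AND outcome = 'success'",
            (skill, target),
        ).fetchone()
        return row is not None


def delete_user(memory, user_id):
    """Hypothetical skill wrapper that consults memory before acting."""
    if memory.already_done("delete_user", user_id):
        return "skipped: user already deleted"
    # ... the real deletion call would go here ...
    memory.record("delete_user", user_id, "success")
    return "deleted"
```

Calling `delete_user(memory, "u-17")` twice performs the deletion once and skips the second call, which is the behavior a stateless agent cannot provide.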

🏗️ System Design: Building What Comes After skills.md

To fix these gaps, you need to extend the architecture. Here is the flow from your current skills.md setup to a production-grade Agent.

The Flow

- Perception (Input): User asks for a task.

- Intent Analysis: (Your current logic).

- Reflection Loop (The Missing Step):

  - Draft Plan based on skills.md.

  - Critique Plan: "Is this plan optimal? Do I have the right memory?"

  - Execute Skill.

- Post-Execution Verification:

  - Did the API return a 200 OK?

  - If no, retry or invoke error handling logic.
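The flow above can be sketched as a small control loop. This is an illustration under stated assumptions, not a reference design: `run_task` and its three callables (`draft_plan`, `critique`, `execute`) are hypothetical, and "verification" is reduced to checking for an HTTP-style 200 status.

```python
def run_task(request, draft_plan, critique, execute, max_attempts=3):
    """Draft a plan, critique it, execute it, and verify each step's result.

    draft_plan(request, feedback) -> list of step names
    critique(plan) -> None if the plan is acceptable, else a feedback string
    execute(step) -> HTTP-style status code
    """
    feedback = None
    for attempt in range(1, max_attempts + 1):
        plan = draft_plan(request, feedback)
        feedback = critique(plan)
        if feedback is not None:
            continue  # plan rejected: redraft using the critique
        failed_step = None
        for step in plan:
            if execute(step) != 200:  # post-execution verification
                failed_step = step
                break
        if failed_step is None:
            return {"status": "done", "attempts": attempt, "plan": plan}
        feedback = f"step failed: {failed_step}"  # redraft with the error in hand
    return {"status": "failed", "attempts": max_attempts, "feedback": feedback}


# Hypothetical stand-ins for the LLM-backed pieces.
def draft_plan(request, feedback):
    return ["lookup_user", "delete_user"] if feedback else ["delete_user"]


def critique(plan):
    return None if "lookup_user" in plan else "look up the user before deleting"


outcome = run_task("remove user 42", draft_plan, critique, lambda step: 200)
```

In this run the first draft is rejected by the critique, the second draft passes and executes cleanly, so the task completes on attempt 2.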

Implementation Strategy (The "Agent-Lite" Architecture)

You shouldn't just write text files. You should structure them programmatically to inject intelligence.

    # Sketch: extending the skills.md engine with a logical orchestrator.
    # parse_skills_md, agent_think and call_skill are left for you to implement;
    # memory_store is assumed to expose has_event() and save_event().

    class AgentOrchestrator:
        def __init__(self, skills_source, memory_store):
            # Load skills.md, but parse it into a structured catalog keyed by skill name
            self.skills_catalog = self.parse_skills_md(skills_source)
            self.memory = memory_store  # architectural dependency missing from a basic .md setup
            self.max_iterations = 5

        def execute_task(self, user_input):
            # agent_think has access to memory, not just skills.md;
            # it is assumed to return a list of skill objects with a .name
            plan = self.agent_think(user_input)
            for skill in plan[: self.max_iterations]:
                if not self.validate_skill(skill.name):
                    return "Task Failed"
                result = self.call_skill(skill)
                # The error-handling step (the power missing from static skills.md)
                if result.is_error:
                    failure_key = f"Failed: {skill.name}"
                    if self.memory.has_event(failure_key):
                        return f"{skill.name} has failed before; not retrying"
                    self.memory.save_event(failure_key)
                    return f"Task Failed: {result.reason}"
            return "Task Completed"

        def validate_skill(self, skill_name):
            # Check that the skill exists in the skills.md catalog
            if skill_name in self.skills_catalog:
                return True
            # Guard against hallucinated skills
            print(f"CRITICAL: {skill_name} not found in skills.md. Is this a hallucination?")
            return False

This snippet addresses the mismatch between what the model asks for and what the catalog actually offers. It treats skills.md as a read-only API reference, while the orchestration layer handles logic.
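The orchestrator depends on turning skills.md into a structured catalog. The article does not specify a file layout, so the sketch below assumes one: each skill starts with a `## name` heading and the lines up to the next heading form its description. Both that layout and the standalone `parse_skills_md` function are assumptions for illustration.

```python
def parse_skills_md(text):
    """Parse a skills.md document into {skill_name: description}.

    Assumed layout (hypothetical): each skill starts with a '## name' heading,
    and the non-empty lines up to the next heading form its description.
    """
    catalog = {}
    current = None
    for line in text.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            catalog[current] = []
        elif current is not None and line.strip():
            catalog[current].append(line.strip())
    return {name: " ".join(lines) for name, lines in catalog.items()}


SAMPLE = """\
# Skills

## delete_user
Deletes a user account.
Requires the user ID.

## send_email
Sends an email to a recipient.
"""

catalog = parse_skills_md(SAMPLE)
```

With a dictionary like this, a membership test such as `"drop_database" in catalog` is enough to catch a skill name the model invented.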

🧑💻 Practical Value: How to Evolve Today

Don't throw away your skills.md yet. It is the foundation. Here is how to upgrade it to handle the "Can't Do" list above.

1. Move from Markdown to JSON Schema

Stop describing skills as paragraphs. Use JSON Schema definitions.

- Old Way: "This skill deletes users. You need the ID."

- Better Way:

      { "skill_id": "delete_user", "type": "destructive", "requires_auth": true, "outputs_schema": { "status": "string" } }

Why: A structured definition can be handed to the LLM as a strict contract and checked before anything runs, which makes it much harder for the model to hallucinate input parameters.
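Here is a small sketch of what checking a proposed call against a structured definition might look like. The fields `skill_id`, `type`, `requires_auth`, and `outputs_schema` come from the example above; the `inputs_schema` field, the `TYPE_MAP` table, and `validate_args` are assumptions added for illustration. A full JSON Schema validator would cover far more cases than this hand-rolled check.

```python
import json

# The definition from the text, plus a hypothetical "inputs_schema" field.
SKILL_DEF = json.loads("""
{
  "skill_id": "delete_user",
  "type": "destructive",
  "requires_auth": true,
  "inputs_schema": { "user_id": "integer" },
  "outputs_schema": { "status": "string" }
}
""")

# Maps the type names used in the definition to Python types.
TYPE_MAP = {"string": str, "integer": int, "boolean": bool}


def validate_args(skill_def, args):
    """Return a list of problems with the proposed arguments; empty means valid."""
    schema = skill_def["inputs_schema"]
    problems = [f"unexpected parameter: {name}" for name in args if name not in schema]
    for name, type_name in schema.items():
        if name not in args:
            problems.append(f"missing parameter: {name}")
        elif not isinstance(args[name], TYPE_MAP[type_name]):
            problems.append(f"{name} should be {type_name}")
    return problems
```

Running the check before the skill executes means an invented parameter such as `username` is rejected by code rather than discovered through a failed API call.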

2. Add a "Policy" Meta-Skill

Create a companion file to skills.md named Policies.md.

- Define "Risk Levels."

- Define "Double Check" triggers.

- If your agent hits this file during execution, it pauses execution to ask for confirmation.

skills.md is about what you can do; Policies.md is about what you should do.
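A policy layer of this kind can be sketched as a gate in front of skill execution. Everything named here is hypothetical: the `POLICIES` table stands in for whatever you parse out of Policies.md, and `confirm` stands in for a human prompt or a second automated check.

```python
# Hypothetical policy table, as it might be parsed from Policies.md.
POLICIES = {
    "delete_user": {"risk": "high", "double_check": True},
    "send_email": {"risk": "low", "double_check": False},
}


def run_with_policy(skill_name, action, confirm):
    """Run `action` only if the policy allows it.

    confirm(skill_name, risk) -> bool asks a human (or another check) for approval.
    Skills with no policy entry are treated as high risk by default.
    """
    policy = POLICIES.get(skill_name, {"risk": "high", "double_check": True})
    if policy["double_check"] and not confirm(skill_name, policy["risk"]):
        return "blocked: confirmation denied"
    return action()
```

Defaulting unknown skills to high risk is a deliberate choice in this sketch: a skill missing from the policy file pauses for confirmation instead of running unchecked.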

3. The 80/20 Rule for Skill Branching

You don't need skills for everything. Identify the top 20% of skills that handle 80% of the work and centralize them. Keep less common tasks in secondary skills.md sources that are loaded on demand, and optimize the main path for speed.
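If you log which skills your agent actually calls, finding the core set is a short calculation. The sketch below assumes a hypothetical `usage_log` (a flat list of skill names, one per call) and a `core_skills` helper; the 0.8 coverage target mirrors the 80/20 framing and is not a tuned value.

```python
from collections import Counter


def core_skills(usage_log, coverage=0.8):
    """Return the most-used skills that together cover `coverage` of all calls."""
    counts = Counter(usage_log)
    total = sum(counts.values())
    selected, covered = [], 0
    for name, count in counts.most_common():
        if covered >= coverage * total:
            break
        selected.append(name)
        covered += count
    return selected


# Hypothetical usage data: 100 logged calls across four skills.
usage_log = (
    ["search"] * 50 + ["summarize"] * 35 + ["delete_user"] * 10 + ["export_csv"] * 5
)
main_path = core_skills(usage_log)
```

Here `search` and `summarize` account for 85 of 100 calls, so they form the main path and the remaining two skills can be loaded on demand.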

⚔️ Comparison

| Feature | Traditional RAG (skills.md) | Advanced Agent Architecture |
|---|---|---|
| Memory | None (Stateless) | Persistent (Vector DB + SQL) |
| Error Handling | Handled by user prompt | Autonomous Retry & Reflection |
| Skill Discovery | Static Search | Dynamic Relevance Scoring |
| Output | Text & Data | Reasoned Action |
| Scalability | Low | High (Orchestrated) |

⚡ Key Takeaways

- skills.md defines Capabilities; an agent needs Orchestration to turn them into actions.

- Static knowledge in skills.md cannot correct errors; you need a Policy layer or a direct Error Handler function.

- Memory is the missing link. If your agent is stateless, it is effectively a dumb chatbot in a loop.

- JSON Schema your skills.md data to allow the LLM to parse inputs/outputs programmatically.

- Reflection loops (thinking about the thinking) are what separate "tools" from "agents."

🔗 Related Topics

- Building Autonomous Agents with LangGraph: A System Design

- The Problem with Cursor Agents: Skills vs. Execution

- RAG vs. GraphRAG: Why Context Swamping Happens in Complex Apps

- Production-Ready LLM Guards: Protecting Your Agent Pipelines

🔮 Future Scope

The evolution of skills.md lies in Procedural Generation.

Instead of a human writing a skill, the skills.md system will eventually allow the agent to write the description of a skill it needs to achieve a goal, register it in its vector store, and immediately start using it without human intervention.

❓ FAQ

Q: Is skills.md bad for my AI agent?

A: No, it is a prerequisite. A skill-less agent is useless. However, relying only on skills.md creates a brittle system unable to handle real-world noise.

Q: What is the "Main Loop" of an agent vs. basic RAG?

A: The main loop of an agent is Plan -> Act -> Observe -> Reflect. Basic RAG is just Query -> Retrieve -> Answer. skills.md supports the Retrieve step, but misses the Loop.

Q: Can I use skills.md for memory?

A: Avoid it. skills.md files can grow very large, causing "context swamping." Use external Vector Stores (Milvus, Pinecone) for memory and skills.md for operational aids.

Q: How do I debug skills.md failures?

A: Log the prompts that the agent generates based on your skills.md definitions. If the prompt is bad, your skills.md definitions are ambiguous.

🎯 Conclusion

skills.md is powerful, but it is static. It is the user manual; it is not the brain.

If you want to move from working on good demos to shipping production-grade automation, you must build the commitment layer (memory), the safety layer (verification), and the decision layer (orchestration) that sit on top of your skills.md definitions.

Don't just give your agent a list of skills. Give it a reason to use them responsibly.
