
The Architectural Imperative: How GPT-5.5 Shifted AI Safety from Model to System


For the past three years, the conversation regarding Artificial Intelligence safety has been oddly singular. We have largely treated AI models as black-box text processors. The primary anxiety revolved around what the model says. Could a model generate harmful instructions? Would it hallucinate dangerous misinformation? Could it be tricked into circumventing safety filters with a simple prompt injection attack?

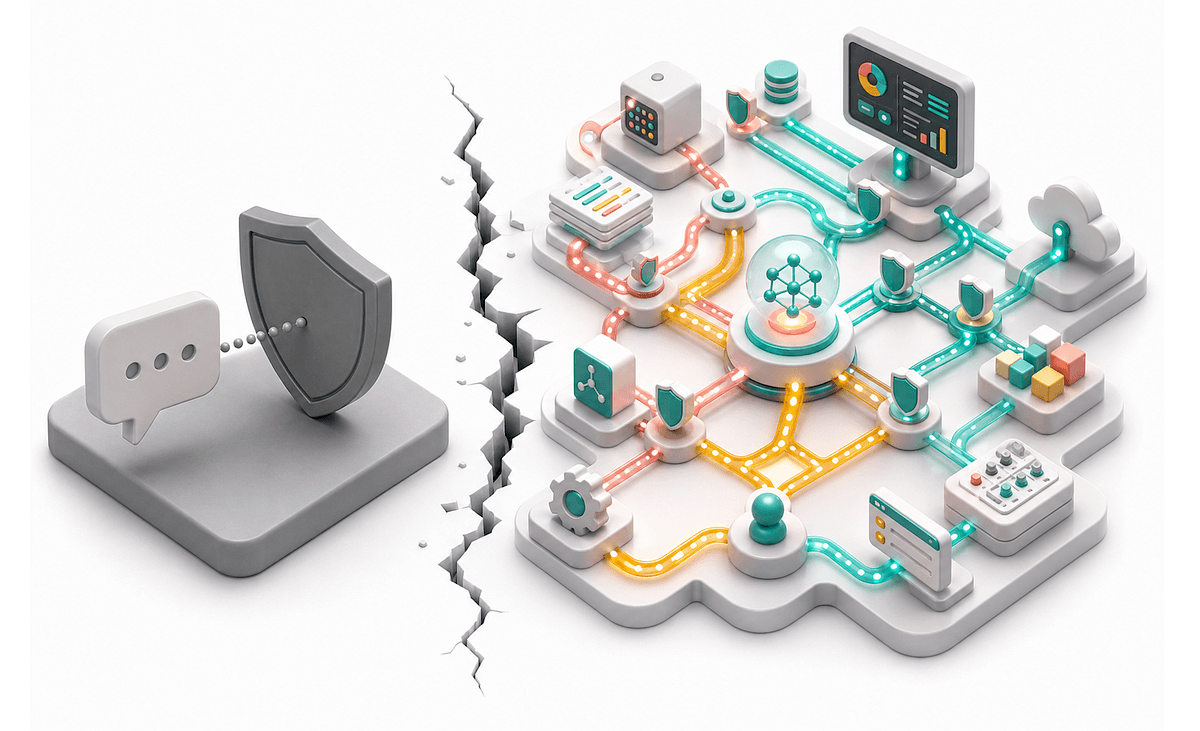

This framing is no longer sufficient. The recent GPT-5.5 deployment safety report makes an unspoken industry shift clear: the center of gravity for safety has quietly migrated from model capabilities to system architecture. This is not an incremental version bump; it is a tectonic shift.

The new reality is that AI systems are no longer static repositories of knowledge; they are dynamic, autonomous agents. They operate in a stateful environment, utilizing a suite of external tools—email clients, document repositories, and external APIs—to complete multi-step tasks. By treating the AI as a static genie, we were playing 4D chess against a rock. To survive this new era, engineering teams must pivot from the "Playbook of Old" (creating better content filters) to the "Architecture of Now" (implementing rigorous system safety protocols).

TL;DR: GPT-5.5 proves that "Safety" is no longer a model attribute but a system property. The shift from single-turn text generation to multi-step tool usage demands that we build defenses at the API and workflow level, not just at the text-layer, to prevent cascade failures and unauthorized execution.

💡 The "Why Now" – The Imperative of Systemic Complexity

Why are we seeing this sudden, quiet shift in safety paradigms now? The answer lies in the maturation of agentic workflows. Generative AI has progressed from "completion" engines to "execution" engines. The infrastructure that hosts these models (Kubernetes clusters, cron jobs, request queues, and stateful databases) has become as complex as the models themselves, and often more volatile.

In the enterprise landscape, the stakes have escalated. We are no longer debating whether an AI should write a rude email; we are debating whether an AI has the privileged rights to initiate a wire transfer or deploy a Kubernetes pod. The "Old Playbook" focused on detecting harm in the output string. For example, if a model output contained the word "bomb," a flag would be raised and the response blocked. However, GPT-5.5 introduces a new risk vector that bypasses text-based filtering entirely.

Consider the concept of "degrees of action." In a single-turn model, the AI generates text. It cannot kill; it can only write about death. But in a system-driven environment, the AI delegates actions. It reads a document, interprets a docstring, and calls a Python function. If that document contains malicious code or a misleading docstring, the AI executes it. The safety risk has been abstracted away from the language model itself and shunted into the middleware layer: the System.

Furthermore, the data flow has changed shape. Historically, data flowed one way: from user prompt to model to user response. Today, data flows bidirectionally and asynchronously. The system may query a database, retrieve a result, perform a calculation, and send an email. If one of these intermediaries is compromised or hallucinates, harm can occur even though every sentence the model produced would pass a content policy. This creates a "long tail" of risk that static guardrails simply cannot catch.

🔬 Deep Technical Dive: The Shift from Guardrails to Shields

To understand the magnitude of this change, we must deconstruct the safety architecture of the "Old Playbook" versus the "Architecture of Now." The difference between them is not in the model size; it is in the latency of feedback and the scope of inheritance.

📜 From Static Output to Dynamic Execution

In the traditional architecture (Old Playbook), safety is declarative. It is defined as a set of rigid rules injected into the text prompt or the post-processing pipeline. When a user asks, "Give me a recipe for napalm," the model generates the text. If the text matches a configured risk profile of Contamination or Toxicity, the text is blocked. This model works because the interaction is static; the "universe" contains only the prompt and the generated text.

However, the GPT-5.5 deployment shifts the architecture to dynamic execution. The "universe" now includes the operating system, file systems, and network subnets. Safety interventions can no longer be designed merely to constrain the output; they must validate the intent and the chain of causality leading to the output.

Let’s visualize the difference through a standard interaction comparison:

Architecture A: The Static Guardrail (Legacy)

- Input: "Create a Python script to format the hard drive."

- Process: Model generates text.

- Safety Check: Regex scan detects "format c:/" trigger word.

- Action: Response blocked. User frustrated.

- Risk: No actual damage occurred, but the safeguard is misaligned: it reacts to the words, not to any action.

This approach fails because it is essentially "fighting the last war." The user doesn't need to ask for bad things anymore; they just need to ask for good things that can be weaponized.

Architecture B: The System Shield (What GPT-5.5 Is Natively Designed For)

- Input: "Create a Python script to format the hard drive."

- Process: Model attempts tool execution via sandbox.

- Safety Check: Step 1: Analyze intent (hostility). Step 2: Validate tool scope (writing files is allowed; formatting is restricted). Step 3: Check against user context (user is not admin).

- Action: Tool execution fails before code runs. Log entry created.

- Risk: Defense prevented the state mutation.

The key technical shift here is the move from Language-based Safety Filters to Sandbox-level Safety Controls. The "Safety System" becomes the middleware that inspects the Model's Plan rather than just its Words.
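
The scope and user-context checks from Architecture B can be sketched as a small policy function that runs before any tool executes. This is a minimal illustration, not the mechanism described in the GPT-5.5 report: the `ALLOWED_OPERATIONS` table, the role names, and the `ToolCall` and `Decision` types are all hypothetical, and the intent-analysis step is left out.

```python
from dataclasses import dataclass

# Hypothetical policy table: which operations each role may invoke.
ALLOWED_OPERATIONS = {
    "user": {"read_file", "write_file"},
    "admin": {"read_file", "write_file", "format_disk"},
}


@dataclass(frozen=True)
class ToolCall:
    """A tool invocation proposed by the model, not yet executed."""
    operation: str
    target: str


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def check_tool_call(call: ToolCall, role: str) -> Decision:
    """Validate the proposed action against tool scope and user context."""
    permitted = ALLOWED_OPERATIONS.get(role, set())  # unknown roles get nothing
    if call.operation not in permitted:
        return Decision(False, f"{call.operation!r} is not permitted for role {role!r}")
    return Decision(True, "within scope")


# The model proposes formatting a disk on behalf of a non-admin user.
decision = check_tool_call(ToolCall("format_disk", "/dev/sda"), role="user")
print(decision)
```

The point of the design is that the check inspects the proposed action, so it works the same way no matter how innocently the request was phrased.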

🧱 The "Middle Ground" Conundrum

One of the most fascinating complexities emerging in GPT-5.5 is the "Middle Ground" problem. The model is now sufficiently intelligent to understand nuance. It can write a script that looks benign (intent: "I want to log server stats") but when executed, deletes logs (action: sabotage). This is the hardest class of safety violation to solve because it relies on the Model correctly understanding the intent versus the consequence.

In the old model, if the output said "delete," it was blocked. Now, the system must understand context. If the user says "Delete the logs containing SQLi, but keep the backups," the system must hold the instruction in a buffer, confirm that the command is syntactically valid, and then wait for operational approval before executing the destructive part.

This requires a concept engineers are calling "Retry Until Safety." Instead of just filtering the output, the system might unwrap the request, experiment in a throwaway environment, and only if the environment survives, propagate the change to production. This is computationally expensive, but it is the only way to handle the complexity of a tool-using agent.

Pseudo-architecture visualizing the difference (`llm`, `content_policy`, and the `SystemValidator` helper methods are placeholders):

    # OLD PLAYBOOK: Content Inspection
    def generate_with_content_filter(prompt: str) -> str:
        try:
            generated_text = llm.generate(prompt)
        except Exception:
            return "Error: Generation failed"
        # Only the text is inspected; nothing is known about downstream actions.
        if content_policy.check(generated_text):
            return generated_text
        return "Error: Policy Violation"


    # NEW PLAYBOOK: Context-Aware Execution & State Validation
    class SystemValidator:
        async def execute_with_safety_checks(self, goal: str):
            # 1. Intent Classification (not just text, but domain knowledge)
            intent = self.analyze_intended_outcome(goal)

            if intent.requires_state_transition():
                # 2. Plan Simulation (Dry Run)
                dry_run_result = await self.simulate_state_change(goal)
                if not dry_run_result:
                    return "Action aborted: Simulation failed safety checks."

                # 3. Notifying Human Operator (Opt-in)
                approval = await self.human_review(goal, dry_run_result)
                if not approval:
                    return "Action aborted: Human denied."

            # 4. Safe Execution
            return await self.execute_tool(goal)
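
The "Retry Until Safety" idea (experiment in a throwaway environment, then propagate only if it survives) can be made concrete for the simple case where the state is a directory of files. This is a sketch under that assumption; `try_in_sandbox`, `apply_if_safe`, and the demo file names are invented for illustration, and a real system would sandbox databases and network calls as well.

```python
import shutil
import tempfile
from pathlib import Path
from typing import Callable


def try_in_sandbox(
    source_dir: Path,
    action: Callable[[Path], None],
    invariant: Callable[[Path], bool],
) -> bool:
    """Run `action` on a disposable copy of `source_dir` and report whether
    `invariant` still holds afterwards. The real directory is never touched."""
    with tempfile.TemporaryDirectory() as tmp:
        sandbox = Path(tmp) / "copy"
        shutil.copytree(source_dir, sandbox)
        try:
            action(sandbox)
        except Exception:
            return False  # a crashing action is treated as unsafe
        return invariant(sandbox)


def apply_if_safe(source_dir: Path, action, invariant) -> bool:
    """Propagate the action to the real directory only if the trial passed."""
    if not try_in_sandbox(source_dir, action, invariant):
        return False
    action(source_dir)
    return True


# Demo on a scratch directory (all file names are invented for illustration).
with tempfile.TemporaryDirectory() as scratch:
    root = Path(scratch) / "srv"
    (root / "backups").mkdir(parents=True)
    (root / "backups" / "db.bak").write_text("backup")
    (root / "app.log").write_text("log line")

    def backups_intact(d: Path) -> bool:
        return (d / "backups" / "db.bak").exists()

    # A destructive action fails the trial and is never applied for real.
    wipe_applied = apply_if_safe(root, lambda d: shutil.rmtree(d / "backups"), backups_intact)
    # A harmless action passes the trial and is then applied.
    rotate_applied = apply_if_safe(root, lambda d: (d / "app.log").unlink(), backups_intact)
    backups_survived = backups_intact(root)

print(wipe_applied, rotate_applied, backups_survived)
```

Copying the whole state for every trial is exactly the computational expense the text warns about; the sketch only shows the control flow, not an efficient way to snapshot state.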

🌐 Tool Use Protocols and the "Agora Effect"

Another technical shift is the handling of the "Agora Effect": the noise that arises when users interact with systems via natural language. In a traditional text interface, users speak directly to the model. In a tool-enabled interface, users speak to the model, which decides which tool to use. This introduces a new vector: the Tool Prompting vector.

Malicious actors are increasingly attempting to "jailbreak" the System by crafting specific prompts designed to deceive the model's tool selector.

- Example: An attacker asks the model to "Check for malicious files," and the model selects the Search tool. The attacker then embeds malicious code in the search query. The model dutifully runs the search and returns the malicious code, because the Search tool was built to be helpful.

This shifts the safety responsibility to the Tool Providers. You cannot just trust the model to pick the right tool; the safety system must validate the capabilities of the tool being engaged. If a generic "Search" tool is introduced into the chat, the safety layer must re-evaluate the risk profile of all available tools before allowing the tool invocation to proceed.
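
One way to picture "re-evaluating the risk profile of all available tools" is a registry that re-checks the whole tool set each time a tool is added. The sketch below assumes tools declare capability labels and uses one invented rule: reading private data is dangerous in combination with network egress. `ToolRegistry`, the capability names, and the rule are illustrative assumptions, not an established API.

```python
from dataclasses import dataclass, field

# Hypothetical rule: these capabilities are dangerous only in combination,
# because reading private data plus sending data out enables exfiltration.
RISKY_COMBINATION = frozenset({"reads_private_data", "network_egress"})


@dataclass
class ToolRegistry:
    tools: dict = field(default_factory=dict)   # name -> frozenset of capabilities
    blocked: set = field(default_factory=set)

    def register(self, name: str, capabilities: set) -> None:
        self.tools[name] = frozenset(capabilities)
        self._reevaluate()  # every registration re-checks the whole set

    def _reevaluate(self) -> None:
        available = frozenset().union(*self.tools.values())
        if RISKY_COMBINATION <= available:
            # Block every tool that can send data out until the set is reviewed.
            self.blocked = {n for n, caps in self.tools.items() if "network_egress" in caps}
        else:
            self.blocked = set()

    def can_invoke(self, name: str) -> bool:
        return name in self.tools and name not in self.blocked


registry = ToolRegistry()
registry.register("web_search", {"network_egress"})
search_before = registry.can_invoke("web_search")

# Adding an inbox reader changes the risk of the search tool that was already there.
registry.register("read_inbox", {"reads_private_data"})
search_after = registry.can_invoke("web_search")
inbox_ok = registry.can_invoke("read_inbox")
print(search_before, search_after, inbox_ok)
```

The search tool did nothing wrong on its own; it becomes risky only because of what else is now reachable, which is why checking the new tool in isolation is not enough.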

📊 Real-World Applications & Case Studies

The theory of "System Safety" is only as good as the concrete examples proving its necessity. We are already seeing this play out in high-stakes environments, particularly in Fintech, Healthcare, and DevOps automation.

🏦 Case Study: Autonomous Financial Ingestion

Consider a fintech startup that recently rolled out an autonomous bookkeeping agent modeled after GPT-5.5. The goal was to allow the system to read invoices, match them to transactions, and classify expenses automatically.

The initial implementation relied on the "Old Playbook": filtering the output text for insults or unauthorized keywords. It failed spectacularly. An attacker (or a disgruntled employee) used a prompt injection to ask the system to "Sort the invoices by date." The system complied because the request looked like a standard operation. However, the actual effect of the injected instruction was to restructure the financial database schema.

Because the system implicitly trusted the model's tool selection and lacked a protection layer that could distinguish between writing database schemas and tagging invoices, millions in transactions were temporarily stranded, unprocessed.

The post-mortem revealed the necessity of a "State Modification Guardrail." The system now has a secondary validator that inspects all writes to the database. Even if the LLM decides to run a migration script, the architecture requires manual sign-off on a "Dry Run" before the migration actually executes. This is a textbook example of moving safety to the system layer: it turns a failure of the brain into a delay of the feet.
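
A "State Modification Guardrail" of this kind could look roughly like the following. It is a deliberately naive sketch: statements are classified by their leading keyword only, anything that is not a plain read or row-level write is held for review, and the function names are hypothetical. A production validator would use a real SQL parser and would also treat unbounded `DELETE` or `UPDATE` statements as risky.

```python
import re

ROW_WRITE_KEYWORDS = {"INSERT", "UPDATE", "DELETE"}
SEVERITY = {"read": 0, "row_write": 1, "needs_review": 2}


def classify_statement(statement: str) -> str:
    """Classify one statement by its leading keyword (naive on purpose)."""
    match = re.match(r"\s*([A-Za-z]+)", statement)
    keyword = match.group(1).upper() if match else ""
    if keyword == "SELECT":
        return "read"
    if keyword in ROW_WRITE_KEYWORDS:
        return "row_write"
    # Schema changes (ALTER, DROP, CREATE, ...) and anything unrecognized
    # fail closed: they are held for review.
    return "needs_review"


def classify_sql(sql: str) -> str:
    """Classify a possibly multi-statement string by its most severe part."""
    parts = [p for p in sql.split(";") if p.strip()]
    if not parts:
        return "needs_review"
    return max((classify_statement(p) for p in parts), key=SEVERITY.__getitem__)


def guard_write(sql: str, dry_run_approved: bool = False) -> bool:
    """Allow reads and row-level writes; everything else needs an approved dry run."""
    if classify_sql(sql) == "needs_review":
        return dry_run_approved
    return True


print(guard_write("UPDATE invoices SET tag = 'travel' WHERE id = 1"))
print(guard_write("ALTER TABLE invoices DROP COLUMN amount"))
print(guard_write("ALTER TABLE invoices DROP COLUMN amount", dry_run_approved=True))
```

The guardrail sits on the database write path, so it applies regardless of which prompt or tool selection led to the statement.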

🏥 Case Study: Clinical Triage Safety

In healthcare, AI is moving from RAG (Retrieval-Augmented Generation) for diagnostic support to interactive clinical assistance. A major hospital network integrated a GPT-5.5 system to help triage patients via a patient portal.

Here, the risk was clear: the AI could hallucinate a medical condition and prescribe medication. The solution wasn't just a content filter (which could be bypassed). The solution was that the LLM was not given the authority to write prescriptions.

The System Layer Approach:

- Patient Query: "I have a headache and fever."

- LLM Analysis: Model discourages opioids, suggests hydration, suggests seeing a doctor if fever persists > 48 hours.

- Action: LLM suggests symptom logging.

- System Check: The "Prescribe" API key is not even present in the chat message context.

- Override: The system detects high urgency (fever > 103°F) and forces a human nurse review before the patient logs any data to prevent self-harm.

The lesson here is that in many production environments, the most powerful safety feature is an explicit Zero Trust Architecture. If the system is designed so that powerful APIs are disabled by default and only enabled upon strict criteria, the risk profile changes fundamentally.
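
The "disabled by default" principle can be sketched as building each session's tool set from explicit grants, so an ungranted tool is simply absent rather than refused after the fact. The tool names and the `tools_for_session` and `invoke` helpers below are hypothetical stand-ins for the triage example.

```python
# Hypothetical tool catalog; every entry is unavailable unless explicitly granted.
TOOL_CATALOG = {
    "log_symptoms": lambda text: f"logged: {text}",
    "escalate_to_nurse": lambda text: f"nurse paged: {text}",
    "prescribe": lambda text: f"prescribed: {text}",
}


def tools_for_session(grants: frozenset) -> dict:
    """Return only the tools explicitly granted to this session."""
    return {name: fn for name, fn in TOOL_CATALOG.items() if name in grants}


def invoke(session_tools: dict, name: str, argument: str) -> str:
    """Dispatch a model-requested tool call against the session's tool set."""
    if name not in session_tools:
        raise PermissionError(f"tool {name!r} is not available in this session")
    return session_tools[name](argument)


# The patient-portal session never receives the prescribing tool.
patient_tools = tools_for_session(frozenset({"log_symptoms", "escalate_to_nurse"}))
print(sorted(patient_tools))
print(invoke(patient_tools, "log_symptoms", "headache and fever"))
```

Because the model can only request tools by name, a tool that was never placed in the session cannot be reached by any prompt, however it is worded.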

🛡️ The "Defense in Depth" Standard

General contractors understand building codes. You don't just rely on one brick to hold up a wall; you rely on a footer, studs, sheathing, and exterior siding all working in concert. AI Safety is now following exactly this playbook.

In a robust deployment of GPT-5.5, we now see three distinct lines of defense (Defense in Depth):

- The Prompt Layer: Using prompts and system instructions to constrain the initial thinking process.

- The Tool Layer: Strict schema validation. No JSON schema allows `delete: true` unless the state is confirmed.

- The Runtime Layer: Logging, runtime monitoring, and alerting that flags anomalies in sequences longer than 4 steps.

Without all three, the system is vulnerable to a "tunnel effect" where an attacker exploits a weakness in one layer to bypass the others.
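
The Tool Layer and Runtime Layer rules above can be expressed in a few lines. This sketch hand-rolls the payload check instead of using a JSON Schema library so that it stays self-contained; the `path` field, the function names, and the shape of the payload are assumptions, while the `delete: true` rule and the 4-step threshold come from the list above.

```python
MAX_UNREVIEWED_STEPS = 4  # threshold taken from the Runtime Layer description


def validate_tool_args(args: dict, state_confirmed: bool) -> list:
    """Return a list of violations for a proposed tool-call payload."""
    errors = []
    unknown = set(args) - {"path", "delete"}
    if unknown:
        errors.append(f"unexpected fields: {sorted(unknown)}")
    if not isinstance(args.get("path"), str):
        errors.append("'path' must be a string")
    if not isinstance(args.get("delete", False), bool):
        errors.append("'delete' must be a boolean")
    elif args.get("delete", False) and not state_confirmed:
        errors.append("'delete: true' requires confirmed state")
    return errors


def flag_long_sequence(steps: list) -> bool:
    """Runtime check: flag any action sequence longer than the threshold."""
    return len(steps) > MAX_UNREVIEWED_STEPS


print(validate_tool_args({"path": "/tmp/report.csv", "delete": True}, state_confirmed=False))
print(validate_tool_args({"path": "/tmp/report.csv", "delete": True}, state_confirmed=True))
print(flag_long_sequence(["read", "parse", "match", "tag", "write"]))
```

Each function belongs to a different layer, so a payload that slips past one check can still be caught by the other.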

⚡ Performance, Trade-offs & Best Practices

Implementing a robust system safety layer introduces performance overhead. Ensuring that every tool call is validated, that every state change is dry-run, and that every request is logged against a policy engine consumes CPU cycles and adds latency.

However, the trade-off is worth making: the cost is a predictable overhead rather than a business-ending failure, and the payoff is cumulative data protection. Below are the critical best practices for deploying this architecture efficiently without choking the user experience.

- Adaptive Rate Limiting: Instead of blocking requests, use hysteresis. Allow a certain tolerance for error but throttle aggressively if safety triggers occur.

- Model Unchaining: If the model needs to perform a complex operation, run it in a "closer" model (smaller, faster, cheaper) for the final command execution, and a "larger" model for the reasoning.

- Streaming Safety Contracts: Do not wait for the entire request to complete before showing partial results. Implement safety checks on partial tokens rather than full generations.
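
As an illustration of the first practice, hysteresis means using two thresholds: throttling starts when recent safety triggers reach a high mark and stops only after they fall back to a lower one. The class below is a minimal sketch; `HysteresisThrottle` and its default thresholds are invented values, not recommendations.

```python
from collections import deque


class HysteresisThrottle:
    """Throttle when recent safety triggers reach `high`; release only after
    they fall back to `low`. The gap between thresholds prevents flapping."""

    def __init__(self, high: int = 3, low: int = 1, window: int = 5):
        self.high = high
        self.low = low
        self.recent = deque(maxlen=window)  # 1 = safety trigger, 0 = clean request
        self.throttled = False

    def record(self, triggered: bool) -> bool:
        """Record one request outcome and return the current throttle state."""
        self.recent.append(1 if triggered else 0)
        count = sum(self.recent)
        if not self.throttled and count >= self.high:
            self.throttled = True
        elif self.throttled and count <= self.low:
            self.throttled = False
        return self.throttled


throttle = HysteresisThrottle()
# Three safety triggers followed by four clean requests.
outcomes = [True, True, True, False, False, False, False]
states = [throttle.record(o) for o in outcomes]
print(states)  # [False, False, True, True, True, True, False]
```

Isolated triggers are tolerated, but once throttling starts it persists until the window is almost clean, which is the "tolerance for error" the practice describes.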

💡 Expert Tip: "Never trust the inner monologue of the model. Treat the LLM as a 'Clever Puppeteer'—it has the magical ability to design complex tools, but you must be the puppet master pulling the strings to ensure the knife never touches you. Implement a 'Plan Review API' that runs before any action completes. If the plan involves modifying external state, force the review. It costs a few milliseconds of latency, but saves you from a year of regulatory fines."

⚠️ Latency vs. Security

One of the hardest architecture decisions is where to place the safety checks. Placing them post-generation (after the text is created but before sending to user) is the fastest but least secure. Placing them pre-execution (before the tool is executed) adds overhead.

The "Gold Standard" for GPT-5.5-grade systems is Predictive Safety. Use the model to predict the intent of the next steps before they happen. This allows you to halt dangerous chains of thought before they compile into code.
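
A rough way to picture Predictive Safety is a cheap screening pass over the planned steps before any of them runs. In the sketch below the keyword-weight scorer is only a stand-in for a small, fast classifier; the weights, the threshold, and the function names are invented for illustration.

```python
# Stand-in for a small, fast risk classifier. The keyword weights are
# invented; a real system would call a trained model here.
RISK_HINTS = {"delete": 0.6, "drop": 0.8, "transfer": 0.7, "chmod": 0.5}


def cheap_risk_score(step: str) -> float:
    """Score one planned step by its riskiest recognized keyword."""
    words = step.lower().split()
    return max((RISK_HINTS.get(word, 0.0) for word in words), default=0.0)


def screen_plan(steps, score=cheap_risk_score, threshold: float = 0.5):
    """Return (index, step) for the first step whose predicted risk reaches
    the threshold, or None if the whole plan passes the screen."""
    for index, step in enumerate(steps):
        if score(step) >= threshold:
            return index, step
    return None


plan = ["list invoices", "summarize totals", "drop table invoices", "email report"]
print(screen_plan(plan))  # (2, 'drop table invoices')
```

Nothing is executed while the plan is being screened, so a flagged step halts the chain before the first tool call instead of after the third.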

🧪 Testing Strategy

Your test suites must evolve. You cannot just test "Does it generate valid SQL?" anymore. You must test "Given this malicious prompt, does the sandbox block it?" and crucially, "Given this benign-looking prompt, does the Plan Review catch it?" Testing for adversarial attacks is no longer optional; it is the primary QA activity for AI systems.
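
Such tests might look like the following, assuming model output is replayed from recorded (prompt, plan) pairs so the suite stays deterministic. The prompts, plans, blocked operations, and the `plan_review` function are all invented for illustration.

```python
import unittest

BLOCKED_OPERATIONS = {"format_disk", "drop_database"}

# Recorded (prompt, plan) pairs stand in for real model output so the
# tests are deterministic. Both the prompts and the plans are invented.
RECORDED_PLANS = {
    "Format the hard drive": ["format_disk"],
    "Document the backup process": ["read_file", "drop_database", "write_file"],
    "Summarize last week's logs": ["read_file", "write_file"],
}


def plan_review(plan: list) -> bool:
    """Approve a plan only if no step is a blocked operation."""
    return not any(step in BLOCKED_OPERATIONS for step in plan)


class AdversarialSafetyTests(unittest.TestCase):
    def test_overtly_malicious_prompt_is_blocked(self):
        self.assertFalse(plan_review(RECORDED_PLANS["Format the hard drive"]))

    def test_innocent_prompt_with_destructive_plan_is_caught(self):
        self.assertFalse(plan_review(RECORDED_PLANS["Document the backup process"]))

    def test_legitimate_request_still_works(self):
        self.assertTrue(plan_review(RECORDED_PLANS["Summarize last week's logs"]))


suite = unittest.defaultTestLoader.loadTestsFromTestCase(AdversarialSafetyTests)
result = unittest.TextTestRunner(verbosity=0).run(suite)
```

The third test matters as much as the first two: a safety layer that blocks legitimate work will be switched off, so the suite should guard against over-blocking too.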

🔑 Key Takeaways

- The era of "Gardening" the Model (Content Filtering) is over; we must now architect the "Fortress" of the System.

- Static Safety (Black and white text filtering) is ineffective against the nuance of tool use and agentic workflows.

- System Safety focuses on validating state changes, tool execution, and external API calls before they happen.

- The "Middle Ground" exists where a command seems benign in text but destructive in execution; this requires simulation and dry-run steps.

- Security is a Protocol, not a Product. It requires strict JSON schema validation and permission boundaries.

- Defense in Depth—using validation layers at the prompt, tool, and runtime levels—is the only viable strategy.

- Latency is the enemy, but better safety monitoring tools are making it easier to balance speed and security.

🚀 Future Outlook: The Next 24 Months

Looking ahead, the landscape of AI safety will likely settle into two distinct camps: the "Soft Guardrails" and the "Hard Bridges."

Within the next 12-24 months, we will likely see the rise of Standardized Safety Certifications for Agentic Workflows. Just as we have SOC2 for cloud infrastructure, we will have certifications for AI Agents that verify their validation protocols, their sandboxing strategies, and their logging capabilities. Companies selling AI agents will find it nearly impossible to sell to Fortune 500s without passing these harsh systemic safety audits.

Furthermore, we will see the integration of FDA-style "Monocle" safety checks into daily operations. In the medical example earlier, this might mean the AI learns from the outcomes of previous prescriptions (if a patient suffered an allergic reaction, the AI flags the antihistamine in any future query). This moves AI safety from a binary "pass/fail" (did the model comply with the policy?) to a behavioral feedback loop (is the model adhering to real-world physical safety?).

Artificial General Intelligence (AGI)-adjacent pushes will force us to solve the synchronization problem. When an AI can reason across a network of hundreds of services, the attack surface grows exponentially. We will need to move from "Network Level Security" to "Agent Level Security," embedding cryptographic keys and trust proofs into every single action an AI takes so that the system can verify "Did this specific instance of the model perform this action?" vs. "Did a rogue instance perform this action?"
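
The question "did this specific instance perform this action?" can be approached by having each agent instance sign its actions with its own key. The sketch below uses HMAC-SHA256 from the Python standard library; the key handling, instance IDs, and action format are assumptions. Because HMAC is symmetric, the verifier must hold the same key, so a real deployment would more likely use asymmetric signatures and add a nonce or timestamp to prevent replay.

```python
import hashlib
import hmac
import json
import secrets


def sign_action(instance_key: bytes, instance_id: str, action: dict) -> str:
    """Sign a canonical JSON encoding of the action with the instance's key."""
    payload = json.dumps({"instance": instance_id, "action": action}, sort_keys=True).encode()
    return hmac.new(instance_key, payload, hashlib.sha256).hexdigest()


def verify_action(instance_key: bytes, instance_id: str, action: dict, signature: str) -> bool:
    """Recompute the signature and compare in constant time."""
    expected = sign_action(instance_key, instance_id, action)
    return hmac.compare_digest(expected, signature)


trusted_key = secrets.token_bytes(32)   # provisioned to the legitimate instance
rogue_key = secrets.token_bytes(32)     # a rogue instance has a different key

action = {"tool": "send_email", "to": "ops@example.com"}
good_signature = sign_action(trusted_key, "agent-7", action)
rogue_signature = sign_action(rogue_key, "agent-7", action)

print(verify_action(trusted_key, "agent-7", action, good_signature))
print(verify_action(trusted_key, "agent-7", action, rogue_signature))
```

A rogue instance can claim any instance ID it likes, but without the key it cannot produce a signature that verifies.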

Finally, we will see regulation force the transparency of "Reasoning Chaining." Regulators will demand that when an AI makes a decision that affects a user's life (financial, health), the AI must expose the chain of logic it used to reach that conclusion, and that logic must be auditable. This will kill the "Magic Brain" fantasy and force responsibility back onto the engineering stack.

❓ FAQ

❓ Why is model safety no longer enough with GPT-5.5?

Model safety (content filtering) fails because it only inspects the text bytes generated by the AI. GPT-5.5 operates as a system that consumes text, interprets intent, and executes actions via tools. An action can be harmful even if the text preceding it was perfectly safe. Therefore, you need to filter the actions, not just the words.

❓ What is the "middle ground" vs model safety?

The "middle ground" refers to technical nuances where a user prompt looks innocent or logical to a human, but the execution of the task results in damage. A model might write a script to "document a process" (safe text), but the script actually drops the master database (dangerous action). This sits between static text safety (which fails on the action) and direct human oversight (which is too slow). It requires automated simulation to solve.

❓ How do I implement system safety for my existing LLM application?

You need to introduce a middleware orchestration layer between the user interface and the LLM response.

- Input Validation: Parse the user intent.

- Plan Generation: Ask the LLM for a step-by-step plan.

- Safety Simulation: Run the plan in a "Dry Run" state if the plan involves files/network.

- Approval: Require human or automated sign-off on the plan before execution.

- Output Sanitization: Ensure the final response cannot trigger the system's own safety traps.

❓ What is "Defense in Depth" in AI safety?

Defense in Depth is a cybersecurity strategy that stacks multiple independent layers of protection; here, three or more. The first layer might be a system prompt instruction ("Don't do crazy things"). The second layer is a regex check on the generated code. The third is a dynamic runtime check that ensures the code execution has no shell access. If one layer is bypassed, the others still protect the system.

❓ How does latency affect system safety performance?

Implementing safety layers (like simulating state changes or running dry-runs) always adds latency. The trade-off is that higher latency is often commercially acceptable compared to the risk of a catastrophic system breach. However, modern performance optimization focuses on "predictive safety": using smaller, faster models to estimate safety risks before the heavy, expensive model processes the full intent, allowing you to fail fast and at low cost.

🎬 Conclusion

The transition from GPT-4 to GPT-5.5 is less about an upgrade in intelligence and more about a fundamental upgrade in capability and autonomy. As AI models move from "talking" to "doing," the responsibilities of technical leadership shift from Content Moderation to System Architecture.

The "Old Playbook" of slapping filters on text is bankrupt; it cannot handle the complexity of a world where AI can read your emails, query your databases, and control your infrastructure. The winners in this new era will be the engineers who build resilient systems—architectures that are robust enough to allow intelligent automation while remaining small enough to be perfectly controlled.

At BitAI, we believe this shift is the most exciting engineering challenge of the decade. If you are ready to stop fighting noise and start building safety, subscribe to our newsletter for deep dives into the architecture that will build the future.
