
The New Backend Engineer Role: Why You Must Review AI Code for Technical Debt

🚀 Quick Answer

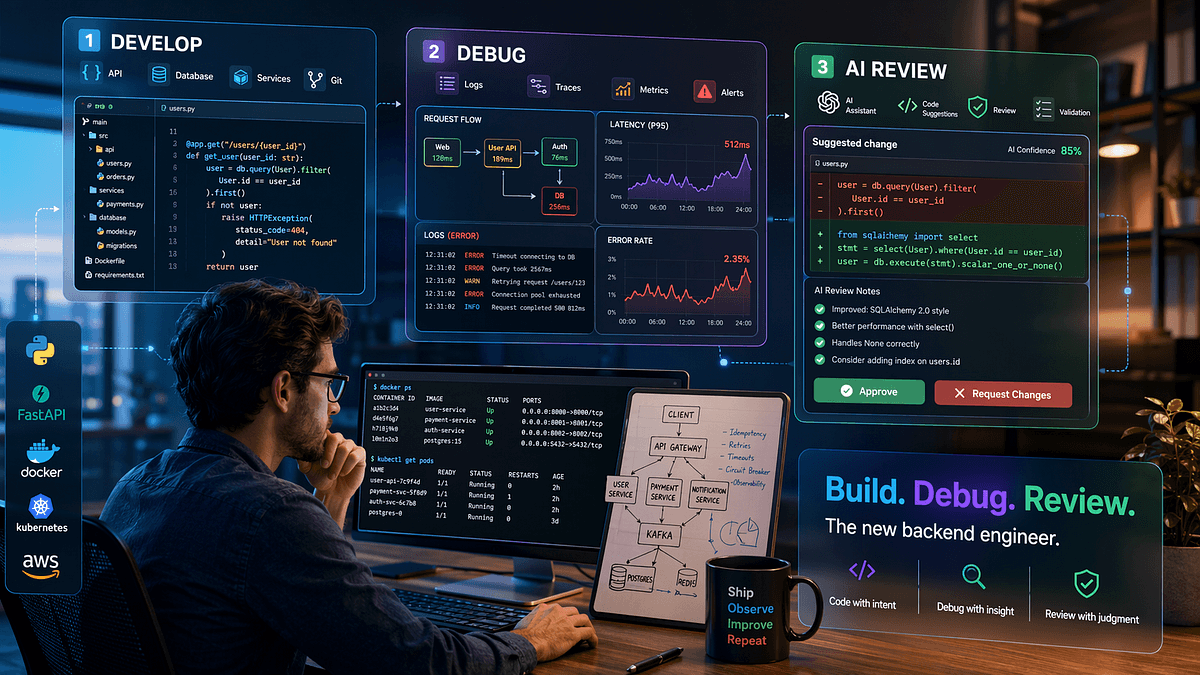

- The Hybrid Role: The modern backend engineer must act as a builder, debugger, and AI code reviewer simultaneously.

- Shift in Priority: Technical skills are no longer about syntax; they are about intent verification and production safety.

- The Problem: Over 84% of developers now use AI, but a massive gap remains between "correct syntax" and "production-ready stability," leading to technical debt.

- The Solution: Engineers must implement manual review protocols and understand LLM-generated architecture before deployment.

🎯 Introduction

The modern backend engineer job description isn't what it used to be.

Gone are the days when your worth was determined solely by how fast you wrote a REST endpoint or how proficient you were in a specific ORM. In the current market, the engineer who succeeds isn't just the one who builds systems, but the one who curates them.

The new backend engineer is a hybrid role requiring unique technical depth, specifically in automation and AI code review. We are seeing a shift in developer workflows where architectural competence is tested against the durability of AI-generated solutions. If you aren't trained to audit Large Language Model (LLM) outputs for subtle logic flaws, you are already falling behind. This isn't just about adopting tools; it's about preserving system integrity in an era where "working code" is being written at machine speed.

🧠 Core Explanation

Traditionally, backend engineering was a linear process: Design → Code → Test → Ship.

Today, that pipeline has fractured into a complex web of human oversight and machine generation. The core explanation for this shift is the arrival of Generative AI in source code editors.

We are moving from "writing code" to "orchestrating code." The modern backend engineer's value proposition has shifted from creation to judgment.

When an LLM generates a database schema or a complex Python async function, it often prioritizes correctness on the happy path over resilience. This is where the engineer's role changes fundamentally. You are no longer just writing SQL queries; you are acting as a safety inspector. You have to examine code that looks confident and has zero syntax errors but might still leak memory or open a security vulnerability (such as passing unsanitized user input into a prompt, which enables prompt injection). The modern engineer acts as a filter, ensuring that the raw creativity of the AI is tempered by the rigidity of real-world constraints.
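To make the correctness-versus-resilience gap concrete, here is a minimal sketch. The `slow_dependency` coroutine is a hypothetical stand-in for any downstream call, and the 50 ms timeout is an arbitrary value chosen for illustration. Both fetch functions are syntactically valid and return the right answer on the happy path; only one of them bounds how long it waits.

```python
import asyncio


async def slow_dependency(delay: float) -> str:
    """Hypothetical stand-in for a downstream call (database, HTTP API)."""
    await asyncio.sleep(delay)
    return "ok"


async def fetch_naive(delay: float) -> str:
    # Correct on the happy path, but it waits for as long as the
    # dependency takes, so one slow call can stall the caller.
    return await slow_dependency(delay)


async def fetch_resilient(delay: float, timeout: float = 0.05) -> str:
    # Same logic with a bounded wait and an explicit fallback value.
    try:
        return await asyncio.wait_for(slow_dependency(delay), timeout=timeout)
    except asyncio.TimeoutError:
        return "fallback"


def run_demo() -> tuple[str, str]:
    fast = asyncio.run(fetch_resilient(0.0))  # dependency answers in time
    slow = asyncio.run(fetch_resilient(0.2))  # dependency exceeds the timeout
    return fast, slow
```

A reviewer looking only at syntax would approve both versions. The difference only shows up when the dependency is slow, which is the kind of condition a happy-path unit test rarely exercises.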

🔥 Contrarian Insight

"AI won't replace backend engineers, but engineers who treat AI as a semi-colon will."

This is the critical trap. Most developers fear AI will take their job because they were trained to be syntax and style enforcers (for example, rejecting code because it didn't strictly follow the company style guide). AI breaks this monopoly on syntax.

The real threat isn't a few lines of C++ or Go. It is entropy. AI generates code that works on isolated test cases but fails under load. If you are relying on AI to maintain the "back office" logic of your system without a human understanding of the data flow, you aren't engineering; you are tending a garden of bugs you cannot identify.

🔍 Deep Dive / Details

The Frustration with "Almost Right" Code

According to recent industry trends, developer productivity has jumped, but operational stability is flattening out or even declining. The "LLM hallucination" problem isn't just about hallucinating facts (like dates in a webhook payload); it's about hallucinating correctness.

Architectural Risks Introduced by AI:

- Context Window Overload: When the relevant code falls outside the model's context, an LLM can easily generate recursive functions or loops with no termination guard, draining system resources if left unchecked.

- Dependency Bloat: Generators love third-party libraries. Each extra dependency widens the attack surface and can increase compliance exposure (such as GDPR, if a library ships data to a third party) and vendor lock-in compared with writing native logic.

- Lack of Security Context: LLMs do not inherently understand your specific security policy or your SAST warnings. They need a human operator to interpret these.
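The dependency-bloat risk above is one of the easier ones to check mechanically. The following is a rough sketch, not a complete supply-chain audit: it parses generated source with Python's `ast` module and reports top-level imports that are not on an approved list. The library names `leftpadlib` and `fancy_http` and the contents of `APPROVED` are invented for illustration.

```python
import ast


def imported_modules(source: str) -> set[str]:
    """Return the top-level module names imported by a piece of source code."""
    modules: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            modules.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 skips relative imports, which are project-internal.
            modules.add(node.module.split(".")[0])
    return modules


def unapproved_imports(source: str, approved: set[str]) -> set[str]:
    """Imports that a human has not yet signed off on."""
    return imported_modules(source) - approved


# Hypothetical AI-generated snippet; the third-party names are made up.
GENERATED = """
import json
import leftpadlib
from os import path
from fancy_http.client import get
"""

APPROVED = {"json", "os"}  # assumed team allow-list

flagged = unapproved_imports(GENERATED, APPROVED)
```

Here `flagged` contains `leftpadlib` and `fancy_http`, which gives the reviewer a short list of libraries to justify or replace before the code is merged.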

In Real-World Usage...

A legacy system built in 2019 is easy to touch up because it has established patterns. An API generated by GPT-4 for a microservice built in 2024 might look syntactically perfect yet violate the specific idempotency rules of your payment gateway. The hybrid engineer must be comfortable debugging latency spikes that don't show up in unit tests, for example because the AI added an HTTP call to a third-party API with 50 ms of jitter, breaking the latency consistency you had observed.
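As an illustration of the idempotency rule mentioned above, here is a minimal in-memory sketch. `PaymentService`, its field names, and the key format are all hypothetical, and a real service would need a durable store and concurrency control. The point is the property a reviewer should look for: retrying a request with the same idempotency key must not charge twice.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentService:
    """Hypothetical in-memory service; real systems need a durable store."""

    _results: dict[str, dict] = field(default_factory=dict)
    charges_made: int = 0

    def charge(self, idempotency_key: str, amount_cents: int) -> dict:
        # If this key was already processed, replay the stored result
        # instead of charging again.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.charges_made += 1
        result = {"charge_id": self.charges_made, "amount_cents": amount_cents}
        self._results[idempotency_key] = result
        return result


service = PaymentService()
first = service.charge("order-123", 500)
retry = service.charge("order-123", 500)  # e.g. a client retry after a timeout
```

Generated handlers often omit the lookup at the top of `charge`, and nothing in a single-call unit test will reveal that. A test that sends the same request twice will.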

🧑‍💻 Practical Value: The "Guardrail" Workflow

You cannot review AI output blindly. You need a workflow.

Step 1: Audit the Architecture Before Code Generation. Don't just ask the AI to "write a backend service." Ask it to "design a backend service that handles 10k RPS with eventual consistency." If you don't define the constraints up front, the AI will give you the simplest, lowest-effort solution, which usually fails at scale.

Step 2: Implement "LLM Detective" Rules. After the code is generated, don't run tests immediately. Run an audit first. Specifically, look for:
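One way to make Step 1 repeatable is to keep the constraints in a structure and render the prompt from it, so they are never forgotten. This is only a sketch: `ServiceConstraints`, `build_prompt`, and the exact prompt wording are assumptions for illustration, not a recommended template.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceConstraints:
    """Hypothetical container for the non-functional requirements."""

    target_rps: int
    consistency: str
    extra: tuple[str, ...] = ()


def build_prompt(task: str, constraints: ServiceConstraints) -> str:
    """Render a design prompt that states the constraints explicitly."""
    lines = [
        f"Design {task}.",
        "Constraints:",
        f"- Sustain {constraints.target_rps} requests per second.",
        f"- Consistency model: {constraints.consistency}.",
    ]
    lines.extend(f"- {item}" for item in constraints.extra)
    lines.append("State every assumption you make before writing code.")
    return "\n".join(lines)


prompt = build_prompt(
    "a backend service",
    ServiceConstraints(
        target_rps=10_000,
        consistency="eventual consistency",
        extra=("No new third-party dependencies.",),
    ),
)
```

Keeping the constraints in code also gives the reviewer a checklist to compare the generated design against.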

- Hardcoded Secrets: (Basic, but AI often forgets to replace placeholders).

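- Race Conditions and Unhandled Errors: Check that async code guards shared state and handles rejected or failed tasks instead of dropping them silently.

- SQL Injection: If the code queries a database, ensure the AI used parameterized queries rather than building SQL through string concatenation.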
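Two of the checks above (hardcoded secrets and SQL built by string formatting) can be partly automated. The sketch below is a deliberately small heuristic built on Python's `ast` module, not a substitute for a real SAST tool. The name pattern, the `audit` function, and the sample snippet are all illustrative assumptions. Race conditions are not covered here, since they generally need human review and concurrency tests.

```python
import ast
import re

# Heuristic: variable names that suggest a credential.
SECRET_NAME = re.compile(r"(secret|password|passwd|token|api_?key)", re.IGNORECASE)


def audit(source: str) -> list[str]:
    """Return human-readable findings for two simple review rules."""
    findings: list[str] = []
    for node in ast.walk(ast.parse(source)):
        # Rule 1: a non-empty string literal assigned to a secret-looking name.
        if isinstance(node, ast.Assign) and isinstance(node.value, ast.Constant):
            if isinstance(node.value.value, str) and node.value.value:
                for target in node.targets:
                    if isinstance(target, ast.Name) and SECRET_NAME.search(target.id):
                        findings.append(
                            f"line {node.lineno}: hardcoded secret in '{target.id}'"
                        )
        # Rule 2: .execute() whose first argument is an f-string or a
        # binary operation (concatenation or % formatting).
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "execute"
            and node.args
            and isinstance(node.args[0], (ast.JoinedStr, ast.BinOp))
        ):
            findings.append(f"line {node.lineno}: SQL built by string formatting")
    return findings


# Hypothetical AI-generated snippet to audit.
GENERATED = '''
API_KEY = "sk-placeholder"
def find_user(cursor, name):
    cursor.execute(f"SELECT * FROM users WHERE name = '{name}'")
def find_user_safe(cursor, name):
    cursor.execute("SELECT * FROM users WHERE name = ?", (name,))
'''

findings = audit(GENERATED)
```

The audit flags the placeholder key and the f-string query, and leaves the parameterized version alone. Heuristics like this produce false positives and miss indirect cases (for example, a query string built on one line and executed on another), so treat the output as a prompt for human review.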

Step 3: Unit Test Injection. Write "adversarial tests." Ask the AI: "Generate a unit test for this function that tries to crash it." If the AI's code can't handle the edge cases the AI itself defined (self-referential testing), reject the code immediately.
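An adversarial test in this sense might look like the sketch below. The function under review, `parse_page_size`, and the list of hostile inputs are invented for illustration; the pattern is what matters: feed the function inputs designed to break it and assert that it never raises and always stays within its documented bounds.

```python
import unittest


def parse_page_size(raw, default: int = 20, maximum: int = 100) -> int:
    """Hypothetical function under review: parse a page-size query parameter."""
    try:
        value = int(raw)
    except (TypeError, ValueError):
        return default
    if value < 1:
        return default
    return min(value, maximum)


class AdversarialPageSizeTests(unittest.TestCase):
    # Inputs chosen to provoke crashes or out-of-range results.
    HOSTILE_INPUTS = [
        "", " ", "abc", "-1", "0", "1e9", "9" * 400, None, "10; DROP TABLE users",
    ]

    def test_never_raises_and_stays_in_bounds(self):
        for raw in self.HOSTILE_INPUTS:
            with self.subTest(raw=raw):
                result = parse_page_size(raw)
                self.assertGreaterEqual(result, 1)
                self.assertLessEqual(result, 100)
```

Saved to a file, this can be run with `python -m unittest`. If a generated function fails a suite like this, that is a cheap and early signal to reject it.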

⚔️ Comparison: The Old vs. New Engineer

| Feature | Traditional Backend Engineer | The New Hybrid Engineer (2026) |
|---|---|---|
| Primary Goal | Speed & Syntax: Fast feature delivery. | Judgment & Safety: Preventing technical debt. |
| Tooling | IDEs, GitHub, Postman. | LLMs, RAG Systems, Observability Tools. |
| Upside | High velocity in greenfield projects. | High stability, lower maintenance costs. |
| The "Catch" | Knowledge silos. | Cognitive load & demand for abstract reasoning. |

⚡ Key Takeaways

- The modern backend engineer must shift focus from writing code to auditing AI-generated code.

- AI outputs are prone to architectural shortcuts that cause performance and security issues.

- Technical debt becomes a leading risk of using Generative AI when verification steps are skipped.

- Success requires understanding system design to catch holes in AI logic.

🔗 Related Topics

- How to Audit LLM Code Outputs for Production Safety

- Managing Technical Debt in AI-Driven Development

- System Design for High-Concurrency Backend Services

- Serverless Architecture Trade-offs for Developers

- Why Tracing is Critical in Modern Microservices

- AI Prompt Engineering for Code Generation

🔮 Future Scope

We are entering the era of "AI-Native Engineering". The backend engineer won't write code line-by-line; they will write intelligence. The tools that embed verifiers, real-time syntax checkers, and regression test runners directly into the generation pipeline are just emerging. Your role is to sit between the Python script and the server rack, ensuring the "intelligence" flowing through your pipes actually means something.

❓ FAQ

Q: Is the backend engineer role evolving into automation? A: Partially. The clerical part of writing boilerplate (APIs, CRUDs) is being automated. The human role is evolving into system design and verification.

Q: How does AI code generation increase technical debt? A: AI prioritizes code that works now over code that stays maintainable. It often selects new, obscure libraries (creating dependency debt) or writes "clever" but hard-to-decipher logic (creating comprehension debt).

Q: Do I need to know how AI models work to be a modern backend engineer? A: You don't need to know the math, but you MUST understand the constraints. You need to know that an AI has limited context and can hallucinate, which fundamentally changes how you scope its tasks and review the backend schemas it produces.

🎯 Conclusion

The backend engineer of today is fundamentally different from the backend engineer of the past. You are no longer just a translator of business logic into code; you are the custodian of your system's sanity. By embracing the "Three-Part Role" (Developer, Debugger, and AI Reviewer), you ensure that scalability doesn't come at the cost of reliability. Maintain your judgment, and you remain irreplaceable in the AI age.
