
🤖 The Sovereign AI Revolution: Inside Mozilla's Thunderbolt Strategy

For the last two years, the global software narrative has been dominated by a singular, seductive promise: the democratization of artificial intelligence, delivered to the palm of your hand. From smartphone notifications that summarize your emails to cloud-native assistants that code entire applications, the AI era has been defined by centralized, cloud-hosted services. Yet, as we look ahead from the vantage point of 2026, the narrative is starting to fracture. The honeymoon period with centralized, cloud-based Large Language Models (LLMs) is ending, replaced by a chorus of concerns about data privacy, exorbitant API costs, and vendor lock-in.

Enter Mozilla—once the undisputed champion of the open web—and its latest disruptive entrant into the tech heavyweight circle: Thunderbolt.

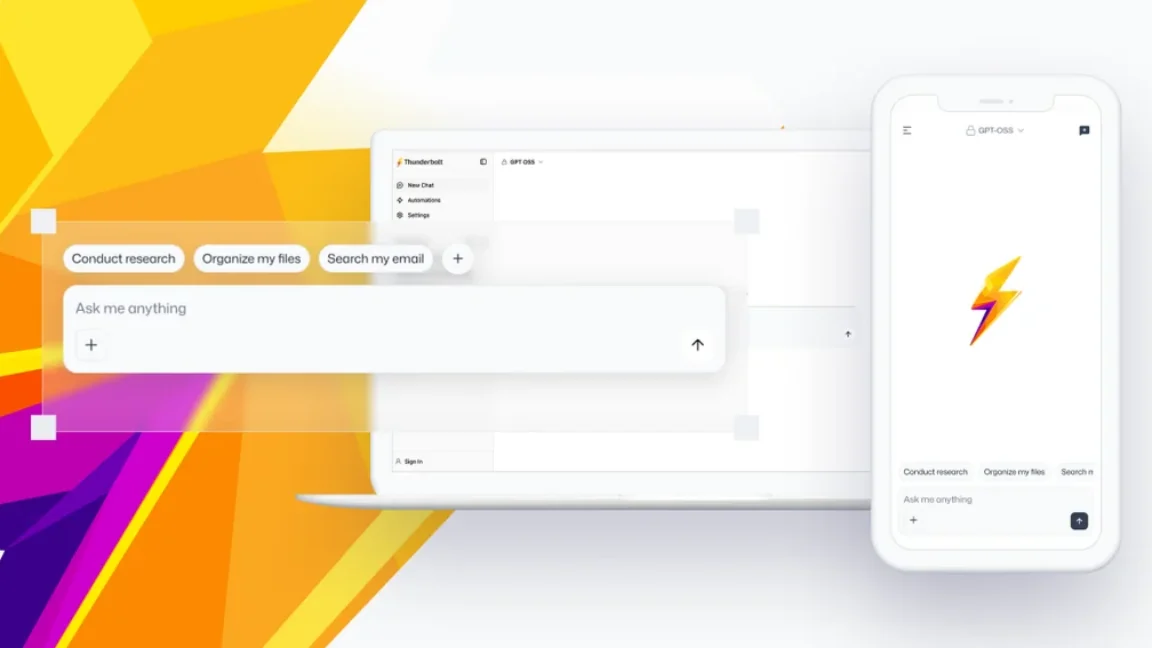

It is not a model. It is not a singular foundation. Instead, Mozilla has unveiled a sophisticated, modular interface designed to decouple the user experience from the underlying silicon. Thunderbolt is positioned as a client for the future: a "sovereign AI client" that empowers enterprises and power users to run their own intelligent pipelines without surrendering their data to third-party databases. In an industry drowning in proprietary black boxes, Thunderbolt offers a breath of clean air. This isn’t just about browsing the web anymore; it is about controlling the architecture of intelligence itself.

TL;DR: Mozilla’s new Thunderbolt client represents a paradigm shift away from centralized SaaS models toward self-hosted, sovereign infrastructure. Built on the Haystack framework, it allows enterprises to run agentic workflows locally while maintaining strict control over data and costs, tackling the "black box" failure of modern AI.

💡 Why This Matters Right Now

We are currently witnessing a bifurcation in the artificial intelligence market that mirrors the early days of the internet: the rise of the "Platform" AI and the rise of the "Sovereign" AI. For years, everything moved to centralized platforms—Amazon Web Services, Google, Microsoft Azure. We saw the same dynamics in search and social media. The convenience of centralized models led to a massive over-reliance on APIs like OpenAI’s GPT-4 and Anthropic’s Claude. However, as of 2026, the "latency tax" and the "data premium" have become too expensive for industries like healthcare, finance, and government.

Self-hosted AI infrastructure matters most for industries bound by compliance, where it is often the only viable path. When a pharmaceutical company uses an AI to analyze drug interactions, regulatory and contractual obligations frequently bar it from sending that proprietary chemical data to a public cloud provider. Thunderbolt addresses this by acting as the pivot point. Because it is built on the Haystack framework, which lets users choose their own components, it removes the vendor lock-in that has plagued the sector. The "Why Now" is the maturation of open-source models: models like DeepSeek and Llama 3 are now capable enough that running them locally is not just viable, but preferable for specific domain tasks.

🏗️ Deep Technical Dive into Thunderbolt's Architecture

To understand the magnitude of Thunderbird’s new sibling, we must look under the hood. Thunderbolt is not a simple GUI wrapper around a chatbot; it is a deep integration into the AI operational stack. At its core, it relies on Haystack, a robust open-source framework for composing LLM pipelines out of modular components. Let’s dissect the critical layers of this architecture.

🧠 The Haystack Foundation: The Modular Brain

Think of Haystack as the plumbing system for AI intelligence. In the past, if you wanted to build an AI system that could read a PDF, summarize it, and then query a database for specific figures, you had to stitch together disparate scripts or beg a vendor for a custom integration. Haystack allows developers to build modular AI pipelines—pipelines that consist of retrievers, readers, and generators—using Python.

Thunderbolt acts as the high-fidelity dashboard for this plumbing. It handles the orchestration, user input streaming, and output rendering, allowing developers to focus on the pipeline logic itself. This modularity is a feature, not a bug. It ensures agility. When a new state-of-the-art model is released tomorrow, enterprise users do not have to wait for an upstream update; they can swap out the "top" of the pipeline—the generator component—without rebuilding the entire stack.
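To make the "swap the generator" idea concrete, here is a minimal sketch in plain Python. It deliberately does not use the real Haystack API; the `Pipeline` class, the two stand-in generators, and the `DOCS` list are all hypothetical names invented for illustration. The point is only that the retriever stays untouched while the generator is replaced.

```python
from dataclasses import dataclass
from typing import Callable, List

# A "component" here is just a function. This is an illustrative stand-in,
# not how Haystack itself defines components.
Retriever = Callable[[str], List[str]]
Generator = Callable[[str, List[str]], str]


@dataclass
class Pipeline:
    retriever: Retriever
    generator: Generator

    def run(self, query: str) -> str:
        docs = self.retriever(query)
        return self.generator(query, docs)


# Hypothetical document store for the example.
DOCS = [
    "Thunderbolt is a client, not a model.",
    "Haystack composes retrievers and generators.",
]


def keyword_retriever(query: str) -> List[str]:
    """Return documents that share at least one lowercase word with the query."""
    words = set(query.lower().split())
    return [d for d in DOCS if words & set(d.lower().rstrip(".").split())]


def model_a(query: str, docs: List[str]) -> str:
    """Stand-in for the currently deployed local model."""
    return f"[model-a] {' '.join(docs)}"


def model_b(query: str, docs: List[str]) -> str:
    """Stand-in for a newer model swapped in later."""
    return f"[model-b] {' '.join(docs).upper()}"


pipeline = Pipeline(retriever=keyword_retriever, generator=model_a)
first = pipeline.run("haystack")

# Swap only the generator; the retriever and the rest of the stack are unchanged.
pipeline.generator = model_b
second = pipeline.run("haystack")
```

In a real deployment the generator would wrap an inference endpoint rather than a string formatter, but the shape of the swap is the same.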

🔌 The ACP Agent Protocol: The Language of Agents

One of the most fascinating technical components of Thunderbolt is its support for the Agent Communication Protocol (ACP). In the current AI landscape, "agents"—AI that performs autonomous tasks—are the next big step beyond chatbots. However, these agents often speak very different "dialects." A legal agent might use JSON for claims, while a marketing agent might use Markdown.

ACP acts as the universal translator. It facilitates seamless communication between different agents. If you ask Thunderbolt to "research and summarize this," it can unleash a "Research Agent" (for browsing/internal web) and a "Summarizer Agent" (for text processing) that work in concert without collisions. This interoperability is vital for the "cross-device workflows" mentioned by Mozilla, allowing a workflow initiated on a Mac to finish its calculations on a local Linux server without data fragmentation.
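The "universal translator" idea can be sketched as a shared message envelope that labels each payload with its content type. To be clear, this is not the actual ACP wire format; `AgentMessage`, its fields, and both agent functions are hypothetical and exist only to show how a JSON-producing agent and a Markdown-producing agent could hand work to each other without guessing formats.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class AgentMessage:
    """Hypothetical envelope for illustration; NOT the real ACP schema."""
    sender: str
    recipient: str
    content_type: str  # e.g. "application/json" or "text/markdown"
    body: str

    def to_wire(self) -> str:
        # Serialize the whole envelope so any agent can parse it the same way.
        return json.dumps(asdict(self), sort_keys=True)

    @staticmethod
    def from_wire(raw: str) -> "AgentMessage":
        return AgentMessage(**json.loads(raw))


def research_agent(task: str) -> AgentMessage:
    """Stand-in research agent that emits structured JSON findings."""
    findings = {"task": task, "facts": ["fact one", "fact two"]}
    return AgentMessage("research", "summarizer", "application/json", json.dumps(findings))


def summarizer_agent(msg: AgentMessage) -> AgentMessage:
    """Stand-in summarizer that reads JSON and replies in Markdown."""
    if msg.content_type != "application/json":
        raise ValueError(f"unsupported content type: {msg.content_type}")
    facts = json.loads(msg.body)["facts"]
    summary = "\n".join(f"- {fact}" for fact in facts)
    return AgentMessage("summarizer", "client", "text/markdown", summary)


wire = research_agent("research and summarize this").to_wire()
reply = summarizer_agent(AgentMessage.from_wire(wire))
```

Because the envelope declares its content type, the receiving agent can reject or convert a payload explicitly instead of failing halfway through parsing.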

💾 SQLite as the Local Source of Truth

The architecture leans heavily on local storage. The cloud offers near-limitless scalability, but at the cost of direct control over where your data lives. Thunderbolt treats an offline SQLite database as the authoritative source for enterprise data. This creates an interesting interplay between local caching and remote retrieval.

When a user poses a query, Thunderbolt’s retrieval system first queries the local SQLite index. If the answer is not found, it triggers a remote call using OpenAI-compatible APIs (like DeepSeek or OpenCode). This hybrid approach means the "source of truth" for sensitive proprietary data remains strictly on-premise, while general knowledge is fetched from remote models. It eases the "black box" problem because, unlike a cloud assistant with browsing enabled, no context window is pre-filled with proprietary trade secrets before the remote query is sent.
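The local-first lookup described above can be sketched with Python's built-in `sqlite3` module. This is an assumption-laden illustration, not Thunderbolt's actual schema or code: the `notes` table, the `answer` function, and the `fake_remote` stub (which stands in for an OpenAI-compatible API call) are all hypothetical.

```python
import sqlite3
from typing import Callable, Tuple


def build_local_index() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical 'notes' table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE notes (topic TEXT PRIMARY KEY, answer TEXT)")
    conn.execute(
        "INSERT INTO notes VALUES (?, ?)",
        ("q3 revenue", "Internal figure: see finance ledger."),
    )
    conn.commit()
    return conn


def answer(
    conn: sqlite3.Connection, query: str, remote: Callable[[str], str]
) -> Tuple[str, str]:
    """Check the local index first; fall back to the remote model on a miss."""
    row = conn.execute(
        "SELECT answer FROM notes WHERE topic = ?", (query.strip().lower(),)
    ).fetchone()
    if row is not None:
        return ("local", row[0])
    # Only the query text is forwarded; no local rows are attached to it.
    return ("remote", remote(query))


def fake_remote(query: str) -> str:
    """Stub standing in for a call to a remote OpenAI-compatible endpoint."""
    return f"(remote answer for: {query})"


conn = build_local_index()
local_hit = answer(conn, "Q3 Revenue", fake_remote)
remote_hit = answer(conn, "capital of France", fake_remote)
```

A production retriever would use full-text or vector search rather than an exact key match, but the control flow (local hit, else remote) is the part that keeps proprietary rows on-premise.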

🧱 Architecture Diagram (Conceptual)

Here is a simplified representation of how the data flows through the Thunderbolt client:

    graph TD
        A[User Input] -->|Chat Interface| B(Thunderbolt Client React App)
        B -->|Context/Query| C{Asking the Model?}
        C -->|Locally Available| D["SQLite DB (Source of Truth)"]
        C -->|Not Found/General| E[Haystack Pipeline Manager]
        E -->|Route| F{OpenAI-Compatible API?}
        F -->|Self-Hosted Llama/Mistral| G(Local GPU/TPU Inference Engine)
        F -->|Remote Public| H[Cloud Provider / DeepSeek]
        D --> I[Local Inference Engine]
        G -->|Response| B
        H -->|Response| B
        I -->|Contextual Response| B

📊 Real-World Applications & Case Studies

The theoretical benefits of sovereign AI must be grounded in utility. Here is how Thunderbolt’s architecture could unseat legacy players in sensitive sectors.

🏢 Case Study: The "Black Box" Audit

Consider a multinational law firm. Traditionally, they would have to use tools like ChatGPT Enterprise to analyze contracts, worrying about the possibility—however remote—of their deposition data leaking into the training set. With Thunderbolt integrated into their local infrastructure, the firm can configure an agent to perform "eDiscovery."

The agent accesses the local SQLite repository containing only redacted or anonymized contracts. The agent can then cross-reference clauses against a local database of 2026 legislative updates. Because the data never leaves the firm's local network, the exposure risk drops sharply. This is not just a feature; it is a competitive moat. The firm can now use LLMs to handle hundreds of thousands of legal documents in hours rather than weeks, all while adhering to GDPR and regional data sovereignty laws.

💊 Case Study: Adjunct Pharmaceutical Development

In biotech, speed is currency. Researchers rely on parsing vast amounts of lab notes written in fragmented, messy formats. Cloud AI tools are often too generic; they don't possess the specific domain knowledge needed to synthesize protein structures.

Using Thunderbolt’s "Search and Automation" workflows, a lab could stream their raw experimental data directly into a local pipeline. A locally run model (like a distilled version of a protein folding model) could ingest this data and provide real-time feedback on reaction viability. This workflow ensures that cutting-edge intellectual property, potentially worth billions, is never exposed to a third-party API. The tool avoids network round trips because the inference happens on local hardware, and it is safer because the data never leaves the firm's own systems.

🌐 Cross-Device Workflow Orchestration

The true power of Thunderbolt reveals itself in cross-device workflows. Imagine a developer working on a MacBook Pro for coding and a high-performance Linux workstation for compilation. Currently, context is lost when switching interfaces. With Thunderbolt, a "context window" can be maintained as an encrypted state across devices. If a developer documents a bug on their phone, the Thunderbolt client on the Linux workstation sees the update, accesses the local codebase (via SQLite), and continues the debugging chain. This creates a fluid, continuous thread of agency that asynchronous email or Slack does not provide.

⚡ Performance, Trade-offs & Best Practices

Migrating to a sovereign architecture requires sacrifice. It is not magic; it requires hardware and strategy. There are significant trade-offs to consider before dumping AWS for a local GPU rack.

📊 The Hardware Reality Check

The primary trade-off is performance vs. privacy. Public cloud APIs serve heavily optimized models on massive GPUs (H100s/A100s) located in data centers with 100Gbps fiber backbones. A local inference engine running on consumer-grade hardware (like an RTX 4090 or Apple silicon) will be significantly slower and less capable of handling massive context windows.

- Latency: Local inference can be slower than real-time streaming APIs.

- Token Limits: You are bound by your local VRAM. You cannot paste a 5-million-token engineering document; in practice the input has to fit a context window of roughly 32k or 128k tokens.

- Expert Tip: "Always implement a tiered response strategy. Configure Thunderbolt to send routine summarization tasks to a cloud API and route only the high-value reasoning and coding tasks to the self-hosted GPU. This balances the load on your local hardware against the speed of cloud APIs."
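The tiered strategy from the tip above can be sketched as a small routing function. Everything here is hypothetical: the task labels, the `Request` type, and especially the `sensitive` flag, which is an added assumption (the tip itself routes only by task type) so that confidential summaries still stay on local hardware.

```python
from dataclasses import dataclass

# Task types the tip reserves for the self-hosted GPU (labels are illustrative).
LOCAL_TASKS = {"reasoning", "coding"}


@dataclass(frozen=True)
class Request:
    task: str                # e.g. "summarization", "reasoning", "coding"
    sensitive: bool = False  # assumption: the caller marks confidential input


def route(req: Request) -> str:
    """Return "local" or "cloud" for a request under the tiered strategy."""
    # Sensitive data stays on-premise no matter how cheap the task is.
    if req.sensitive or req.task in LOCAL_TASKS:
        return "local"
    return "cloud"


routes = [
    route(Request("summarization")),
    route(Request("coding")),
    route(Request("summarization", sensitive=True)),
]
```

Keeping the rule in one function makes the policy easy to audit, which matters more than cleverness when compliance teams need to sign off on what leaves the network.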

🔧 Best Practices for Implementation

- Infrastructure as Code (IaC): Use Terraform or Ansible to script the deployment of your Thunderbolt instance. Standardize the Haystack pipeline configuration so that every node in your organization runs the same "genome" of intelligence.

- API Gateway Governance: Even though you are self-hosting, route all external calls through a local API gateway to enforce safety filters. Thunderbolt can send a request to DeepSeek, but your gateway can scan the outbound prompt for PII (Personally Identifiable Information) before it leaves your network, and screen the response before it lands in the user's chat interface.

- Regular Hygiene: A self-hosted model's knowledge goes stale if it is never refreshed or fine-tuned. Set up automated cron jobs to ingest new enterprise data into your local SQLite database so that retrieval stays current without re-uploading anything to the cloud.
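As a rough illustration of the gateway filter mentioned in the list above, the sketch below redacts two obvious PII patterns from any text that crosses the gateway. The regular expressions, the `redact` helper, and the `gateway_forward` wrapper are hypothetical and far from exhaustive; a real deployment would rely on a dedicated PII detection tool rather than two regexes.

```python
import re
from typing import Callable

# Deliberately simple patterns: email addresses and 3-3-4 digit phone numbers.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(text: str) -> str:
    """Replace matched emails and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def gateway_forward(prompt: str, send: Callable[[str], str]) -> str:
    """Redact the prompt, call the external model, then redact the response."""
    return redact(send(redact(prompt)))


def echo_model(prompt: str) -> str:
    """Stub external model that simply echoes what it received."""
    return f"received: {prompt}"


cleaned = redact("Mail jane.doe@example.com or call 555-123-4567.")
forwarded = gateway_forward("Contact bob@example.org today", echo_model)
```

Applying the same filter in both directions means the external provider never sees the raw identifiers and the chat interface never displays any that come back.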

🔑 Key Takeaways

- 🔓 Decoupling UI from Core: Thunderbolt proves you don't need a massive model to have a great interface. By creating a specialized front-end for open-source components, Mozilla has solved the user experience problem for an otherwise technical market.

- 🛡️ Data Sovereignty is Real: For high-value industries, the risk of data leakage outweighs the cost of cloud computing. Thunderbolt offers a viable on-ramp to the "zero-knowledge" AI era.

- 🧱 Modularity is Key: The shift to Haystack-based pipelines allows enterprises to mix and match models. Why pay for a generalist model when you only need a specialist for SQL?

- 🔌 ACP and Interoperability: The Agent Communication Protocol standardizes how AI agents talk to each other, reducing complexity in building agentic workflows.

- 💾 The Local Source of Truth: Integrating SQLite as the primary data layer ensures that user history and corporate data remain proprietary.

- 🚀 Distribution: With native apps for iOS and Android, plus a Web client, Thunderbolt is positioning itself as the "Mail.app" of the AI age: a utility app used daily but rarely noticed because it just works.

- 💰 Cost Efficiency: While initial hardware investment is high, the model is "pay once, use forever" (within capacity limits), offering a long-term economic hedge against rising API costs.

- 🔐 Security by Default: The focus on device-level access controls and optional E2EE means that IT managers can sleep easy knowing their data isn't floating around the internet.

🚀 Future Outlook: The Decentralized Web Good

Mozilla’s push for Thunderbolt is part of a broader ambition for 2026 and beyond: "Do for AI what we did for the web." The web grew through open protocols like HTTP and HTML, which prevented any single company from owning the internet's infrastructure. AI could lose that openness if the model providers are left unchecked.

In the next 12 to 24 months, we will likely see a watershed moment for edge AI. Thunderbolt’s architecture is perfectly suited to run on edge devices—smartphones and laptops that handle the inference locally rather than sending data to the cloud. As Neural Processing Units (NPUs) become standard in consumer hardware, running a 7B parameter model locally will be as easy as checking email.

Furthermore, we expect to see "Mozilla.ai" evolve into an open-source marketplace. Just as you can download a plugin for Firefox, developers will create "Blueprints" for Thunderbolt—a pre-configured pipeline that sets up a fully optimized "CEO Assistant," "Legal Defense," or "Coding Companion." The distinction between the "client" and the "model" will eventually dissolve, creating a peer-to-peer network of intelligent agents that support each other, rather than a hierarchy of master and servant.

❓ FAQ

Is Mozilla Thunderbolt compatible with every AI model? While Thunderbolt uses an "OpenAI-compatible" API layer to route requests to models like DeepSeek and OpenCode, it is fundamentally built on the Haystack framework, which supports open-source models like Llama 3, Mistral, and Falcon. You can configure it to point to any endpoint that exposes an OpenAI-compatible HTTP interface for text generation.

Does using Thunderbolt require significant technical knowledge to set up? For an individual user, no. The client is designed to be a drop-in application. However, for enterprise production deployment where you are "self-hosting" the infrastructure (running the model inference engine and database locally), a DevOps engineer with experience in Python, Docker, and GPU management is highly recommended.

How does the offline SQLite database handle syncing if data changes? Since the database is local, there is no automatic syncing to the cloud. The "Source of Truth" is local to the device. However, the architecture supports structured export/import formats (likely JSON or SQLite dump). The user or an enterprise admin would manage the distribution of new datasets to other workstations, or work within a distributed local network (LAN) where a master node updates the SQLite databases on connected nodes.

What makes this better than using ChatGPT Enterprise? The primary differentiator is control. With ChatGPT Enterprise, OpenAI states that business data is not used for training by default, but your prompts and documents are still processed and stored on infrastructure you do not operate. With Thunderbolt, you retain 100% ownership of your data. Additionally, self-hosting allows for fine-tuning the model on proprietary company data, a capability that is often limited or expensive via third-party APIs.

Is Thunderbolt free? Mozilla has indicated that the client is open source and free to use for personal and community projects. However, for enterprise clients requiring paid licensing and on-site deployment support, they are facilitating commercial agreements. MZLA Technologies (the company behind Thunderbird) is handling the business side to ensure sustainable operations.

🎬 Conclusion

The era of passive AI consumption is ending, and the era of sovereign agency is dawning. Mozilla’s Thunderbolt is not merely a tool; it is a manifesto for technical independence in the AI age. By focusing on the client—the interface through which intelligence touches our lives—Mozilla has identified the leverage point that large model vendors have ignored: the user experience is only as good as the data pipeline feeding it.

As we stand on the precipice of an AI-driven economy, the ability to define your own rules is the ultimate competitive advantage. Thunderbolt offers the map to that territory, inviting developers and enterprises alike to build the decentralized, open-source AI ecosystem that mirrors the freedom of the original web. The question is no longer can you automate your workflows with AI, but which AI flows through your business. The choice, finally, is yours.

