
What is Claude Dreaming? The Future of Memory in Claude Managed Agents

🚀 Quick Answer

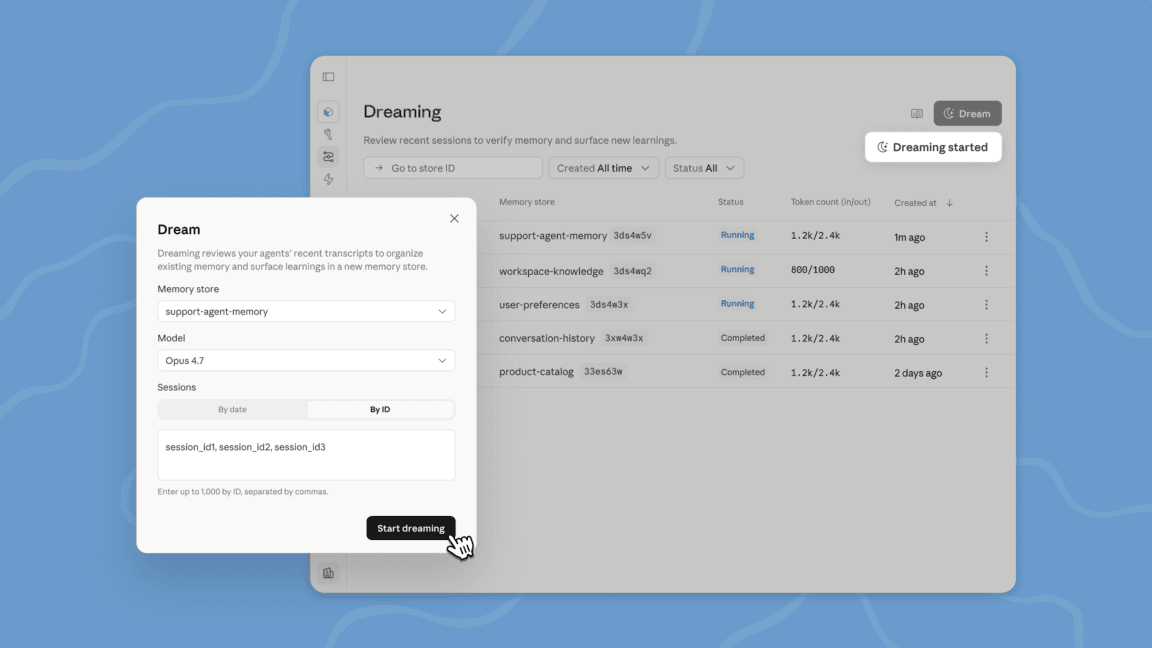

- Claude Dreaming is a research-preview feature for Claude Managed Agents that runs on a schedule, analyzing past sessions to consolidate relevant information into long-term memory.

- It helps Claude Managed Agents work around context-window limits by identifying high-signal patterns across multi-agent workflows.

- Unlike standard chat memory, Dreaming allows multiple agents to share and evolve a unified knowledge base over hours or days of work.

🎯 Introduction

Anthropic just introduced a major upgrade to Claude Managed Agents: the new Claude Dreaming feature. This isn't just another tool; it changes how Claude Managed Agents handle long-term project memory. If you rely on AI agents for complex workflows, it is worth understanding how "Dreaming" lets them evolve and recall critical context weeks after a session started.

In real-world usage, LLMs forget. If a developer gives an agent a file on Monday but doesn't mention it on Tuesday, the agent often loses that context. Claude Dreaming solves this by proactively reviewing sessions to curate a "brain" that survives between conversations.

🧠 Core Explanation

To understand Claude Dreaming, you have to compare it to how current AI models manage memory.

Most Large Language Models (LLMs) operate on a "context window." Think of it as a spotlight: the model can only see the text inside it. Whether it's a chat window or an API call, the model only "holds" what you feed it during the current session.

What is Claude Dreaming? It is a background process that kicks off periodically. During this phase, the system looks at:

- Recent agent sessions.

- The current memory store.

- Past interactions.

It then filters out "low-signal" noise (irrelevant chatter) and structures "high-signal" information into a reusable memory format. This means an agent can learn from mistakes made days ago and apply those lessons to a new task immediately.

This is distinct from standard "compaction," which trims or summarizes older text in the active context to make room for new text. Dreaming instead mines that older text to build a smarter memory layer.
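To make the difference concrete, here is a toy sketch in Python. It is not how Anthropic implements either mechanism: `compact`, `consolidate`, and the "a statement that recurs is high-signal" rule are all assumptions made up for illustration.

```python
from collections import Counter


def compact(messages, max_messages):
    """Compaction (toy version): keep only the newest messages, drop the rest."""
    return messages[-max_messages:]


def consolidate(messages, min_mentions=2):
    """Consolidation (toy version): keep statements that recur.

    Assumption: repetition stands in for "high-signal". A real system
    would use a far richer relevance judgment.
    """
    counts = Counter(m.strip().lower() for m in messages)
    return sorted(text for text, n in counts.items() if n >= min_mentions)


session = [
    "Use TypeScript for new services",
    "What's for lunch?",
    "use typescript for new services",
    "Deploy target is staging",
    "Deploy target is staging",
    "Thanks!",
]

# Compaction keeps whatever is most recent, useful or not.
print(compact(session, 2))

# Consolidation keeps what recurred, regardless of position.
print(consolidate(session))
```

Compaction here keeps "Thanks!" simply because it is recent, while consolidation keeps the TypeScript rule even though it appeared early in the session.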

🔥 Contrarian Insight

"The biggest danger of 'Dreaming' isn't that the agent forgets; it’s that it remembers too much. Human intelligence manages memory by discarding. If Anthropic's Claude Managed Agents retain insignificant patterns or minor preferences just because they appeared in a session, we risk creating 'nostalgic' AI that is bad at new tasks."

Many vendors push "better memory" as a silver bullet. However, effective memory is a sieve, not a bucket. If Dreaming doesn't have a robust ignore filter, these agents might start hallucinating preferences based on old, irrelevant context.
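One way to picture a "sieve" is a score that decays with age, so a memory has to keep reappearing to survive. The sketch below is purely illustrative: the half-life formula, the `retention_score` and `prune` names, and the threshold are assumptions, not anything documented for Dreaming.

```python
def retention_score(mentions, days_since_last_seen, half_life_days=14.0):
    """Score a memory by how often it appeared, halved every `half_life_days`."""
    return mentions * 0.5 ** (days_since_last_seen / half_life_days)


def prune(memories, threshold=1.0):
    """Keep only memories whose decayed score is still at or above the threshold.

    `memories` maps text -> (mentions, days_since_last_seen).
    """
    return {
        text: stats
        for text, stats in memories.items()
        if retention_score(*stats) >= threshold
    }


memories = {
    "prefers TypeScript": (6, 7),     # mentioned often, seen last week
    "liked dark mode once": (1, 60),  # one-off remark from two months ago
}

print(prune(memories))
```

With these made-up numbers the frequently repeated preference survives and the one-off remark is discarded, which is the behavior a robust ignore filter would need.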

🔍 Deep Dive / Details

The Problem: Context Drift in Multi-Agent Workflows

When you build complex systems using Claude Managed Agents, you often spin up multiple agents. Agent A handles code generation; Agent B handles marketing copy; Agent C handles quality assurance.

In a traditional setup, memory is siloed. If Agent A fixes a bug based on specific project architecture, Agent B won't know about it unless explicitly told. Dreaming breaks these silos. It surfaces patterns that a single agent cannot see, such as:

- Recurring coding mistakes.

- Workflows agents converge on.

- Team preferences (e.g., "We always prefer TypeScript over Python").
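As a rough illustration of "patterns a single agent cannot see," the sketch below groups notes by which agents reported them and keeps only those seen by more than one. The `(agent, note)` tuple format and the `shared_patterns` helper are hypothetical; the actual data model behind Dreaming is not described in public detail here.

```python
from collections import defaultdict


def shared_patterns(observations, min_agents=2):
    """Return notes reported by at least `min_agents` distinct agents."""
    agents_by_note = defaultdict(set)
    for agent, note in observations:
        agents_by_note[note].add(agent)
    return {
        note: sorted(agents)
        for note, agents in agents_by_note.items()
        if len(agents) >= min_agents
    }


observations = [
    ("coder", "prefers TypeScript"),
    ("qa", "prefers TypeScript"),
    ("coder", "forgot null check"),
    ("coder", "forgot null check"),  # repeated, but only by one agent
    ("copywriter", "short headlines"),
]

print(shared_patterns(observations))
```

Only the TypeScript preference crosses agent boundaries in this sample, so it is the only candidate for the shared store.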

Availability

This is currently in a research preview. It is not generally available through the public API yet, so you cannot just toggle it on. If you want to try it, you have to request access through Anthropic’s platform.

Why It Matters for Developers

From a systems perspective, this is a step toward long-running agentic workflows. We are moving away from "Single Pass" AI (one prompt, one answer) to "Swarm Intelligence" (multiple agents working over weeks). Claude Dreaming is the glue that holds those weeks together without overflowing the context window.

🏗️ System Design Perspective (Simplified)

If you were to architect this behind the scenes, a "Dreaming" cycle might look something like this:

- Trigger: A cron job or event trigger runs every X hours.

- Data Ingestion: The system pulls logs from all active Managed Agents and vectorizes the memories.

- Filtering (The "Dream" Logic): An embedding model analyzes the semantic density. Is this information distinct, or is it derivative of existing memory? Each item then falls into one of three buckets:

  - Low Impact: Remove.

  - High Impact & New: Create a new memory node.

  - Refinement: Update existing nodes (e.g., correcting an older fact with new data).

- Insertion: The optimized memory graph is written to the long-term store used by all agents.
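The cycle above can be sketched in a few lines of Python. Treat this as a thought experiment under stated assumptions: `MemoryNode`, `dream_cycle`, and the thresholds are invented names, and word-overlap (Jaccard) similarity stands in for the embedding model so the example runs with only the standard library.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryNode:
    text: str
    sources: list = field(default_factory=list)


def similarity(a, b):
    """Jaccard word overlap. Assumption: a stand-in for embedding similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def dream_cycle(store, session_notes, min_words=3, merge_threshold=0.5):
    """Filter session notes, then refine or extend the shared store in place."""
    for session_id, note in session_notes:
        if len(note.split()) < min_words:
            continue  # Low impact: drop short chatter
        best = max(store, key=lambda n: similarity(n.text, note), default=None)
        if best is not None and similarity(best.text, note) >= merge_threshold:
            # Refinement: the newer note replaces the older fact
            best.text = note
            best.sources.append(session_id)
        else:
            # High impact and new: create a memory node
            store.append(MemoryNode(note, [session_id]))
    return store


store = [MemoryNode("deploys go to staging first", ["s1"])]
notes = [
    ("s2", "ok thanks"),
    ("s2", "deploys go to production first"),
    ("s3", "team prefers TypeScript over Python"),
]

dream_cycle(store, notes)
for node in store:
    print(node.text, node.sources)
```

In this run the chatter is dropped, the deployment fact is corrected in place, and the TypeScript preference becomes a new node. The trigger step is left out on purpose: in practice it would be whatever scheduler calls `dream_cycle`.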

🧑💻 Practical Value / What You Should Do Next

Immediate Steps for Developers

- Request Access: Go to Anthropic's console and explicitly request access to the "Dreaming" research preview for Managed Agents.

- Evaluate Scale: If you are struggling with "Context Drift" on long-running Jupyter notebooks or continuous dev tasks, this is worth evaluating.

- Audit Current Memory: Before flipping the switch, audit your memory store. If your data is currently garbage-in-garbage-out, Dreaming will just make the garbage more durable.
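What an audit looks like depends on how your memory store is laid out, which this article does not specify. As a minimal sketch, assuming each entry is an `(id, text, last_updated)` tuple, the hypothetical `audit` helper below flags duplicates (after normalizing case and whitespace) and entries older than a cutoff.

```python
from datetime import date


def audit(entries, today, max_age_days=90):
    """Flag duplicate and stale entries in a list of (id, text, last_updated)."""
    seen = {}
    report = {"duplicates": [], "stale": []}
    for entry_id, text, last_updated in entries:
        key = " ".join(text.lower().split())  # normalize case and whitespace
        if key in seen:
            report["duplicates"].append((entry_id, seen[key]))
        else:
            seen[key] = entry_id
        if (today - last_updated).days > max_age_days:
            report["stale"].append(entry_id)
    return report


entries = [
    ("m1", "Use TypeScript", date(2025, 4, 10)),
    ("m2", "use  typescript", date(2025, 5, 1)),
    ("m3", "Staging URL changed", date(2024, 11, 1)),
]

report = audit(entries, today=date(2025, 6, 1))
print(report)
```

Here `m2` is reported as a duplicate of `m1` and `m3` as stale. Cleaning up both kinds of entry before enabling any consolidation pass keeps the garbage from becoming durable.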

Who Should Avoid This For Now?

Don't over-engineer simple chatbots using Claude Managed Agents with Dreaming. If your agent only talks to the user once, you don't need a complex memory restructuring system. Use standard compaction first. Save Dreaming for the agents doing 6-month research projects.

⚔️ Comparison Section: Dreaming vs. Standard Context

| Feature | Standard Chat API (Single Agent) | Claude Managed Agents + Dreaming |
|---|---|---|
| Memory Scope | Single conversation session (Thread) | Cumulative history across sessions |
| Processing | Compaction (Removes text to save space) | Dreaming (Analyzes text to save value) |
| Multi-Agent | No (Each agent starts fresh) | Yes (Shared memory store) |
| Persistence | Lost when chat ends | Evolves and persists over time |

⚡ Key Takeaways

- Claude Dreaming is a scheduled memory refinement feature for Claude Managed Agents.

- It works around the context window limitation by distilling past sessions into high-signal memories.

- It enables multi-agent orchestration by allowing agents to share learned workflows and preferences.

- It is currently in Research Preview and available only by request.

🔗 Related Topics

- Best API Architectures for Multi-Agent Systems

- How Anthropic Scales Claude for Enterprise GPU Limits

- Understanding Claude’s 200k Context Window

- The Rise of "Agentic" AI in Software Development

🔮 Future Scope

Anthropic also announced they are doubling the 5-hour usage limit for Pro and Max subscribers. That suggests compute capacity has been a constraint. Because Dreaming needs extra compute to process session logs, usage limits or pricing may shift toward metered compute as features like this roll out.

We can expect similar background "sleep" or memory features from competitors building reasoning models and autonomous agents (such as OpenAI’s "o1" or Devin). The future of AI isn't just faster typing; it's smarter remembering.

❓ FAQ

Q: Is Claude Dreaming available to everyone right now? A: No. It is currently in research preview. Developers must request access to use it on Claude Managed Agents.

Q: How is Dreaming different from a database? A: A database is just storage. Dreaming is a processing layer that actively reads, filters, and organizes that storage so it stays semantically relevant for future agent sessions.

Q: Will this make my Claude agents smarter? A: Only for long-running tasks. If you are just debugging a small snippet of code once a day, the benefit will be negligible.

Q: Does this update affect the standard chat interface? A: No. Dreaming is strictly tied to Claude Managed Agents and the platform, not the simple text chat website/app.

Q: Why did Anthropic double the usage limits? A: Most likely to ease user frustration with limits during periods of high demand. If so, features like Dreaming, which need even more compute, will keep that pressure on.

🎯 Conclusion

The introduction of Claude Dreaming marks a transition from "Chatbots" to "Agents." For developers building data-centric applications, this removes a massive architectural friction point: the fear that the AI will forget the context of the project by tomorrow.

Whether you are in the managed infrastructure game or evaluating models, keep an eye on how Anthropic balances the compute cost of memory with the value it provides. This feature is a necessary evolution for production-grade AI systems.
